1. 简介

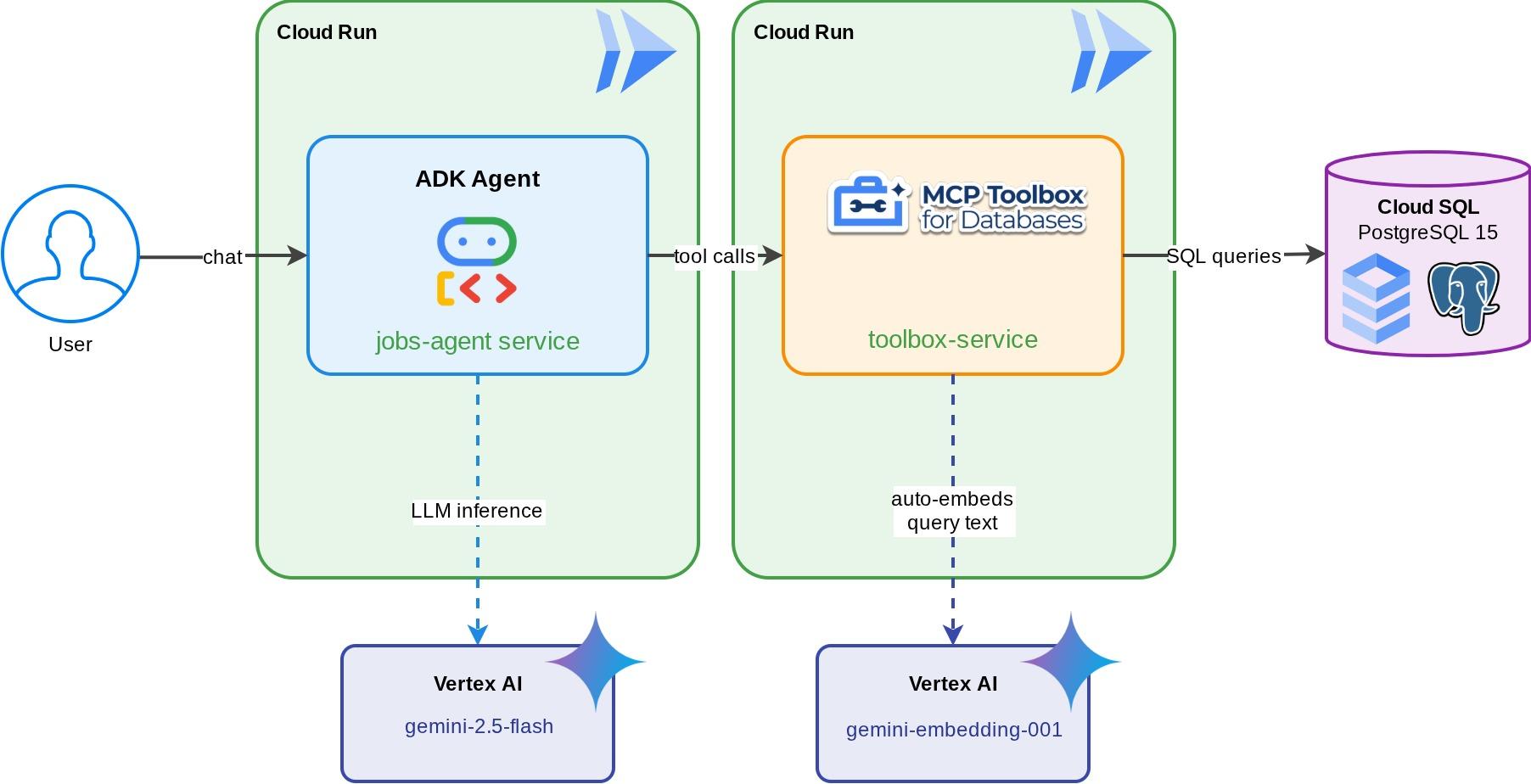

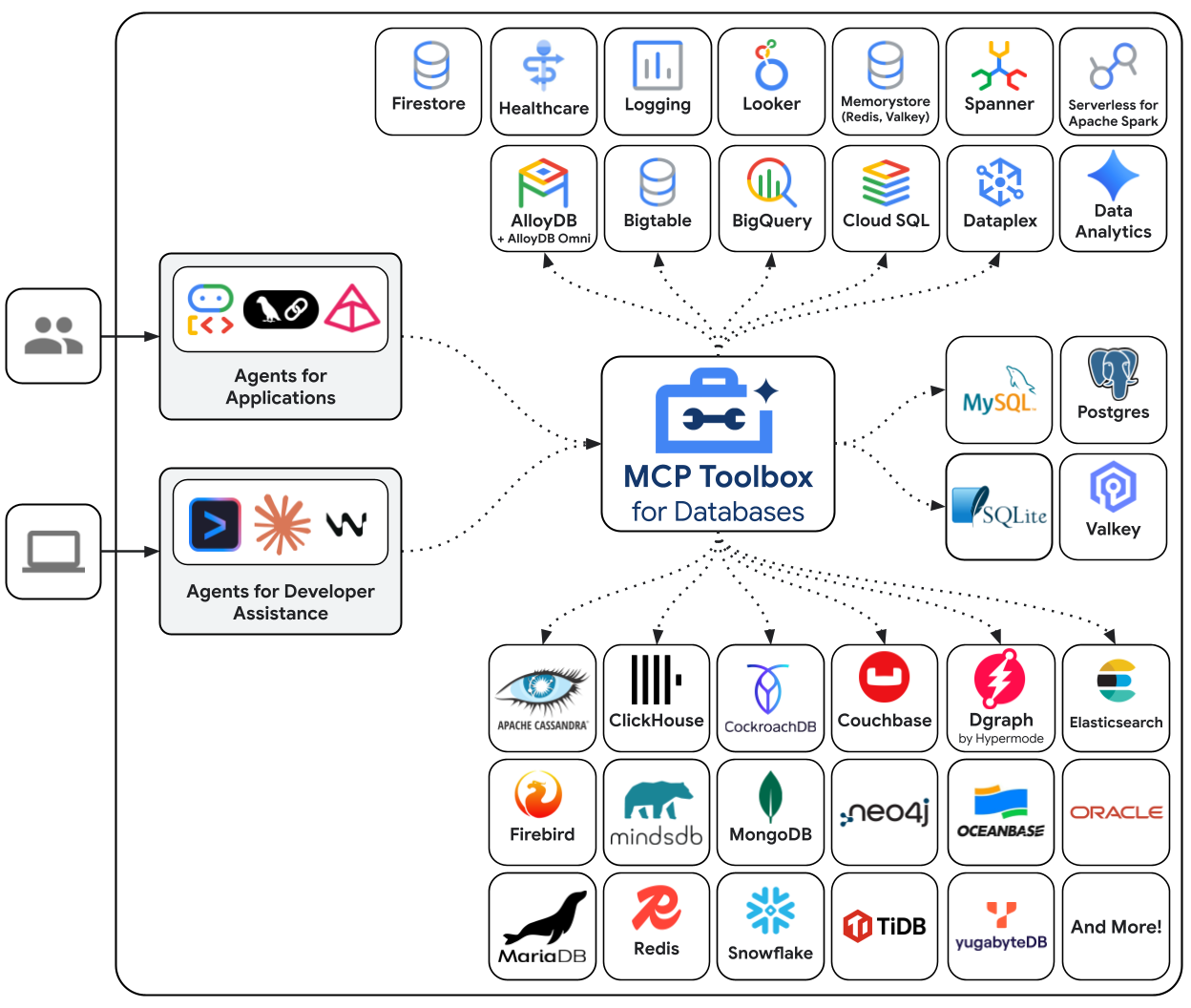

AI 代理的实用性取决于其可以访问的数据。大多数真实世界的数据都存储在数据库中,而将智能体连接到数据库通常意味着需要在智能体代码中编写连接管理、查询逻辑和嵌入流水线。每个需要数据库访问权限的代理都需要重复这项工作,并且每次更改查询都需要重新部署代理。

此 Codelab 展示了另一种方法。您可以在 YAML 文件中声明数据库工具(标准 SQL 查询、向量相似性搜索,甚至自动生成嵌入),而 MCP Toolbox for Databases 会作为 MCP 服务器处理所有数据库操作。代理代码保持最简:加载工具,让 Gemini 决定调用哪个工具。

构建内容

一个面向“TechJobs”的智能职位发布助理 - 一款由 Gemini 提供支持的 ADK 代理,可帮助开发者使用标准过滤条件(角色、技术栈)浏览技术招聘信息,并通过自然语言描述(例如“我想找一份远程工作,开发 AI 聊天机器人”)发现职位。该代理完全通过 MCP Toolbox for Databases 从 Cloud SQL PostgreSQL 数据库读取数据和向其中写入数据,该工具箱可处理所有数据库访问操作,包括为向量搜索自动生成嵌入。最后,工具箱和代理都将在 Cloud Run 上运行。

学习内容

- MCP (Model Context Protocol) 如何为 AI 智能体标准化工具访问,以及 MCP Toolbox for Databases 如何将此应用于数据库操作

- 将 MCP Toolbox for Databases 设置为 ADK 代理和 Cloud SQL PostgreSQL 之间的中间件

- 在

tools.yaml中以声明方式定义数据库工具 - 智能体中没有数据库代码 - 构建一个 ADK 智能体,该智能体使用

ToolboxToolset从正在运行的 Toolbox 服务器加载工具 - 使用 Cloud SQL 的内置

embedding()函数生成向量嵌入,并使用pgvector启用语义搜索 - 使用

valueFromParam功能在写入操作时自动提取向量 - 将 Toolbox 服务器和 ADK 智能体都部署到 Cloud Run

前提条件

- 具有试用结算账号的 Google Cloud 账号

- 基本熟悉 Python 和 SQL

- 如果之前使用过 Cloud Database 和 ADK,会有所帮助

2. 设置环境

此步骤将准备 Cloud Shell 环境、配置 Google Cloud 项目并克隆参考代码库。

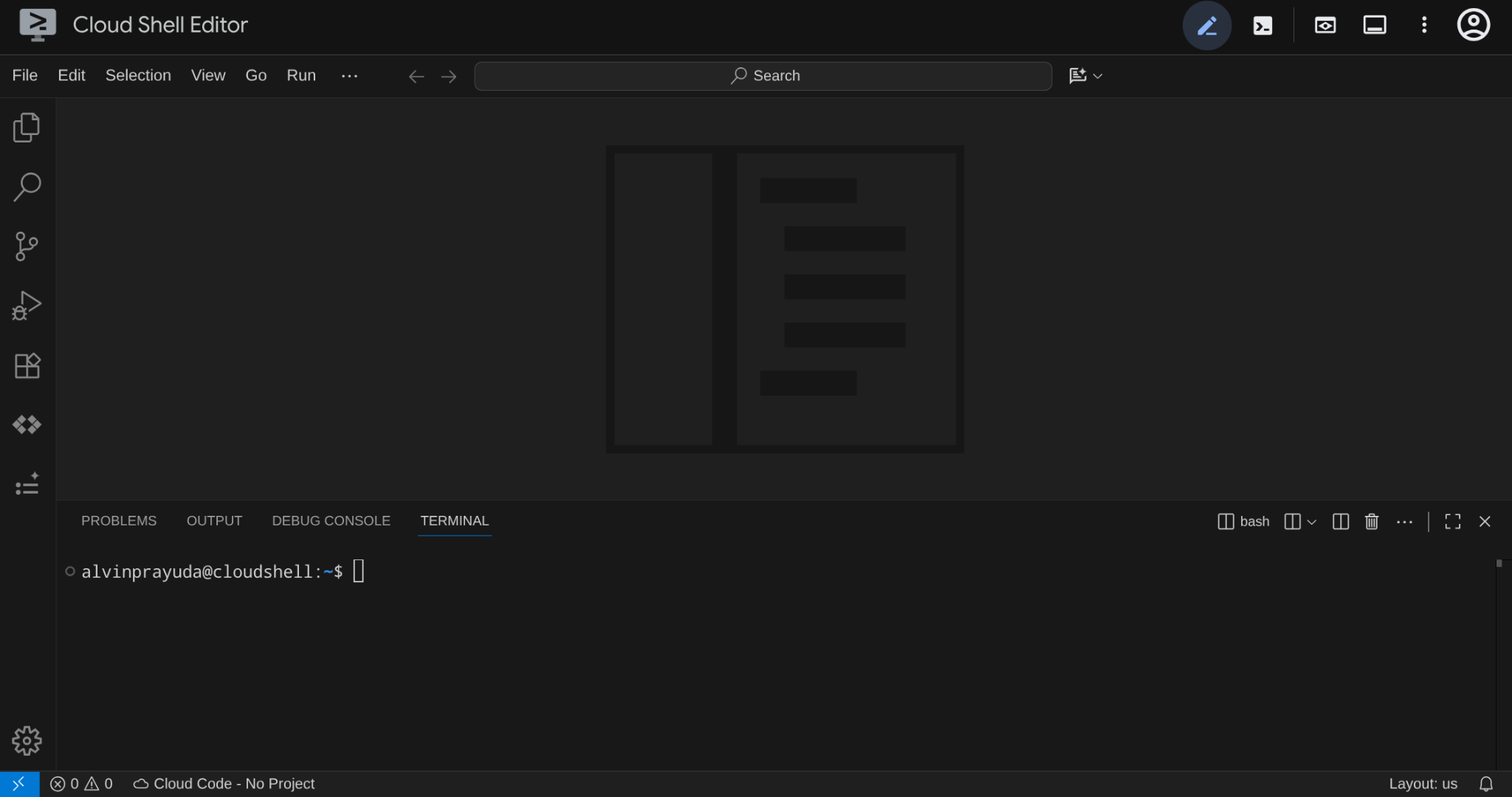

打开 Cloud Shell

在浏览器中打开 Cloud Shell。Cloud Shell 提供了一个预配置的环境,其中包含本 Codelab 所需的所有工具。在系统提示时点击授权,以

然后,依次点击“查看” ->“终端”,打开终端。您的界面应与此类似

这将是我们的主要界面,顶部是 IDE,底部是终端

设置工作目录

创建工作目录。您在此 Codelab 中编写的所有代码都位于此处:

mkdir -p ~/build-agent-adk-toolbox-cloudsql

cloudshell workspace ~/build-agent-adk-toolbox-cloudsql && cd ~/build-agent-adk-toolbox-cloudsql

之后,我们来准备几个目录,用于管理种子脚本和日志等内容

mkdir -p ~/build-agent-adk-toolbox-cloudsql/scripts

mkdir -p ~/build-agent-adk-toolbox-cloudsql/logs

设置您的 Google Cloud 项目

创建包含位置变量的 .env 文件:

# For Vertex AI / Gemini API calls

echo "GOOGLE_CLOUD_LOCATION=global" > .env

# For Cloud SQL, Cloud Run, Artifact Registry

echo "REGION=us-central1" >> .env

为简化终端中的项目设置,请将此项目设置脚本下载到您的工作目录中:

curl -sL https://raw.githubusercontent.com/alphinside/cloud-trial-project-setup/main/setup_verify_trial_project.sh -o setup_verify_trial_project.sh

运行脚本。它会验证您的试用结算账号,创建新项目(或验证现有项目),将项目 ID 保存到当前目录中的 .env 文件,并在 gcloud 中设置有效项目。

bash setup_verify_trial_project.sh && source .env

该脚本将:

- 验证您是否拥有有效的试用结算账号

- 检查

.env中是否存在现有项目(如果有) - 创建新项目或重复使用现有项目

- 将试用结算账号与您的项目相关联

- 将项目 ID 保存到

.env - 将项目设置为活跃

gcloud项目

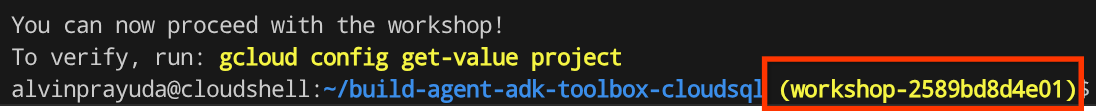

通过检查 Cloud Shell 终端提示中工作目录旁边的黄色文字,验证项目是否已正确设置。其中应显示您的项目 ID。

激活必需的 API

接下来,我们需要为将要交互的产品启用多个 API:

gcloud services enable \

aiplatform.googleapis.com \

sqladmin.googleapis.com \

compute.googleapis.com \

run.googleapis.com \

cloudbuild.googleapis.com \

artifactregistry.googleapis.com

- Vertex AI API (

aiplatform.googleapis.com) - 您的代理使用 Gemini 模型,而工具箱使用嵌入 API 进行向量搜索。 - Cloud SQL Admin API (

sqladmin.googleapis.com) - 您可以预配和管理 PostgreSQL 实例。 - Compute Engine API (

compute.googleapis.com) - 创建 Cloud SQL 实例时需要此 API。 - Cloud Run、Cloud Build、Artifact Registry - 在本 Codelab 后面的部署步骤中使用

3. 准备数据库初始化脚本

此步骤会开始创建 Cloud SQL 实例,并运行一个自动化设置脚本,该脚本会等待实例准备就绪,然后创建数据库、使用职位信息填充数据库并生成嵌入内容,所有这些操作都在一次完成。

首先,将数据库密码添加到 .env 文件并重新加载该文件:

echo "DB_PASSWORD=techjobs-pwd" >> .env

echo "DB_INSTANCE=jobs-instance" >> .env

echo "DB_NAME=jobs_db" >> .env

source .env

为实例和数据库创建创建 Bash 脚本

然后,使用以下命令创建 scripts/setup_database.sh 脚本

mkdir -p ~/build-agent-adk-toolbox-cloudsql/scripts

cloudshell edit scripts/setup_database.sh

然后,将以下代码复制到 scripts/setup_database.sh 文件中

#!/bin/bash

set -e

source .env

echo "================================================"

echo "Database Setup"

echo "================================================"

echo ""

# Step 1: Create Cloud SQL instance

echo "[1/5] Creating Cloud SQL instance..."

# Check if instance already exists

if gcloud sql instances describe "$DB_INSTANCE" --quiet >/dev/null 2>&1; then

echo " Instance already exists"

else

echo " Creating instance (takes 5-10 minutes)..."

gcloud sql instances create "$DB_INSTANCE" \

--database-version=POSTGRES_17 \

--tier=db-custom-1-3840 \

--edition=ENTERPRISE \

--region="$REGION" \

--root-password="$DB_PASSWORD" \

--enable-google-ml-integration \

--database-flags cloudsql.enable_google_ml_integration=on \

--quiet

fi

echo " ✓ Instance ready"

echo ""

# Step 2: Verify instance is ready

echo "[2/5] Verifying instance state..."

STATE=$(gcloud sql instances describe "$DB_INSTANCE" --format='value(state)')

if [ "$STATE" != "RUNNABLE" ]; then

echo "ERROR: Instance not ready (state: $STATE)"

exit 1

fi

echo " ✓ Instance is RUNNABLE"

echo ""

# Step 3: Grant IAM permissions

echo "[3/5] Granting Vertex AI permissions..."

SERVICE_ACCOUNT=$(gcloud sql instances describe "$DB_INSTANCE" \

--format='value(serviceAccountEmailAddress)')

if [ -z "$SERVICE_ACCOUNT" ]; then

echo "ERROR: Could not retrieve service account"

exit 1

fi

gcloud projects add-iam-policy-binding "$GOOGLE_CLOUD_PROJECT" \

--member="serviceAccount:$SERVICE_ACCOUNT" \

--role="roles/aiplatform.user" \

--quiet

echo " ✓ Permissions granted"

echo ""

# Step 4: Create database

echo "[4/5] Creating database..."

# Check if database already exists

if gcloud sql databases describe "$DB_NAME" \

--instance="$DB_INSTANCE" --quiet >/dev/null 2>&1; then

echo " Database already exists"

else

gcloud sql databases create "$DB_NAME" \

--instance="$DB_INSTANCE" \

--quiet

fi

echo " ✓ Database '$DB_NAME' ready"

echo ""

# Step 5: Seed database and generate embeddings

echo "[5/5] Seeding database and generating embeddings..."

SCRIPT_DIR="$(cd "$(dirname "${BASH_SOURCE[0]}")" && pwd)"

SETUP_SCRIPT="${SCRIPT_DIR}/setup_jobs_db.py"

if [ ! -f "$SETUP_SCRIPT" ]; then

echo "ERROR: Setup script not found: $SETUP_SCRIPT"

exit 1

fi

uv run "$SETUP_SCRIPT"

echo ""

echo "================================================"

echo "Setup complete!"

echo "================================================"

echo ""

为数据种子创建 Python 脚本

之后,使用以下命令创建初始脚本 Python 文件 scripts/setup_jobs_db.py

cloudshell edit scripts/setup_jobs_db.py

然后,将以下代码复制到 scripts/setup_jobs_db.py 文件中

import os

import sys

from pathlib import Path

from dotenv import load_dotenv

from google.cloud.sql.connector import Connector

import pg8000

import time

# Load environment variables from .env file

env_path = Path(__file__).parent.parent / '.env'

load_dotenv(env_path)

EMBEDDING_MODEL='gemini-embedding-001'

# Verify required environment variables

required_vars = ['GOOGLE_CLOUD_PROJECT', 'REGION', 'DB_PASSWORD']

missing_vars = [var for var in required_vars if not os.environ.get(var)]

if missing_vars:

print(f"ERROR: Missing required environment variables: {', '.join(missing_vars)}", file=sys.stderr)

print(f"", file=sys.stderr)

print(f"Expected .env file location: {env_path}", file=sys.stderr)

if not env_path.exists():

print(f"✗ File not found at that location", file=sys.stderr)

else:

print(f"✓ File exists but is missing the variables above", file=sys.stderr)

print(f"", file=sys.stderr)

print(f"Make sure your .env file contains:", file=sys.stderr)

for var in missing_vars:

print(f" {var}=<value>", file=sys.stderr)

sys.exit(1)

# Job listings data (fictional, for tutorial purposes only)

JOBS = [

("Senior Backend Engineer", "Stripe", "Backend", "Go, PostgreSQL, gRPC, Kubernetes", "$180-250K/year", "San Francisco, Hybrid", 3,

"Design and build high-throughput microservices powering payment infrastructure for millions of businesses. Optimize Go services for sub-100ms latency at scale, work with PostgreSQL and Redis for data persistence, and deploy on Kubernetes clusters handling billions of API calls."),

("Machine Learning Engineer", "Spotify", "Data/AI", "Python, TensorFlow, BigQuery, Vertex AI", "$170-230K/year", "Stockholm, Remote", 2,

"Build and deploy ML models for music recommendation and personalization systems serving hundreds of millions of listeners. Design feature pipelines in BigQuery, train models using distributed computing, and serve predictions through real-time APIs processing thousands of requests per second."),

("Frontend Engineer", "Vercel", "Frontend", "React, TypeScript, Next.js", "$140-190K/year", "Remote", 4,

"Build developer-facing dashboard interfaces and deployment tools used by millions of developers worldwide. Create responsive, accessible React components for project management, analytics, and real-time deployment monitoring with a focus on developer experience."),

("DevOps Engineer", "Datadog", "DevOps", "Terraform, GCP, Docker, Kubernetes, ArgoCD", "$160-220K/year", "New York, Hybrid", 2,

"Manage cloud infrastructure powering an observability platform used by thousands of engineering teams. Automate deployment pipelines with ArgoCD, manage multi-cloud Kubernetes clusters, and implement infrastructure-as-code with Terraform across production environments."),

("Mobile Engineer (Android)", "Grab", "Mobile", "Kotlin, Jetpack Compose, GraphQL", "$120-170K/year", "Singapore, Hybrid", 3,

"Develop features for a super-app serving millions of users across Southeast Asia. Build modern Android UIs with Jetpack Compose, integrate GraphQL APIs, and optimize app performance for diverse device capabilities and network conditions."),

("Data Engineer", "Airbnb", "Data", "Python, Apache Spark, Airflow, BigQuery", "$160-210K/year", "San Francisco, Hybrid", 2,

"Build data pipelines that process booking, search, and pricing data for a global travel marketplace. Design ETL workflows with Apache Spark and Airflow, maintain data warehouses in BigQuery, and ensure data quality for analytics and machine learning teams."),

("Full Stack Engineer", "Revolut", "Full Stack", "TypeScript, Node.js, React, PostgreSQL", "$130-180K/year", "London, Remote", 5,

"Build the next generation of financial products making banking accessible to millions of users across 35 countries. Develop real-time trading interfaces with React and WebSockets, build Node.js APIs handling market data streams, and design PostgreSQL schemas for financial transactions."),

("Site Reliability Engineer", "Cloudflare", "SRE", "Go, Prometheus, Grafana, GCP, Terraform", "$170-230K/year", "Austin, Hybrid", 2,

"Ensure 99.99% uptime for a global network handling millions of requests per second. Define SLOs, build monitoring dashboards with Prometheus and Grafana, manage incident response, and automate infrastructure scaling across 300+ data centers worldwide."),

("Cloud Architect", "Google Cloud", "Cloud", "GCP, Terraform, Kubernetes, Python", "$200-280K/year", "Seattle, Hybrid", 1,

"Help enterprises modernize their infrastructure on Google Cloud. Design multi-region architectures, lead migration projects from on-premises to GKE, and build reference implementations using Terraform and Cloud Foundation Toolkit."),

("Backend Engineer (Payments)", "Square", "Backend", "Java, Spring Boot, PostgreSQL, Kafka", "$160-220K/year", "San Francisco, Hybrid", 3,

"Build payment processing systems handling millions of transactions for businesses of all sizes. Design event-driven architectures using Kafka, implement idempotent payment flows with Spring Boot, and ensure PCI-DSS compliance across all services."),

("AI Engineer", "Hugging Face", "Data/AI", "Python, LangChain, Vertex AI, FastAPI, PostgreSQL", "$150-210K/year", "Paris, Remote", 2,

"Build AI-powered tools for the largest open-source ML community. Develop RAG pipelines that index and search model documentation, create conversational agents using LangChain, and deploy AI services with FastAPI on cloud infrastructure."),

("Platform Engineer", "Coinbase", "Platform", "Rust, Kubernetes, AWS, Terraform", "$180-250K/year", "Remote", 0,

"Build the infrastructure platform for a leading cryptocurrency exchange. Develop high-performance matching engines in Rust, manage Kubernetes clusters for microservices, and design CI/CD pipelines that enable rapid feature deployment with zero downtime."),

("QA Automation Engineer", "Shopify", "QA", "Python, Selenium, Cypress, Jenkins", "$110-160K/year", "Toronto, Hybrid", 3,

"Design and maintain automated test suites for a commerce platform powering millions of merchants. Build end-to-end test frameworks with Cypress and Selenium, integrate tests into Jenkins CI pipelines, and establish quality gates that prevent regressions in checkout and payment flows."),

("Security Engineer", "CrowdStrike", "Security", "Python, SIEM, Kubernetes, Penetration Testing", "$170-240K/year", "Austin, On-site", 1,

"Protect enterprise customers from cyber threats on a leading endpoint security platform. Conduct penetration testing, design security monitoring with SIEM tools, implement zero-trust networking in Kubernetes environments, and lead incident response for security events."),

("Product Engineer", "GitLab", "Full Stack", "Go, React, PostgreSQL, Redis, GCP", "$140-200K/year", "Remote", 4,

"Own features end-to-end for an all-in-one DevSecOps platform used by millions of developers. Build Go microservices for CI/CD pipelines, create React frontends for code review and project management, and collaborate with product managers to iterate on user-facing features using data-driven development."),

]

def get_connection():

"""Create a connection to Cloud SQL using the connector."""

project = os.environ['GOOGLE_CLOUD_PROJECT']

region = os.environ['REGION']

password = os.environ['DB_PASSWORD']

instance = os.environ['DB_INSTANCE']

database = os.environ['DB_NAME']

connector = Connector()

conn = connector.connect(

f"{project}:{region}:{instance}",

"pg8000",

user="postgres",

password=password,

db=database

)

return conn, connector

def create_schema(cursor):

"""Create extensions and jobs table."""

cursor.execute("CREATE EXTENSION IF NOT EXISTS google_ml_integration")

cursor.execute("CREATE EXTENSION IF NOT EXISTS vector")

cursor.execute("""

CREATE TABLE IF NOT EXISTS jobs (

id SERIAL PRIMARY KEY,

title VARCHAR NOT NULL,

company VARCHAR NOT NULL,

role VARCHAR NOT NULL,

tech_stack VARCHAR NOT NULL,

salary_range VARCHAR NOT NULL,

location VARCHAR NOT NULL,

openings INTEGER NOT NULL,

description TEXT NOT NULL,

description_embedding vector(3072)

)

""")

def seed_jobs(cursor, conn):

"""Insert job listings."""

cursor.execute("SELECT COUNT(*) FROM jobs")

existing_count = cursor.fetchone()[0]

if existing_count > 0:

print(f" {existing_count} jobs already exist, skipping seed")

return 0

cursor.executemany("""

INSERT INTO jobs (title, company, role, tech_stack, salary_range, location, openings, description)

VALUES (%s, %s, %s, %s, %s, %s, %s, %s)

""", JOBS)

conn.commit()

return len(JOBS)

def generate_embeddings(cursor, conn):

"""Generate embeddings using Cloud SQL's embedding() function."""

cursor.execute("SELECT COUNT(*) FROM jobs WHERE description_embedding IS NULL")

null_count = cursor.fetchone()[0]

if null_count == 0:

print(" All jobs already have embeddings")

return 0

cursor.execute(f"""

UPDATE jobs

SET description_embedding = embedding('{EMBEDDING_MODEL}', description)::vector

WHERE description_embedding IS NULL

""")

rows_updated = cursor.rowcount

conn.commit()

return rows_updated

def main():

conn, connector = get_connection()

cursor = conn.cursor()

try:

create_schema(cursor)

conn.commit()

seeded = seed_jobs(cursor, conn)

if seeded > 0:

print(f" ✓ Inserted {seeded} jobs")

# Waiting for vertex role propagation

time.sleep(60)

embedded = generate_embeddings(cursor, conn)

if embedded > 0:

print(f" ✓ Generated {embedded} embeddings")

except Exception as e:

print(f"ERROR: {e}", file=sys.stderr)

sys.exit(1)

finally:

cursor.close()

conn.close()

connector.close()

if __name__ == "__main__":

main()

现在,我们进入下一步

4. 创建并初始化数据库

现在,我们的脚本已准备好执行。我们需要使用 Python 来执行准备好的脚本,因此我们先准备好 Python

设置 Python 项目

uv 是一款使用 Rust 编写的快速 Python 软件包和项目管理器(请参阅 uv 文档)。此 Codelab 使用它来提高 Python 项目的维护速度并简化维护工作

初始化 Python 项目并添加所需的依赖项:

uv init

uv add cloud-sql-python-connector --extra pg8000

uv add python-dotenv

请注意,我们在此处使用 cloud-sql-python-connector Python SDK 来初始化与数据库实例的安全连接,该连接使用应用默认凭证进行身份验证。

执行设置脚本

现在,我们可以使用以下命令在后台运行设置脚本,并检查将写入 logs/atabase_setup.log 文件的控制台输出。在等待此操作完成期间,您可以继续执行下一部分

mkdir -p ~/build-agent-adk-toolbox-cloudsql/logs

bash scripts/setup_database.sh > logs/database_setup.log 2>&1 &

下载 Toolbox 二进制文件

在本教程中,我们将使用 MCP Toolbox,幸运的是,它附带了一个预构建的二进制文件,可在 Linux 环境中使用。现在,我们也在后台下载它,因为这需要相当长的时间。运行以下命令下载二进制文件,并检查 logs/toolbox_dl.log 上的输出日志。在等待此操作完成期间,您可以继续执行下一部分

cd ~/build-agent-adk-toolbox-cloudsql

curl -O https://storage.googleapis.com/mcp-toolbox-for-databases/v1.0.0/linux/amd64/toolbox > logs/toolbox_dl.log 2>&1 &

了解设置脚本 scripts/setup_database.sh

现在,我们来尝试了解之前配置的设置脚本。它会执行以下流程

- 我们在其中执行的第一个命令是

gcloud sql instances create命令,并带有以下标志

db-custom-1-3840是ENTERPRISE版中最小的专用核心 Cloud SQL 层(1 个 vCPU、3.75 GB RAM)。如需了解更多详情,请点击此处。Vertex AI 机器学习集成需要专用核心,共享核心层级(db-f1-micro、db-g1-small)不支持此功能。--root-password为默认postgres用户设置密码。--enable-google-ml-integration可启用 Cloud SQL 与 Vertex AI 的内置集成,让您可以使用embedding()函数直接从 SQL 调用嵌入模型。

- 验证实例是否已处于

RUNNABLE状态 - 使用

gcloud projects add-iam-policy-binding命令授予 Cloud SQL 实例的服务账号调用 Vertex AI 的权限。这是必需的,因为在为数据库设定初始值时,我们将使用内置的embedding()函数 - 创建数据库

- 执行初始配置脚本

setup_jobs_db.py脚本

了解种子脚本 scripts/setup_jobs_db.py

现在,我们来看一下初始配置脚本,该脚本会执行以下操作:

- 初始化与数据库实例的连接

- 安装两个 PostgreSQL 扩展程序:

google_ml_integration- 提供embedding()SQL 函数,该函数可直接从 SQL 调用 Vertex AI 嵌入模型。这是一个数据库级扩展程序,可让jobs_db内使用机器学习函数。您在创建实例期间设置的实例级标志 (--enable-google-ml-integration) 允许 Cloud SQL 虚拟机访问 Vertex AI;该扩展程序使 SQL 函数在此特定数据库中可用。vector(pgvector) - 添加了vector数据类型和距离运算符,用于存储和查询嵌入内容。

- 创建表,请注意

description_embedding列是vector(3072),即存储 3072 维向量的pgvector列。 - 播种初始作业数据

- 从

description字段生成嵌入数据,并通过embedding()函数使用内置 Vertex 集成填充description_embedding

embedding('gemini-embedding-001', description)- 直接从 SQL 调用 Vertex AI 的 Gemini 嵌入模型,并传递每个作业的description文本。这是您在初始脚本中安装的google_ml_integration扩展程序。::vector- 将返回的浮点数组转换为 pgvector 的vector类型,以便可以使用距离运算符存储和查询该数组。UPDATE跨所有 15 行运行,为每个职位说明生成一个 3072 维的嵌入。

这样会准备初始数据,供我们的代理访问

5. 配置 MCP Toolbox for Databases

此步骤介绍了 MCP Toolbox for Databases,将其配置为连接到您的 Cloud SQL 实例,并定义了两个标准 SQL 查询工具。

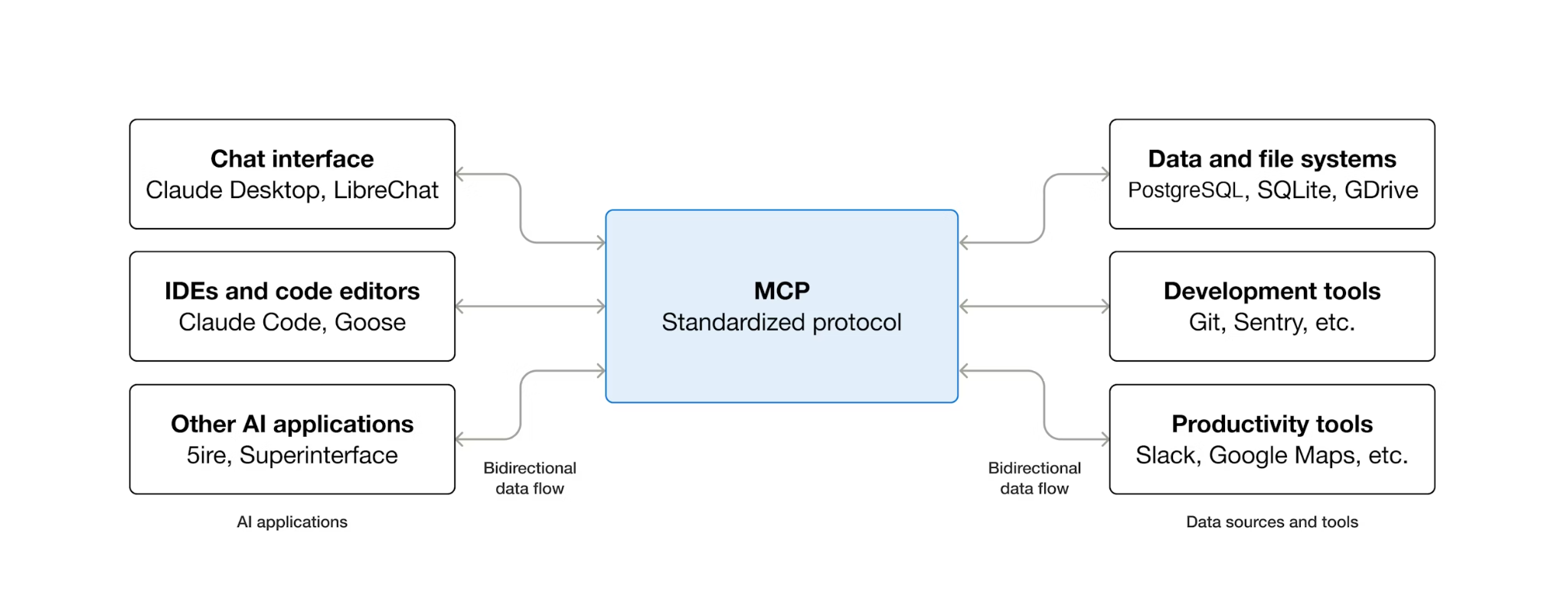

什么是 MCP?为什么要使用 Toolbox?

MCP(Model Context Protocol)是一种开放协议,可标准化 AI 智能体发现和与外部工具互动的方式。它定义了客户端-服务器模型:智能体托管 MCP 客户端,工具由 MCP 服务器公开。任何兼容 MCP 的客户端都可以使用任何兼容 MCP 的服务器,智能体无需为每个工具编写自定义集成代码。

MCP Toolbox for Databases 是一款专门为数据库访问而构建的开源 MCP 服务器。如果没有它,您需要编写 Python 函数来打开数据库连接、管理连接池、构建参数化查询以防止 SQL 注入、处理错误,并将所有这些代码嵌入到代理中。需要访问数据库的每个代理都会重复这项工作。更改查询意味着重新部署代理。

使用 Toolbox 时,您需要编写一个 YAML 文件。每种工具都映射到一条参数化 SQL 语句。Toolbox 可处理连接池、参数化查询、身份验证和可观测性。工具与代理分离 - 通过编辑 tools.yaml 并重启 Toolbox 来更新查询,无需修改代理代码。这些工具可用于 ADK、LangGraph、LlamaIndex 或任何 MCP 兼容框架。

编写工具配置

现在,我们需要在 Cloud Shell 编辑器中创建一个名为 tools.yaml 的文件,以设置工具配置

cloudshell edit tools.yaml

该文件使用多文档 YAML,每个以 --- 分隔的块都是一个独立的资源。每个资源都有一个声明其用途的 kind(sources 表示数据库连接,tools 表示可供代理调用的操作)和一个指定后端的 type(cloud-sql-postgres 表示来源,postgres-sql 表示基于 SQL 的工具)。工具通过 name 引用其来源,这样 Toolbox 就能知道要针对哪个连接池执行操作。环境变量使用 ${VAR_NAME} 语法,并在启动时解析。

现在,我们先将以下脚本复制到 tools.yaml 文件中

# tools.yaml

# --- Data Source ---

kind: source

name: jobs-db

type: cloud-sql-postgres

project: ${GOOGLE_CLOUD_PROJECT}

region: ${REGION}

instance: ${DB_INSTANCE}

database: ${DB_NAME}

user: postgres

password: ${DB_PASSWORD}

---

此脚本定义了以下资源:

- 来源 (

jobs-db) - 用于告知 Toolbox 如何连接到您的 Cloud SQL PostgreSQL 实例。cloud-sql-postgres类型在内部使用 Cloud SQL 连接器,可自动处理身份验证和安全连接。${GOOGLE_CLOUD_PROJECT}、${REGION}和${DB_PASSWORD}占位符在启动时从环境变量中解析。

接下来,将以下脚本附加到 tools.yaml 中 --- 符号的下方

# --- Tool 1: Search jobs by role and/or tech stack ---

kind: tool

name: search-jobs

type: postgres-sql

source: jobs-db

description: >-

Search for job listings by role category and/or tech stack.

Use this tool when the developer wants to browse listings

by role (e.g., Backend, Frontend, Data) or find jobs

using a specific technology. Both parameters accept an

empty string to match all values.

statement: |

SELECT title, company, role, tech_stack, salary_range, location, openings

FROM jobs

WHERE ($1 = '' OR LOWER(role) = LOWER($1))

AND ($2 = '' OR LOWER(tech_stack) LIKE '%' || LOWER($2) || '%')

ORDER BY title

LIMIT 10

parameters:

- name: role

type: string

description: "The role category to filter by (e.g., 'Backend', 'Frontend', 'Data/AI', 'DevOps'). Use empty string for all roles."

- name: tech_stack

type: string

description: "A technology to search for in the tech stack (partial match, e.g., 'Python', 'Kubernetes'). Use empty string for all tech stacks."

---

# --- Tool 2: Get full details for a specific job ---

kind: tool

name: get-job-details

type: postgres-sql

source: jobs-db

description: >-

Get full details for a specific job listing including its description,

salary range, location, and number of openings. Use this tool when the

developer asks about a particular job by title or company.

statement: |

SELECT title, company, role, tech_stack, salary_range, location, openings, description

FROM jobs

WHERE LOWER(title) LIKE '%' || LOWER($1) || '%'

OR LOWER(company) LIKE '%' || LOWER($1) || '%'

parameters:

- name: search_term

type: string

description: "The job title or company name to look up (partial match supported)."

---

此脚本定义了以下资源:

- 工具 1 和 2 (

search-jobs、get-job-details) - 标准 SQL 查询工具。每个映射都将工具名称(代理看到的内容)映射到参数化 SQL 语句(数据库执行的内容)。参数使用$1、$2位置占位符。Toolbox 会将这些语句作为预处理语句执行,从而防止 SQL 注入。

我们继续操作,在 tools.yaml 中 --- 符号下方附加以下脚本

# --- Embedding Model ---

kind: embeddingModel

name: gemini-embedding

type: gemini

model: gemini-embedding-001

project: ${GOOGLE_CLOUD_PROJECT}

location: ${GOOGLE_CLOUD_LOCATION}

dimension: 3072

---

此脚本定义了以下资源:

- 嵌入模型 (

gemini-embedding) - 将工具箱配置为调用 Gemini 的gemini-embedding-001模型来生成 3072 维的文本嵌入。该工具箱使用应用默认凭证 (ADC) 进行身份验证,因此在 Cloud Shell 或 Cloud Run 中无需使用 API 密钥。请注意,此处配置的dimension必须与之前配置的用于为数据库提供初始数据的dimension相同

我们继续操作,在 tools.yaml 中 --- 符号下方附加以下脚本

# --- Tool 3: Semantic search by description ---

kind: tool

name: search-jobs-by-description

type: postgres-sql

source: jobs-db

description: >-

Find jobs that match a natural language description of what the developer

is looking for. Use this tool when the developer describes their ideal job

using interests, work style, career goals, or project type rather than a

specific role or tech stack. Examples: "I want to work on AI chatbots,"

"a remote job at a fintech startup," "something involving infrastructure

and reliability."

statement: |

SELECT title, company, role, tech_stack, salary_range, location, description

FROM jobs

WHERE description_embedding IS NOT NULL

ORDER BY description_embedding <=> $1

LIMIT 5

parameters:

- name: search_query

type: string

description: "A natural language description of the kind of job the developer is looking for."

embeddedBy: gemini-embedding

---

此脚本定义了以下资源:

- 工具 3 (

search-jobs-by-description) - 一种向量搜索工具。search_query参数具有embeddedBy: gemini-embedding,该参数会指示 Toolbox 拦截原始文本,将其发送到嵌入模型,并在 SQL 语句中使用生成的向量。<=>运算符是 pgvector 的余弦距离,值越小表示说明越相似。

最后,将最后一个工具附加到 tools.yaml 中 --- 符号下方

# --- Tool 4: Add a new job listing with automatic embedding ---

kind: tool

name: add-job

type: postgres-sql

source: jobs-db

description: >-

Add a new job listing to the platform. Use this tool when a user asks

to post a job that is not currently listed.

statement: |

INSERT INTO jobs (title, company, role, tech_stack, salary_range, location, openings, description, description_embedding)

VALUES ($1, $2, $3, $4, $5, $6, CAST($7 AS INTEGER), $8, $9)

RETURNING title, company

parameters:

- name: title

type: string

description: "The job title (e.g., 'Senior Backend Engineer')."

- name: company

type: string

description: "The company name (e.g., 'Stripe', 'Spotify')."

- name: role

type: string

description: "The role category (e.g., 'Backend', 'Frontend', 'Data/AI', 'DevOps')."

- name: tech_stack

type: string

description: "Comma-separated list of technologies (e.g., 'Python, FastAPI, GCP')."

- name: salary_range

type: string

description: "The salary range (e.g., '$150-200K/year')."

- name: location

type: string

description: "Work location and arrangement (e.g., 'Remote')."

- name: openings

type: string

description: "The number of open positions."

- name: description

type: string

description: "A short description of the job (2-3 sentences)."

- name: description_vector

type: string

description: "Auto-generated embedding vector for the job description."

valueFromParam: description

embeddedBy: gemini-embedding

此脚本定义了以下资源:

- 工具 4 (

add-job) - 演示了矢量提取。description_vector参数有两个特殊字段: valueFromParam: description- 盒子会将description参数中的值复制到此参数中。LLM 永远不会看到此参数。embeddedBy: gemini-embedding- 工具箱会将复制的文本嵌入到向量中,然后再将其传递给 SQL。

结果:一个工具调用存储了原始说明文本及其向量嵌入,而代理对嵌入一无所知。

多文档 YAML 格式使用 --- 分隔每个资源。每个文档都有 kind、name 和 type 字段来定义其内容。总而言之,我们已配置以下所有内容:

- 定义源数据库

- 定义工具(工具 1 和 2),以使用标准过滤条件查询数据库

- 定义嵌入模型

- 定义用于对数据库执行向量搜索的工具(工具 3)

- 定义用于将向量数据注入(工具 4)到数据库的工具

6. 运行 MCP Toolbox 服务器

在上一步中,我们已经为 MCP Toolbox 设置了必要的配置。现在,我们已准备好运行服务器

验证植入的数据

在启动 Toolbox 之前,请先确认数据库设置已完成。使用以下命令创建 Python 脚本 scripts/verify_database.py

cloudshell edit scripts/verify_seed.py

然后,将以下代码复制到 scripts/verify_seed.py 文件中

#!/usr/bin/env python3

"""Verify the database has 15 jobs with embeddings."""

import os

import sys

from pathlib import Path

from dotenv import load_dotenv

from google.cloud.sql.connector import Connector

import pg8000

# Load environment variables

env_path = Path(__file__).parent.parent / '.env'

load_dotenv(env_path)

# Verify required environment variables

required_vars = ['GOOGLE_CLOUD_PROJECT', 'REGION', 'DB_PASSWORD', 'DB_INSTANCE', 'DB_NAME']

missing_vars = [var for var in required_vars if not os.environ.get(var)]

if missing_vars:

print(f"ERROR: Missing environment variables: {', '.join(missing_vars)}", file=sys.stderr)

sys.exit(1)

def verify_database():

"""Check that 15 jobs exist with embeddings."""

connector = Connector()

try:

project = os.environ['GOOGLE_CLOUD_PROJECT']

region = os.environ['REGION']

password = os.environ['DB_PASSWORD']

instance = os.environ['DB_INSTANCE']

database = os.environ['DB_NAME']

conn = connector.connect(

f"{project}:{region}:{instance}",

"pg8000",

user="postgres",

password=password,

db=database

)

cursor = conn.cursor()

# Count jobs and embeddings

cursor.execute("SELECT COUNT(*) FROM jobs")

job_count = cursor.fetchone()[0]

cursor.execute("SELECT COUNT(*) FROM jobs WHERE description_embedding IS NOT NULL")

embedding_count = cursor.fetchone()[0]

print(f"Jobs: {job_count}/15")

print(f"Embeddings: {embedding_count}/15")

cursor.close()

conn.close()

if job_count == 15 and embedding_count == 15:

print("\n✓ Database ready!")

return True

else:

print("\n✗ Database not ready")

return False

except Exception as e:

print(f"\nERROR: {e}", file=sys.stderr)

return False

finally:

connector.close()

if __name__ == "__main__":

success = verify_database()

sys.exit(0 if success else 1)

此脚本将检查职位发布数据的数量及其嵌入情况。使用以下命令运行脚本

uv run scripts/verify_seed.py

如果您看到以下终端输出,则表示数据已准备就绪

Jobs: 15/15 Embeddings: 15/15 ✓ Database ready!

启动 Toolbox 服务器

在之前的设置步骤中,我们已经下载了 toolbox 可执行文件。确保此二进制文件存在且已成功下载,否则,请下载该文件并等待下载完成

cd ~/build-agent-adk-toolbox-cloudsql

if [ ! -f toolbox ]; then

curl -O https://storage.googleapis.com/mcp-toolbox-for-databases/v1.0.0/linux/amd64/toolbox

fi

chmod +x toolbox

我们需要将 .env 变量公开给由 MCP 工具箱运行的子进程。运行以下命令以启动工具箱服务器,并将其控制台输出记录到 logs/mcp_toolbox.log 文件中

set -a; source .env; set +a

./toolbox --config tools.yaml --enable-api > logs/mcp_toolbox.log 2>&1 &

您应该会在 logs/mcp_toolbox.log 文件中看到确认服务器已准备就绪的输出,如下所示:

... INFO "Initialized 1 sources: jobs-db" ... INFO "Initialized 0 authServices: " ... INFO "Using Vertex AI backend for Gemini embedding" ... INFO "Initialized 1 embeddingModels: gemini-embedding" ... INFO "Initialized 4 tools: add-job, search-jobs, get-job-details, search-jobs-by-description" ... ... INFO "Server ready to serve!"

验证工具

查询 Toolbox API 以列出所有已注册的工具:

curl -s http://localhost:5000/api/toolset | uv run -m json.tool

您应该会看到工具及其说明和参数。如下所示

...

"search-jobs-by-description": {

"description": "Find jobs that match a natural language description of what the developer is looking for. Use this tool when the developer describes their ideal job using interests, work style, career goals, or project type rather than a specific role or tech stack. Examples: \"I want to work on AI chatbots,\" \"a remote job at a fintech startup,\" \"something involving infrastructure and reliability.\"",

"parameters": [

{

"name": "search_query",

"type": "string",

"required": true,

"description": "A natural language description of the kind of job the developer is looking for.",

"authSources": []

}

],

"authRequired": []

}

...

直接测试 search-jobs 工具:

curl -s -X POST http://localhost:5000/api/tool/search-jobs/invoke \

-H "Content-Type: application/json" \

-d '{"role": "Backend", "tech_stack": ""}' | jq '.result | fromjson'

响应应包含种子数据中的两个后端工程职位。

[

{

"title": "Backend Engineer (Payments)",

"company": "Square",

"role": "Backend",

"tech_stack": "Java, Spring Boot, PostgreSQL, Kafka",

"salary_range": "$160-220K/year",

"location": "San Francisco, Hybrid",

"openings": 3

},

{

"title": "Senior Backend Engineer",

"company": "Stripe",

"role": "Backend",

"tech_stack": "Go, PostgreSQL, gRPC, Kubernetes",

"salary_range": "$180-250K/year",

"location": "San Francisco, Hybrid",

"openings": 3

}

]

7. 构建 ADK 智能体

现在,我们将在此项目中使用 Python 中的 ADK,让我们添加所需的依赖项:

uv add google-adk==1.29.0 toolbox-adk==1.0.0

google-adk- Google 的智能体开发套件,包括 Gemini SDKtoolbox-adk- 针对 MCP Toolbox for Databases 的 ADK 集成。

创建代理目录结构

ADK 需要特定的文件夹布局:一个以代理命名的目录,其中包含 __init__.py、agent.py 和 .env。为了帮助您完成此操作,它内置了可快速建立结构的命令:

uv run adk create jobs_agent \

--model gemini-2.5-flash \

--project ${GOOGLE_CLOUD_PROJECT} \

--region ${GOOGLE_CLOUD_LOCATION}

您的目录现在应如下所示:

build-agent-adk-toolbox-cloudsql/ ├── jobs_agent/ │ ├── __init__.py │ ├── agent.py │ └── .env ├── logs ├── scripts └── ...

接下来,我们需要将 ADK 代理集成到正在运行的 Toolbox 服务器,并测试所有四种工具 - 标准查询、语义搜索和向量提取。代理代码非常简单:所有数据库逻辑都位于 tools.yaml 中。

配置代理的环境

ADK 从 shell 环境中读取 GOOGLE_GENAI_USE_VERTEXAI、GOOGLE_CLOUD_PROJECT 和 GOOGLE_CLOUD_LOCATION,您已在之前的步骤中设置了这些变量。唯一特定于代理的变量是 TOOLBOX_URL - 将其附加到代理的 .env 文件:

echo -e "\nTOOLBOX_URL=http://127.0.0.1:5000" >> jobs_agent/.env

更新代理模块

在 Cloud Shell Editor 中打开 jobs_agent/agent.py

cloudshell edit jobs_agent/agent.py

并将内容覆盖为以下代码:

# jobs_agent/agent.py

import os

from google.adk.agents import LlmAgent

from toolbox_adk import ToolboxToolset

TOOLBOX_URL = os.environ.get("TOOLBOX_URL", "http://127.0.0.1:5000")

toolbox = ToolboxToolset(TOOLBOX_URL)

root_agent = LlmAgent(

name="jobs_agent",

model="gemini-2.5-flash",

instruction="""You are a helpful assistant at "TechJobs," a tech job listing platform.

Your job:

- Help developers browse job listings by role or tech stack.

- Provide full details about specific positions, including salary range and number of openings.

- Recommend jobs based on natural language descriptions of what the developer is looking for.

- Add new job listings to the platform when asked.

When a developer asks about a specific job by title or company, use the get-job-details tool.

When a developer asks for a specific role category or tech stack, use the search-jobs tool.

When a developer describes what kind of job they want — by interest area, work style,

career goals, or project type — use the search-jobs-by-description tool for semantic search.

When in doubt between search-jobs and search-jobs-by-description, prefer

search-jobs-by-description — it searches job descriptions and finds more relevant matches.

If a position has no openings (openings is 0), let the developer know

and suggest similar alternatives from the search results.

Be conversational, knowledgeable, and concise.""",

tools=[toolbox],

)

请注意,此处没有数据库代码 - ToolboxToolset 在启动时连接到 Toolbox 服务器并加载所有可用工具。代理按名称调用工具;工具箱将这些调用转换为针对 Cloud SQL 的 SQL 查询。

对于本地开发,TOOLBOX_URL 环境变量默认为 http://127.0.0.1:5000。稍后部署到 Cloud Run 时,您可以使用 Toolbox 服务的 Cloud Run 网址替换此网址,而无需更改任何代码。

该指令目前仅引用了两个标准工具(search-jobs 和 get-job-details)。您将在下一步中添加语义搜索和提取工具,届时会扩展该指令。

测试代理

启动 ADK 开发者界面:

cd ~/build-agent-adk-toolbox-cloudsql

uv run adk web --allow_origins "regex:https://.*\.cloudshell\.dev"

使用 Cloud Shell 的网页预览功能打开终端中显示的网址(通常为 http://localhost:8000),或按住 Ctrl 键并点击终端中显示的网址。从左上角的智能体下拉菜单中选择 jobs_agent。

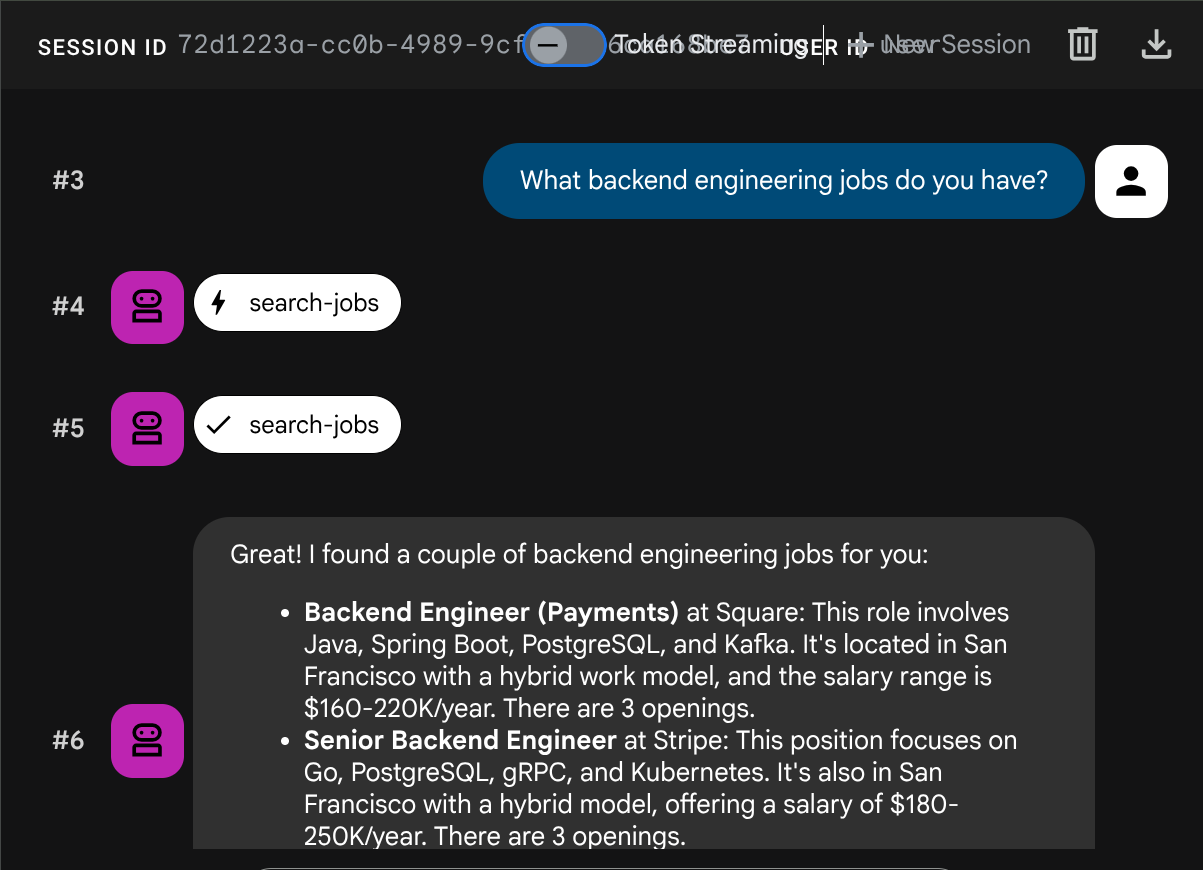

测试标准查询

不妨试试以下提示,验证标准 SQL 工具:

What backend engineering jobs do you have?

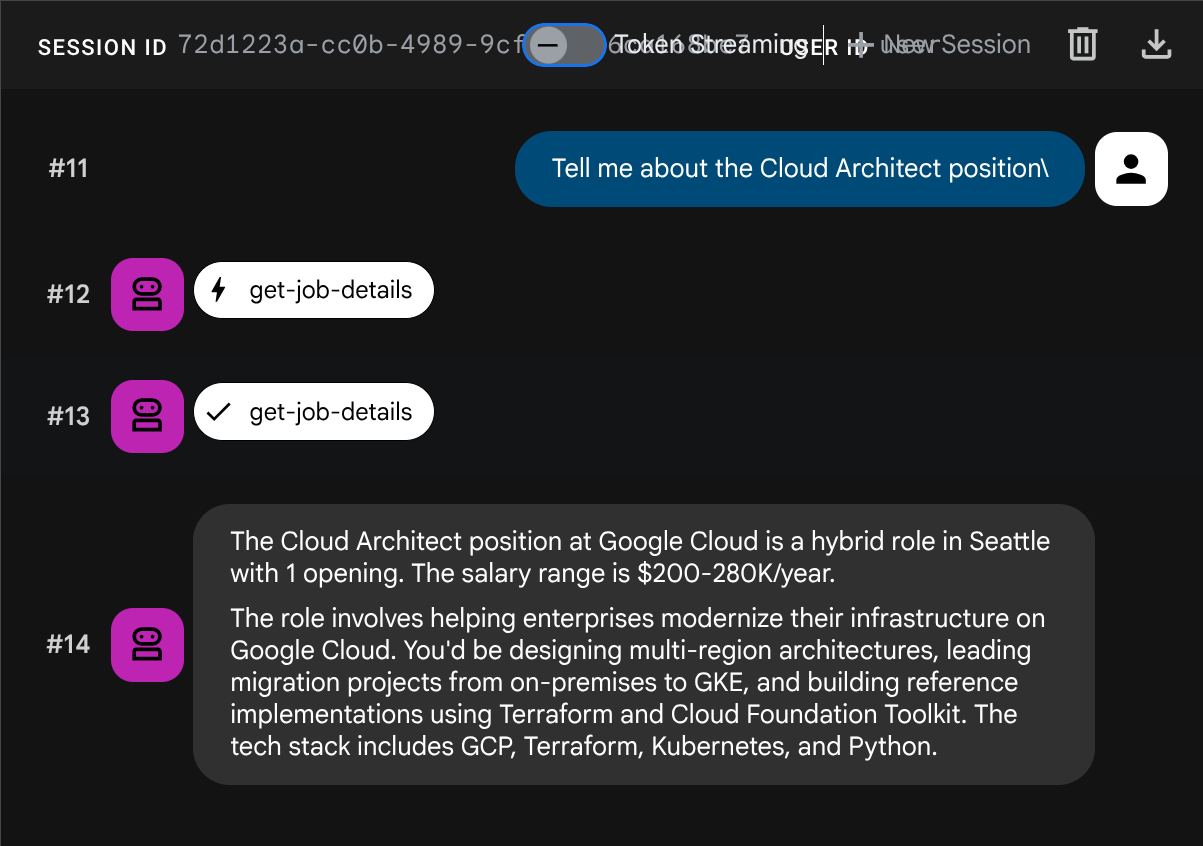

Any jobs using Kubernetes?

Tell me about the Cloud Architect position

测试语义搜索

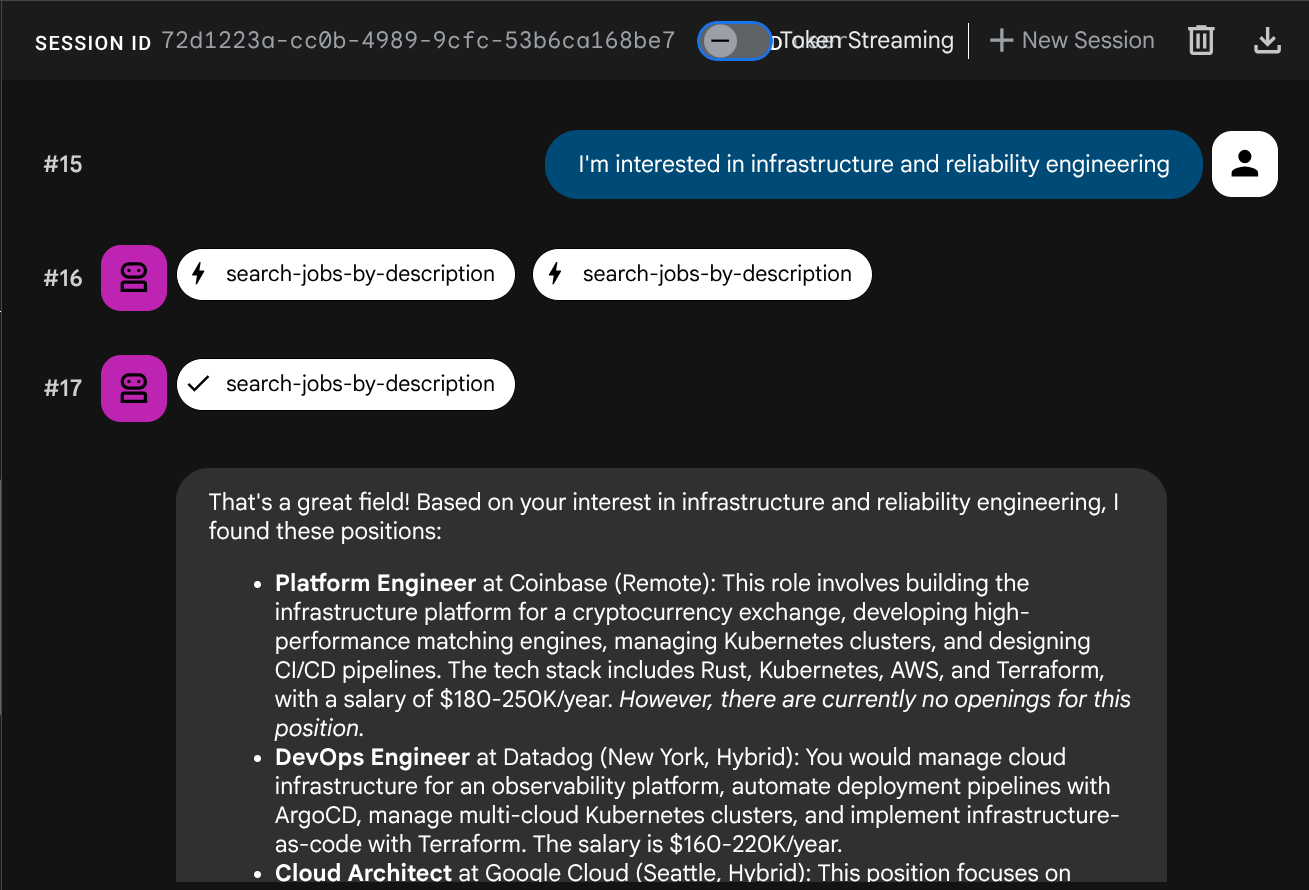

尝试使用未映射到特定角色或技术堆栈的自然语言描述:

I want a remote job where I can work on AI and machine learning

Find me something in fintech with good work-life balance

I'm interested in infrastructure and reliability engineering

智能体将尝试根据查询类型选择合适的工具:结构化过滤条件通过 search-jobs,自然语言描述通过 search-jobs-by-description。

测试向量提取

让代理添加新作业:

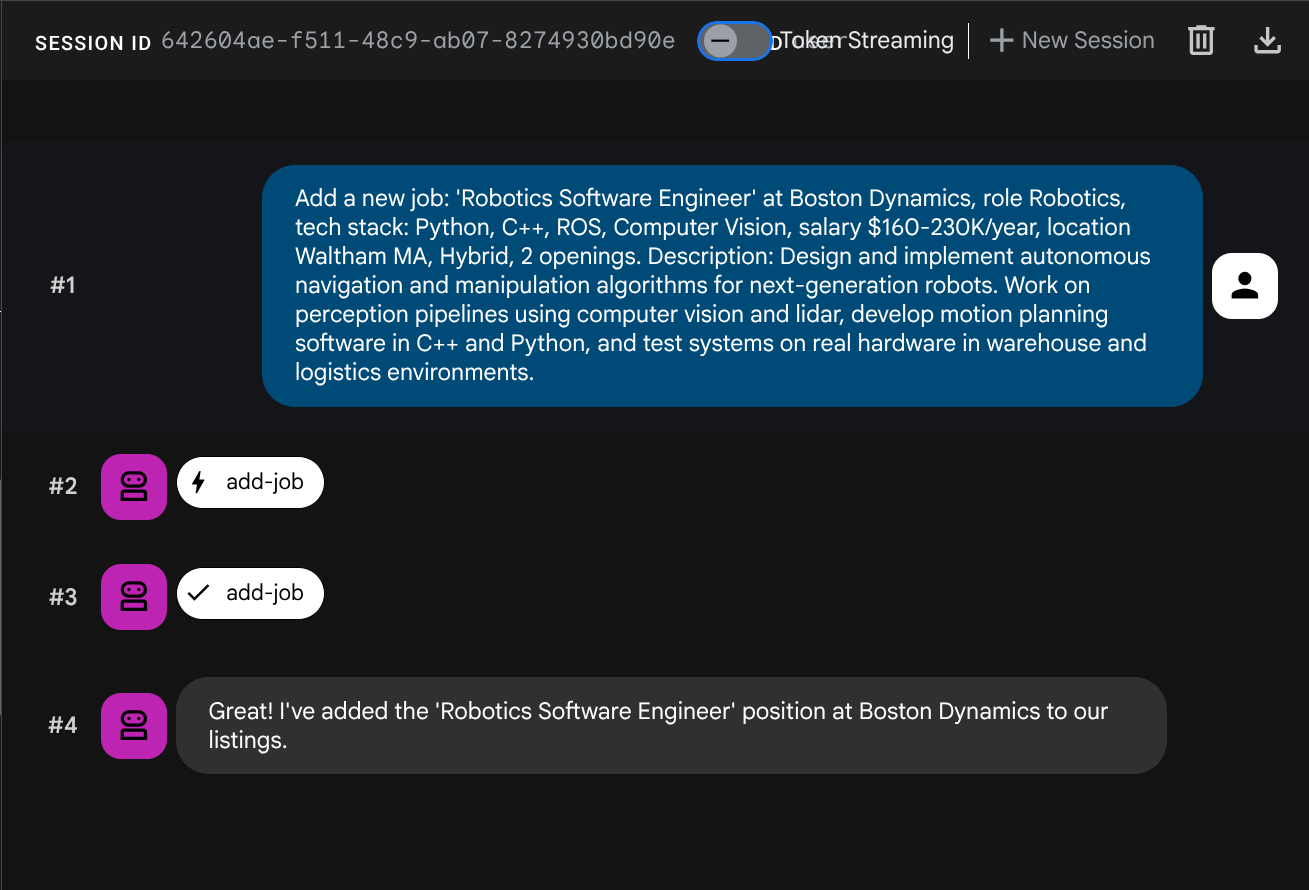

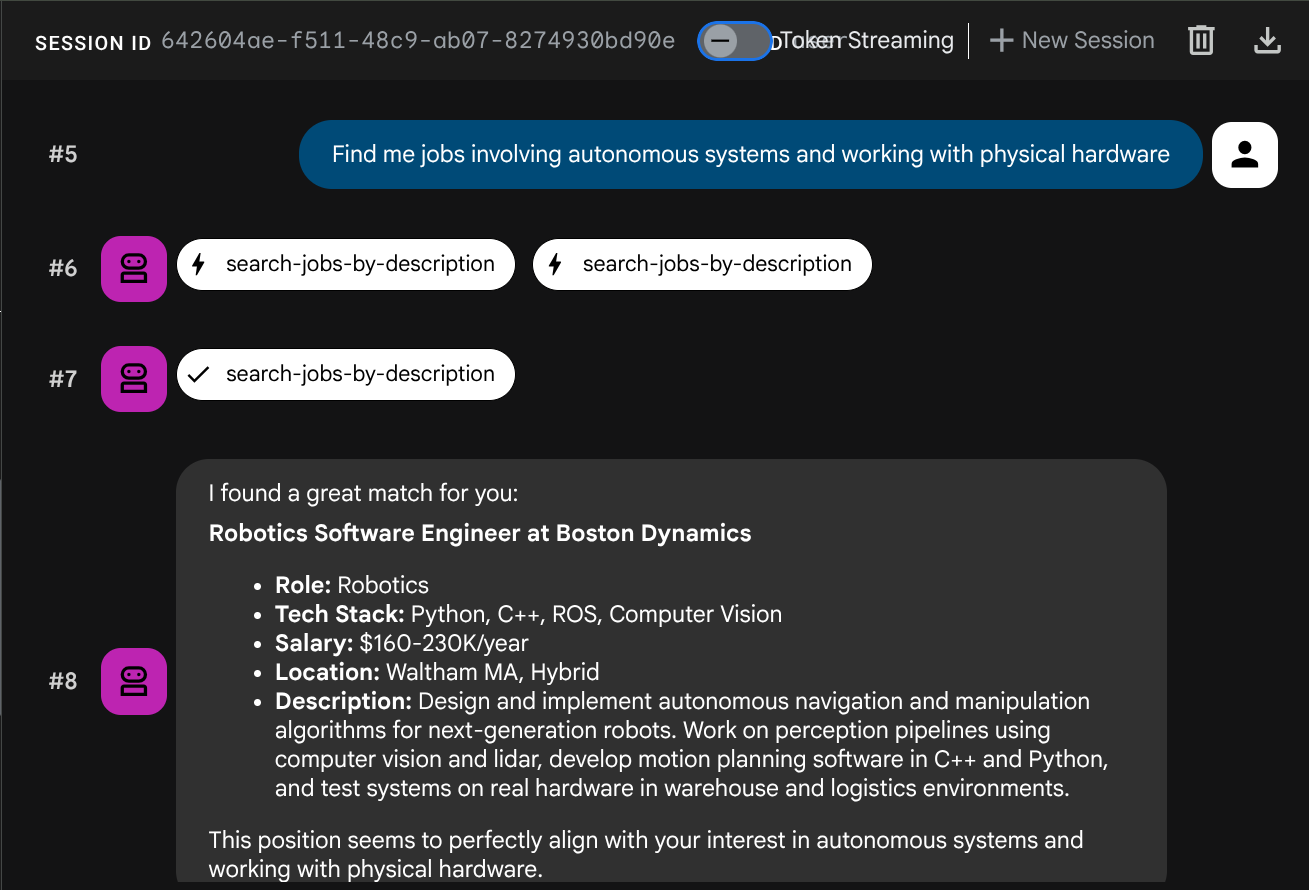

Add a new job: 'Robotics Software Engineer' at Boston Dynamics, role Robotics, tech stack: Python, C++, ROS, Computer Vision, salary $160-230K/year, location Waltham MA, Hybrid, 2 openings. Description: Design and implement autonomous navigation and manipulation algorithms for next-generation robots. Work on perception pipelines using computer vision and lidar, develop motion planning software in C++ and Python, and test systems on real hardware in warehouse and logistics environments.

现在,尝试搜索该内容:

Find me jobs involving autonomous systems and working with physical hardware

嵌入是在 INSERT 期间自动生成的,无需单独执行任何步骤。

现在,您已经拥有一个利用 ADK、MCP Toolbox 和 CloudSQL 的完整且可正常运行的智能体 RAG 应用。恭喜!接下来,我们将进一步把这些应用部署到 Cloud Run!

现在,让我们在继续操作之前,按两次 Ctrl+C 来终止进程,从而停止开发者界面。

8. 部署到 Cloud Run

代理和工具箱在本地运行。此步骤会将两者都部署为 Cloud Run 服务,以便通过互联网访问它们。Toolbox 服务在 Cloud Run 上作为 MCP 服务器运行,而代理服务会连接到该服务。

准备部署工具箱

为 Toolbox 服务创建部署目录:

cd ~/build-agent-adk-toolbox-cloudsql

mkdir -p deploy-toolbox

cp toolbox tools.yaml deploy-toolbox/

为 Toolbox 创建 Dockerfile。在 Cloud Shell Editor 中打开 deploy-toolbox/Dockerfile:

cloudshell edit deploy-toolbox/Dockerfile

并将以下脚本复制到其中

# deploy-toolbox/Dockerfile

FROM debian:bookworm-slim

RUN apt-get update && apt-get install -y ca-certificates && rm -rf /var/lib/apt/lists/*

WORKDIR /app

COPY toolbox tools.yaml ./

RUN chmod +x toolbox

EXPOSE 8080

CMD ["./toolbox", "--config", "tools.yaml", "--enable-api", "--address", "0.0.0.0", "--port", "8080"]

工具箱二进制文件和 tools.yaml 打包到最小的 Debian 映像中。Cloud Run 会将流量路由到端口 8080。

部署 Toolbox 服务

cd ~/build-agent-adk-toolbox-cloudsql

gcloud run deploy toolbox-service \

--source deploy-toolbox/ \

--region $REGION \

--set-env-vars "DB_PASSWORD=$DB_PASSWORD,DB_INSTANCE=$DB_INSTANCE,DB_NAME=$DB_NAME,GOOGLE_CLOUD_PROJECT=$GOOGLE_CLOUD_PROJECT,REGION=$REGION,GOOGLE_CLOUD_LOCATION=$GOOGLE_CLOUD_LOCATION" \

--allow-unauthenticated \

--quiet > logs/deploy_toolbox.log 2>&1 &

此命令会将源代码提交给 Cloud Build,构建容器映像,将其推送到 Artifact Registry,并将其部署到 Cloud Run。这需要几分钟时间 - 我们可以检查 logs/deploy_toolbox.log 文件中的部署过程日志

准备智能体以进行部署

在构建 Toolbox 的同时,设置代理的部署文件。

在项目根目录中创建 Dockerfile。在 Cloud Shell Editor 中打开 Dockerfile:

cloudshell edit Dockerfile

然后,复制以下内容

# Dockerfile

FROM ghcr.io/astral-sh/uv:python3.12-trixie-slim

WORKDIR /app

COPY pyproject.toml ./

COPY uv.lock ./

RUN uv sync --no-dev

COPY jobs_agent/ jobs_agent/

EXPOSE 8080

CMD ["uv", "run", "adk", "web", "--host", "0.0.0.0", "--port", "8080"]

此 Dockerfile 使用 ghcr.io/astral-sh/uv 作为基础映像,其中预安装了 Python 和 uv,无需通过 pip 单独安装 uv。

创建一个 .dockerignore 文件,以从容器映像中排除不必要的文件:

cloudshell edit .dockerignore

然后将以下脚本复制到其中

# .dockerignore

.venv/

__pycache__/

*.pyc

.env

jobs_agent/.env

toolbox

tools.yaml

seed.sql

deploy-toolbox/

部署代理服务

等待工具箱部署完成。在 logs/deploy_toolbox.log 上再次检查部署流程,以验证该流程。然后,使用以下命令检索其 Cloud Run 网址

TOOLBOX_URL=$(gcloud run services describe toolbox-service \

--region=$REGION \

--format='value(status.url)')

echo "Toolbox URL: $TOOLBOX_URL"

您将看到类似于以下内容的输出

Toolbox URL: https://toolbox-service-xxxxxx-xx.a.run.app

接下来,我们验证已部署的工具箱是否正常运行:

curl -s "$TOOLBOX_URL/api/toolset" | python3 -m json.tool | head -5

如果输出内容与此示例类似,则表示部署已成功

{

"serverVersion": "1.0.0+binary.linux.amd64.c5524d3",

"tools": {

"add-job": {

"description": "Add a new job listing to the platform. Use this tool when a user asks to post a job that is not currently listed.",

接下来,我们来部署代理,并将 Toolbox 网址作为环境变量传递:

cd ~/build-agent-adk-toolbox-cloudsql

gcloud run deploy jobs-agent \

--source . \

--region $REGION \

--set-env-vars "TOOLBOX_URL=$TOOLBOX_URL,GOOGLE_CLOUD_PROJECT=$GOOGLE_CLOUD_PROJECT,GOOGLE_CLOUD_LOCATION=$GOOGLE_CLOUD_LOCATION,GOOGLE_GENAI_USE_VERTEXAI=TRUE" \

--allow-unauthenticated \

--quiet

代理代码从环境(您之前已设置)中读取 TOOLBOX_URL。在本地,它指向 http://127.0.0.1:5000;在 Cloud Run 上,它指向 Toolbox 服务网址。无需更改任何代码。

测试已部署的代理

检索代理的 Cloud Run 网址:

AGENT_URL=$(gcloud run services describe jobs-agent \

--region=$REGION \

--format='value(status.url)')

echo "Agent URL: $AGENT_URL"

在浏览器中打开该网址。ADK 开发者界面会加载,该界面与您一直在本地使用的界面相同,现在在 Cloud Run 上运行。

从下拉菜单中选择 jobs_agent 并进行测试:

What backend engineering jobs do you have?

I want a remote job working on AI and machine learning

这两个查询都通过已部署的服务运行:Cloud Run 上的代理调用 Cloud Run 上的 Toolbox,后者查询 Cloud SQL。

9. 恭喜 / 清理

您已构建并部署了一个智能职位公告助理,该助理使用 MCP Toolbox for Databases 将 ADK 代理和 Cloud SQL PostgreSQL 连接起来,并支持标准 SQL 查询和语义向量搜索。

您学到的内容

- MCP 如何为 AI 智能体标准化工具访问,以及 MCP Toolbox for Databases 如何将此功能专门应用于数据库操作 - 使用声明性 YAML 配置替换自定义数据库代码

- 如何使用

cloud-sql-postgres源类型将 Cloud SQL PostgreSQL 配置为工具箱数据源 - 如何使用参数化语句定义可防止 SQL 注入的标准 SQL 查询工具

- 如何使用 pgvector 和

gemini-embedding-001启用向量搜索,并使用embeddedBy参数自动嵌入查询 valueFromParam如何实现自动向量提取 - LLM 提供文本说明,而 Toolbox 会在后台复制、嵌入向量并将其与文本一起存储- ADK 的

ToolboxToolset如何从正在运行的 Toolbox 服务器加载工具,从而最大限度地减少代理代码并完全分离数据库逻辑 - 如何将 Toolbox MCP 服务器和 ADK 代理作为单独的服务部署到 Cloud Run

清理

为避免因本 Codelab 中创建的资源导致您的 Google Cloud 账号产生费用,您可以删除各个资源,也可以删除整个项目。

方法 1:删除项目(推荐)

最简单的清理方法是删除项目。这会移除与项目关联的所有资源。

gcloud projects delete $GOOGLE_CLOUD_PROJECT

方法 2:删除单个资源

如果您想保留项目,但仅移除在本 Codelab 中创建的资源,请执行以下操作:

gcloud run services delete jobs-agent --region=$REGION --quiet

gcloud run services delete toolbox-service --region=$REGION --quiet

gcloud sql instances delete jobs-instance --quiet

gcloud artifacts repositories delete cloud-run-source-deploy --location=$REGION --quiet 2>/dev/null