About this codelab

1. Overview

BigQuery is Google's fully managed, low-cost analytics data warehouse. BigQuery is NoOps: there is no infrastructure to manage and you don't need a database administrator, so you can focus on analyzing data to find meaningful insights, use familiar SQL, and take advantage of our pay-as-you-go model.

In this codelab, you will use the Google Cloud Client Libraries for Python to query BigQuery public datasets with Python.

What you'll learn

- How to use Cloud Shell

- How to enable the BigQuery API

- How to authenticate API requests

- How to install the Python client library

- How to query the works of Shakespeare

- How to query the GitHub dataset

- How to adjust caching and display statistics

What you'll need


2. Setup and requirements

Self-paced environment setup

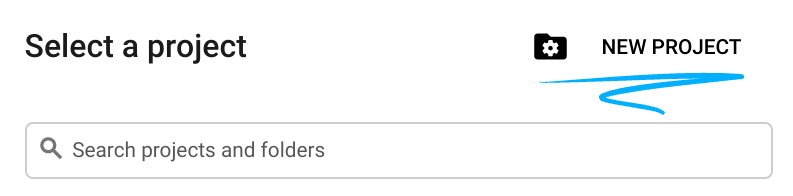

- Sign in to the Google Cloud Console and create a new project or reuse an existing one. If you don't already have a Gmail or Google Workspace account, you must create one.

- The Project name is the display name for this project's participants. It is a character string that is not used by Google APIs, and you can update it at any time.

- The Project ID must be unique across all Google Cloud projects and is immutable (it cannot be changed after it has been set). The Cloud Console auto-generates a unique string, and usually you don't care what it is. In most codelabs you'll need to reference the Project ID (typically identified as PROJECT_ID), so if you don't like the generated ID, you can generate another random one, or try your own and see whether it's available. The ID is then "frozen" once the project is created.

- There is a third value, a Project Number, which some APIs use. Learn more about all three of these values in the documentation.

- Next, you'll need to enable billing in the Cloud Console in order to use Cloud resources/APIs. Running through this codelab shouldn't cost much, if anything at all. To shut down resources so you don't incur billing beyond this tutorial, follow the "clean-up" instructions found at the end of the codelab. New users of Google Cloud are eligible for the $300 USD Free Trial program.

Start Cloud Shell

While Google Cloud can be operated remotely from your laptop, in this codelab you will use Google Cloud Shell, a command line environment running in the Cloud.

Activate Cloud Shell

- From the Cloud Console, click Activate Cloud Shell.

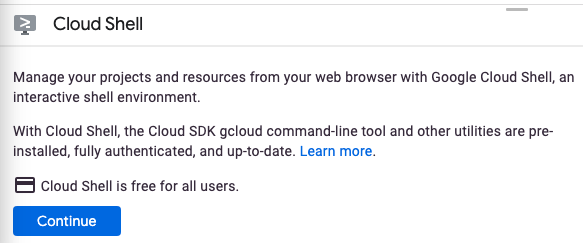

If you've never started Cloud Shell before, you're presented with an intermediate screen (below the fold) describing what it is. If that's the case, click Continue (and you won't ever see it again).

It should only take a few moments to provision and connect to Cloud Shell.

This virtual machine is loaded with all the development tools you need. It offers a persistent 5 GB home directory and runs in Google Cloud, greatly enhancing network performance and authentication. Much, if not all, of your work in this codelab can be done with simply a browser or your Chromebook.

Once connected to Cloud Shell, you should see that you are already authenticated and that the project is already set to your project ID.

- Run the following command in Cloud Shell to confirm that you are authenticated:

gcloud auth list

Command output

Credentialed Accounts

ACTIVE ACCOUNT

* <my_account>@<my_domain.com>

To set the active account, run:

$ gcloud config set account `ACCOUNT`

- Run the following command in Cloud Shell to confirm that the gcloud command knows about your project:

gcloud config list project

Command output

[core]
project = <PROJECT_ID>

If it is not, you can set it with this command:

gcloud config set project <PROJECT_ID>

Command output

Updated property [core/project].

3. Enable the API

The BigQuery API should be enabled by default in all Google Cloud projects. You can check whether this is true with the following command in Cloud Shell:

gcloud services list

You should see BigQuery listed:

NAME                      TITLE
bigquery.googleapis.com   BigQuery API
...

If the BigQuery API is not enabled, you can use the following command in Cloud Shell to enable it:

gcloud services enable bigquery.googleapis.com
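If you would rather check this from a script than by eye, the listing can be scanned for the service name. The sketch below is illustrative only: `is_service_enabled` is a hypothetical helper, and it assumes the listing is plain whitespace-separated text with the service name in the first column, as in the output shown above. It does not call gcloud itself.

```python
def is_service_enabled(listing: str, service: str = "bigquery.googleapis.com") -> bool:
    """Return True if `service` appears in the first column of the listing.

    Assumption: `listing` is the plain-text output of `gcloud services list`,
    one service per line, with the service name as the first field.
    """
    for line in listing.splitlines():
        fields = line.split()
        if fields and fields[0] == service:
            return True
    return False


# Sample text mirroring the output shown above (not fetched from gcloud).
sample_listing = """NAME                      TITLE
bigquery.googleapis.com   BigQuery API
"""

print(is_service_enabled(sample_listing))
```

Matching on the whole first field, rather than searching for a substring, avoids a false positive from a differently named service that merely contains the same text.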

4. Authenticate API requests

In order to make requests to the BigQuery API, you need to use a Service Account. A Service Account belongs to your project and is used by the Google Cloud Python client library to make BigQuery API requests. Like any other user account, a service account is represented by an email address. In this section, you will use the Cloud SDK to create a service account and then create the credentials you need to authenticate as the service account.

First, set a PROJECT_ID environment variable:

export PROJECT_ID=$(gcloud config get-value core/project)

Next, create a new service account to access the BigQuery API by using:

gcloud iam service-accounts create my-bigquery-sa \
  --display-name "my bigquery service account"

Next, create credentials that your Python code will use to log in as your new service account. Create these credentials and save them as the JSON file ~/key.json by using the following command:

gcloud iam service-accounts keys create ~/key.json \
  --iam-account my-bigquery-sa@${PROJECT_ID}.iam.gserviceaccount.com

Finally, set the GOOGLE_APPLICATION_CREDENTIALS environment variable, which the BigQuery Python client library (covered in the next step) uses to find your credentials. Set it to the path of the credentials JSON file you created, by using:

export GOOGLE_APPLICATION_CREDENTIALS=~/key.json

You can read more about authenticating the BigQuery API.
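Before pointing the client library at a key file, it can help to confirm that the variable is set and that the file looks like a service account key. The following is a minimal sketch using only the standard library. `summarize_key` and `load_key_summary` are hypothetical helpers, and they rely on the `type`, `project_id`, and `client_email` fields found in service account key files. The sketch neither contacts Google Cloud nor validates the key.

```python
import json
import os


def summarize_key(key: dict) -> dict:
    """Return a few non-secret fields from a parsed service account key."""
    if key.get("type") != "service_account":
        raise ValueError("not a service account key")
    # Deliberately skip private_key so it is never printed or logged.
    return {
        "project_id": key.get("project_id"),
        "client_email": key.get("client_email"),
    }


def load_key_summary(path: str) -> dict:
    """Read the key file at `path` (a leading ~ is expanded) and summarize it."""
    with open(os.path.expanduser(path), encoding="utf-8") as f:
        return summarize_key(json.load(f))


key_path = os.environ.get("GOOGLE_APPLICATION_CREDENTIALS")
if key_path and os.path.exists(os.path.expanduser(key_path)):
    try:
        print(load_key_summary(key_path))
    except ValueError as err:  # also covers malformed JSON
        print(f"Unexpected key file contents: {err}")
else:
    print("GOOGLE_APPLICATION_CREDENTIALS is not set or the file is missing")
```

The `client_email` printed here should match the service account address used in the commands above.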

5. Set up access control

BigQuery uses Identity and Access Management (IAM) to manage access to resources. BigQuery has a number of predefined roles (user, dataOwner, dataViewer, etc.) that you can assign to the service account you created in the previous step. You can read more about access control in the BigQuery docs.

Before you can query public datasets, you need to make sure the service account has at least the roles/bigquery.user role. In Cloud Shell, run the following command to assign the user role to the service account:

gcloud projects add-iam-policy-binding ${PROJECT_ID} \
  --member "serviceAccount:my-bigquery-sa@${PROJECT_ID}.iam.gserviceaccount.com" \
  --role "roles/bigquery.user"

You can run the following command to verify that the service account has the user role:

gcloud projects get-iam-policy $PROJECT_ID

You should see the following:

bindings:
- members:
  - serviceAccount:my-bigquery-sa@<PROJECT_ID>.iam.gserviceaccount.com
  role: roles/bigquery.user
...
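The same check can be done programmatically once the policy is available as a dictionary, for example by parsing the output of the command above run with gcloud's `--format=json` flag. The sketch below works on a hard-coded policy of the same shape. `service_account_member` and `has_role` are hypothetical helpers, and `my-project` is a placeholder project ID.

```python
def service_account_member(name: str, project_id: str) -> str:
    """Build the IAM member string for a service account in a project."""
    return f"serviceAccount:{name}@{project_id}.iam.gserviceaccount.com"


def has_role(policy: dict, member: str, role: str) -> bool:
    """Return True if `member` is bound to `role` in an IAM policy dict."""
    for binding in policy.get("bindings", []):
        if binding.get("role") == role and member in binding.get("members", []):
            return True
    return False


# Hypothetical policy shaped like the output above; "my-project" is a placeholder.
policy = {
    "bindings": [
        {
            "role": "roles/bigquery.user",
            "members": [
                "serviceAccount:my-bigquery-sa@my-project.iam.gserviceaccount.com"
            ],
        }
    ]
}

member = service_account_member("my-bigquery-sa", "my-project")
print(has_role(policy, member, "roles/bigquery.user"))
```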

6. Install the client library

Install the BigQuery Python client library:

pip3 install --user --upgrade google-cloud-bigquery

You're now ready to code with the BigQuery API!

7. Query the works of Shakespeare

A public dataset is any dataset that's stored in BigQuery and made available to the general public. There are many other public datasets available for you to query. While some datasets are hosted by Google, most are hosted by third parties. For more info see the Public Datasets page.

In addition to public datasets, BigQuery provides a limited number of sample tables that you can query. These tables are contained in the bigquery-public-data:samples dataset. The shakespeare table in the samples dataset contains a word index of the works of Shakespeare. It gives the number of times each word appears in each corpus.

In this step, you will query the shakespeare table.

First, in Cloud Shell create a simple Python application that you'll use to run the BigQuery API samples.

mkdir bigquery-demo
cd bigquery-demo
touch app.py

Open the code editor from the top right side of the Cloud Shell.

Navigate to the app.py file inside the bigquery-demo folder and replace the code with the following.

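from google.cloud import bigquery

# The client picks up the credentials referenced by GOOGLE_APPLICATION_CREDENTIALS.
client = bigquery.Client()

# Count the word entries for each work and keep the ten largest counts.
query = """
    SELECT corpus AS title, COUNT(word) AS unique_words
    FROM `bigquery-public-data.samples.shakespeare`
    GROUP BY title
    ORDER BY unique_words DESC
    LIMIT 10
"""
# client.query() starts the job; iterating over it waits for the rows.
results = client.query(query)

for row in results:
    title = row['title']
    unique_words = row['unique_words']
    print(f'{title:<20} | {unique_words}')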

Take a minute or two to study the code and see how the table is being queried.

Back in Cloud Shell, run the app:

python3 app.py

You should see a list of works and the number of unique words in each:

hamlet               | 5318
kinghenryv           | 5104
cymbeline            | 4875
troilusandcressida   | 4795
kinglear             | 4784
kingrichardiii       | 4713
2kinghenryvi         | 4683
coriolanus           | 4653
2kinghenryiv         | 4605
antonyandcleopatra   | 4582
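To make the query's logic concrete, here is a rough pure-Python equivalent of its GROUP BY, COUNT, ORDER BY ... DESC, and LIMIT steps. It runs on a handful of made-up rows, not on the real table, and assumes one row per (word, corpus) pair, in line with the table description above. `top_titles` is a hypothetical helper.

```python
from collections import Counter

# Hypothetical rows in the shape of the shakespeare table: one row per
# (word, corpus) pair. These values are made up for illustration.
rows = [
    {"word": "the", "corpus": "hamlet"},
    {"word": "king", "corpus": "hamlet"},
    {"word": "ghost", "corpus": "hamlet"},
    {"word": "the", "corpus": "kinglear"},
    {"word": "storm", "corpus": "kinglear"},
    {"word": "the", "corpus": "sonnets"},
]


def top_titles(rows, limit=10):
    """Mimic GROUP BY corpus, COUNT(word), ORDER BY count DESC, LIMIT n."""
    # COUNT(word) only counts non-NULL values, hence the None filter.
    counts = Counter(row["corpus"] for row in rows if row["word"] is not None)
    return counts.most_common(limit)


for title, unique_words in top_titles(rows):
    print(f"{title:<20} | {unique_words}")
```

BigQuery performs the same aggregation on the server side, so only the ten summary rows are returned to the client.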

8. Query the GitHub dataset

To get more familiar with BigQuery, you'll now issue a query against the GitHub public dataset. You will find the most common commit messages on GitHub. You'll also use BigQuery's web console to preview and run ad-hoc queries.

To see what the data looks like, open the GitHub dataset in the BigQuery web UI.

Click the Preview button to see what the data looks like.

Navigate to the app.py file inside the bigquery-demo folder and replace the code with the following.

from google.cloud import bigquery

client = bigquery.Client()

# Group commits by subject line and keep the ten most frequent subjects.
query = """
    SELECT subject AS subject, COUNT(*) AS num_duplicates
    FROM `bigquery-public-data.github_repos.commits`
    GROUP BY subject
    ORDER BY num_duplicates DESC
    LIMIT 10
"""
results = client.query(query)

for row in results:
    subject = row['subject']
    num_duplicates = row['num_duplicates']
    print(f'{subject:<20} | {num_duplicates:>9,}')

Take a minute or two to study the code and see how the table is being queried for the most common commit messages.

Back in Cloud Shell, run the app:

python3 app.py

You should see a list of commit messages and their occurrences:

Update README.md | 1,685,515

Initial commit | 1,577,543

update | 211,017

| 155,280

Create README.md | 153,711

Add files via upload | 152,354

initial commit | 145,224

first commit | 110,314

Update index.html | 91,893

Update README | 88,862
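The alignment of this output comes from the f-string format specifiers in the print call. The short sketch below isolates that formatting so it can be tried without running a query. `format_row` is a hypothetical helper, and the sample values are taken from the output above.

```python
def format_row(subject: str, num_duplicates: int, width: int = 20) -> str:
    """Format one result row the same way as the print call in app.py.

    `<20` left-aligns the subject and pads it to 20 characters (longer
    subjects are not truncated); `>9,` right-aligns the count in 9
    characters and inserts thousands separators.
    """
    return f"{subject:<{width}} | {num_duplicates:>9,}"


print(format_row("update", 211017))
print(format_row("Update README.md", 1685515))
```

Because padding never truncates, a subject longer than 20 characters pushes its count column to the right. Slicing the subject first (for example `subject[:20]`) is one way to keep the columns aligned.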

9. Caching and statistics

BigQuery caches the results of queries. As a result, subsequent queries take less time. It's possible to disable caching with query options. BigQuery also keeps track of statistics about queries, such as creation time, end time, and total bytes processed.

In this step, you will disable caching and also display statistics about the queries.

Navigate to the app.py file inside the bigquery-demo folder and replace the code with the following.

from google.cloud import bigquery

client = bigquery.Client()

query = """
    SELECT subject AS subject, COUNT(*) AS num_duplicates
    FROM `bigquery-public-data.github_repos.commits`
    GROUP BY subject
    ORDER BY num_duplicates DESC
    LIMIT 10
"""
# Disable the query cache so the query is actually executed each time.
job_config = bigquery.job.QueryJobConfig(use_query_cache=False)
# client.query() returns the query job, which also carries the statistics.
results = client.query(query, job_config=job_config)

for row in results:
    subject = row['subject']
    num_duplicates = row['num_duplicates']
    print(f'{subject:<20} | {num_duplicates:>9,}')

print('-'*60)
print(f'Created: {results.created}')
print(f'Ended: {results.ended}')
print(f'Bytes: {results.total_bytes_processed:,}')

There are a couple of things to note about the code. First, caching is disabled by introducing QueryJobConfig and setting use_query_cache to False. Second, you accessed the statistics about the query from the job object (the value returned by client.query, named results here).

Back in Cloud Shell, run the app:

python3 app.py

As before, you should see a list of commit messages and their occurrences. In addition, you should also see some statistics about the query at the end:

Update README.md | 1,685,515

Initial commit | 1,577,543

update | 211,017

| 155,280

Create README.md | 153,711

Add files via upload | 152,354

initial commit | 145,224

first commit | 110,314

Update index.html | 91,893

Update README | 88,862

------------------------------------------------------------

Created: 2020-04-03 13:30:08.801000+00:00

Ended: 2020-04-03 13:30:15.334000+00:00

Bytes: 2,868,251,894
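These raw statistics are easier to read once converted into an elapsed time and a data volume. The sketch below does that arithmetic with the values from the sample output above. `summarize_job` is a hypothetical helper, and reporting in GiB (2^30 bytes) is just one possible choice of unit.

```python
from datetime import datetime, timezone


def summarize_job(created: datetime, ended: datetime, total_bytes: int) -> dict:
    """Derive elapsed seconds and gibibytes processed from job statistics."""
    return {
        "elapsed_seconds": (ended - created).total_seconds(),
        "gib_processed": total_bytes / 2**30,
    }


# Values copied from the sample output above.
created = datetime(2020, 4, 3, 13, 30, 8, 801000, tzinfo=timezone.utc)
ended = datetime(2020, 4, 3, 13, 30, 15, 334000, tzinfo=timezone.utc)
summary = summarize_job(created, ended, 2_868_251_894)

print(f"Elapsed: {summary['elapsed_seconds']:.3f} s")      # Elapsed: 6.533 s
print(f"Processed: {summary['gib_processed']:.2f} GiB")    # Processed: 2.67 GiB
```

In app.py the same function could be called with `results.created`, `results.ended`, and `results.total_bytes_processed` once the rows have been read.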

10. Loading data into BigQuery

If you want to query your own data, you need to load your data into BigQuery. BigQuery supports loading data from many sources, including Cloud Storage, other Google services, and other readable sources. You can even stream your data using streaming inserts. For more info see the Loading data into BigQuery page.

In this step, you will load a JSON file stored on Cloud Storage into a BigQuery table. The JSON file is located at gs://cloud-samples-data/bigquery/us-states/us-states.json

If you're curious about the contents of the JSON file, you can use the gsutil command line tool to download it in Cloud Shell:

gsutil cp gs://cloud-samples-data/bigquery/us-states/us-states.json .

You can see that it contains the list of US states and that each state is a JSON document on a separate line:

head us-states.json

{"name": "Alabama", "post_abbr": "AL"}

{"name": "Alaska", "post_abbr": "AK"}

...
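This one-document-per-line layout is what makes the file newline-delimited JSON. As a small illustration, the sketch below parses the two lines shown above with the standard library. `parse_ndjson` is a hypothetical helper, and the sample text is embedded rather than read from the downloaded file.

```python
import json

# The first two lines of us-states.json, as shown above.
sample = '''{"name": "Alabama", "post_abbr": "AL"}
{"name": "Alaska", "post_abbr": "AK"}
'''


def parse_ndjson(text: str) -> list:
    """Parse newline-delimited JSON: one JSON document per non-empty line."""
    return [json.loads(line) for line in text.splitlines() if line.strip()]


states = parse_ndjson(sample)
for state in states:
    print(f"{state['post_abbr']}: {state['name']}")
```

Note that the file as a whole is not a single valid JSON document, so calling `json.loads` on the entire text would fail. Each line has to be parsed on its own.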

To load this JSON file into BigQuery, navigate to the app.py file inside the bigquery-demo folder and replace the code with the following.

from google.cloud import bigquery

client = bigquery.Client()

gcs_uri = 'gs://cloud-samples-data/bigquery/us-states/us-states.json'

# Create the dataset and a reference to the table that the load job will create.
dataset = client.create_dataset('us_states_dataset')
table = dataset.table('us_states_table')

job_config = bigquery.job.LoadJobConfig()
job_config.schema = [
    bigquery.SchemaField('name', 'STRING'),
    bigquery.SchemaField('post_abbr', 'STRING'),
]
job_config.source_format = bigquery.SourceFormat.NEWLINE_DELIMITED_JSON

load_job = client.load_table_from_uri(gcs_uri, table, job_config=job_config)
load_job.result()  # Wait for the load job to finish before reporting success.

print('JSON file loaded to BigQuery')

Take a minute or two to study how the code loads the JSON file and creates a table with a schema under a dataset.

Back in Cloud Shell, run the app:

python3 app.py

A dataset and a table are created in BigQuery.

To verify that the dataset was created, go to the BigQuery console. You should see a new dataset and table. Switch to the Preview tab of the table to see your data.

11. Congratulations!

You learned how to use BigQuery with Python!

Clean up

To avoid incurring charges to your Google Cloud account for the resources used in this tutorial:

- In the Cloud Console, go to the Manage resources page.

- In the project list, select your project and then click Delete.

- In the dialog, type the project ID and then click Shut down to delete the project.

Learn more

- Google BigQuery: https://cloud.google.com/bigquery/docs/

- Python on Google Cloud: https://cloud.google.com/python/

- Cloud Client Libraries for Python: https://googleapis.github.io/google-cloud-python/

License

This work is licensed under a Creative Commons Attribution 2.0 Generic License.