1. Introduction

There are many reasons to run surveys: assess customer satisfaction, run market research, improve a product or service, or appraise employee engagement. However, if you have tried to work with survey data before you probably know that the standard format is difficult to work with. In this guide, we build an automated pipeline that captures Google Forms results, prepares the data for analysis with Cloud Dataprep, loads it into BigQuery and allows your team to perform visual analytics using tools like Looker or Data Studio.

What you'll build

In this codelab, you will use Dataprep to transform responses from our example Google Forms survey into a format that is useful for data analytics. You will push the transformed data into BigQuery where you can ask deeper questions with SQL and join it onto other datasets for more powerful analyses. At the end, you can explore pre-built dashboards, or connect your own business intelligence tool to BigQuery to create new reports.

What you'll learn

- How to transform survey data using Dataprep

- How to push survey data into BigQuery

- How to get more insight from survey data

What you'll need

- A Google Cloud project with billing, BigQuery and Dataprep enabled

- A basic knowledge of Dataprep is helpful, but not required

- A basic knowledge of BigQuery and SQL is helpful, but not required

2. Manage Google Forms Responses

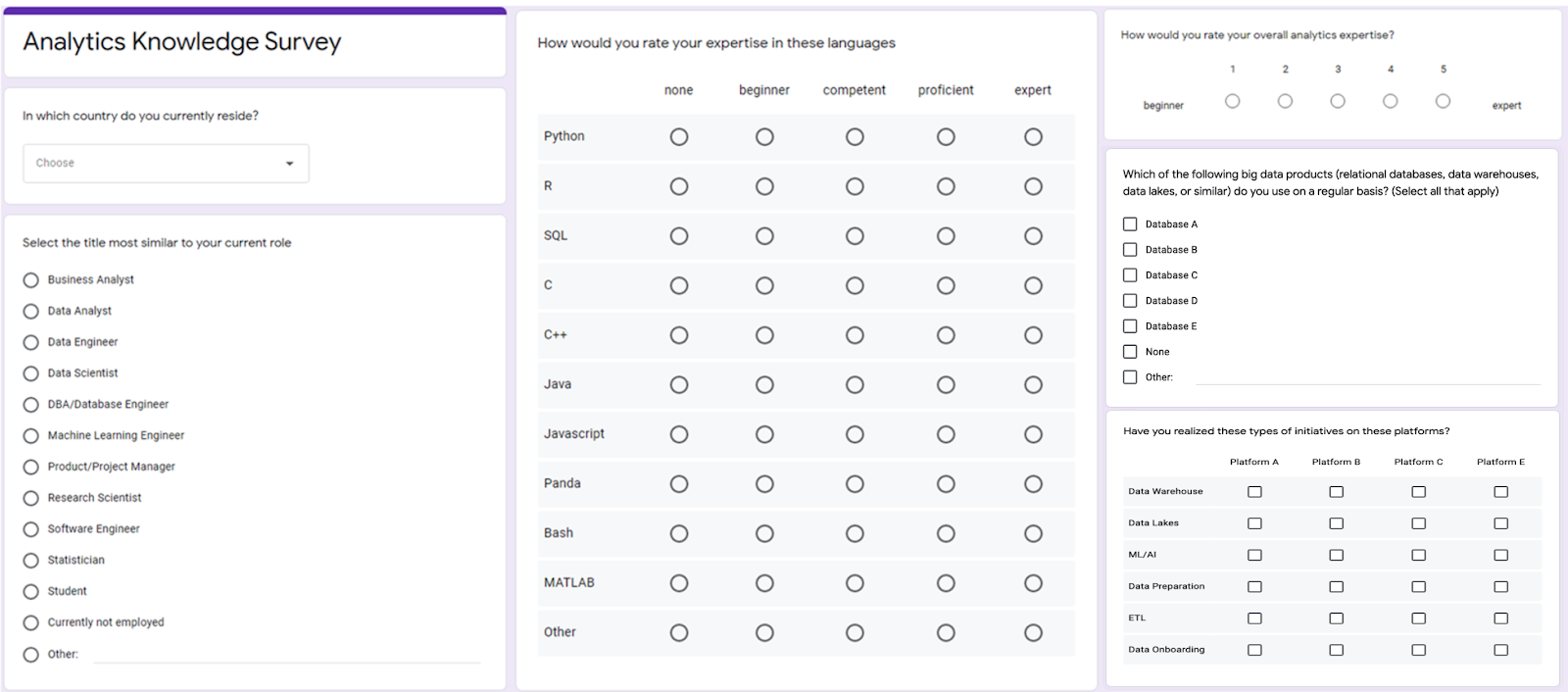

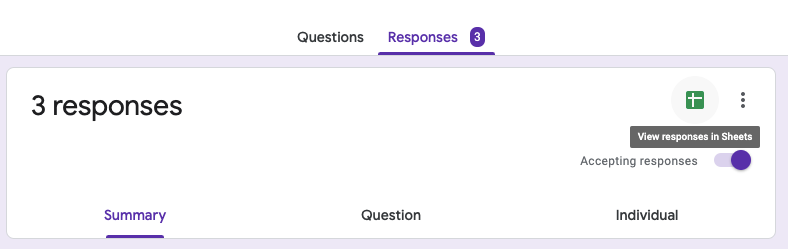

We'll start by taking a closer look at the Google Forms responses to our example survey.

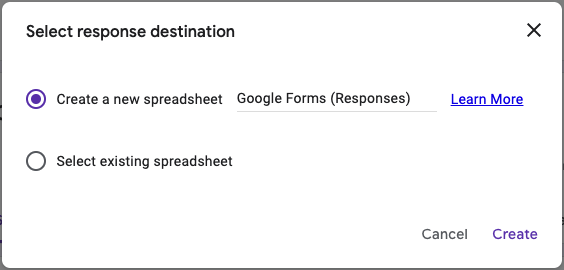

Survey results can be exported from the "responses" tab by clicking the Google Sheets icon and creating a new spreadsheet or loading the results into an existing one. Google Forms will continue to add responses to the spreadsheet as responders submit their replies until you deselect the "Accepting responses" button.

Let's now review each response type and how it translates in the Google Sheets file.

3. Transform Survey Responses

Survey questions can be grouped into four families that will have a particular export format. Based on the type of question, you will need to restructure the data in a certain way. Here, we review each of the groups and the types of transformations that we need to apply.

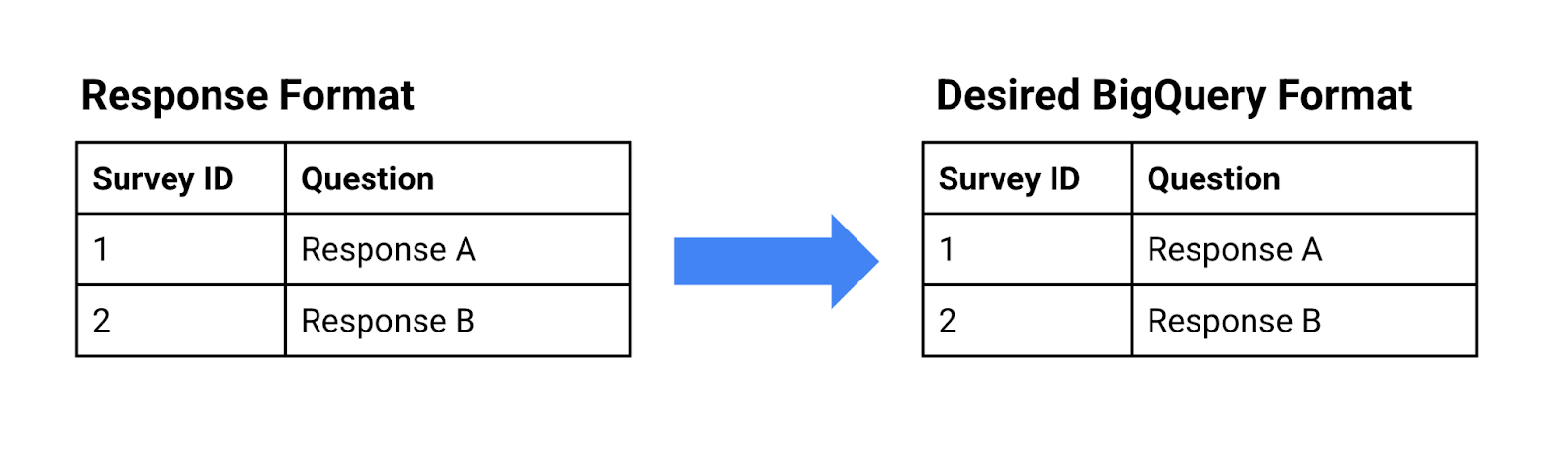

Single Choice Questions: short answer, paragraph, dropdown, linear scale, etc.

- Question name: column name

- Response: cell value

- Transformation requirements: no transformation is needed; the response is loaded as-is.

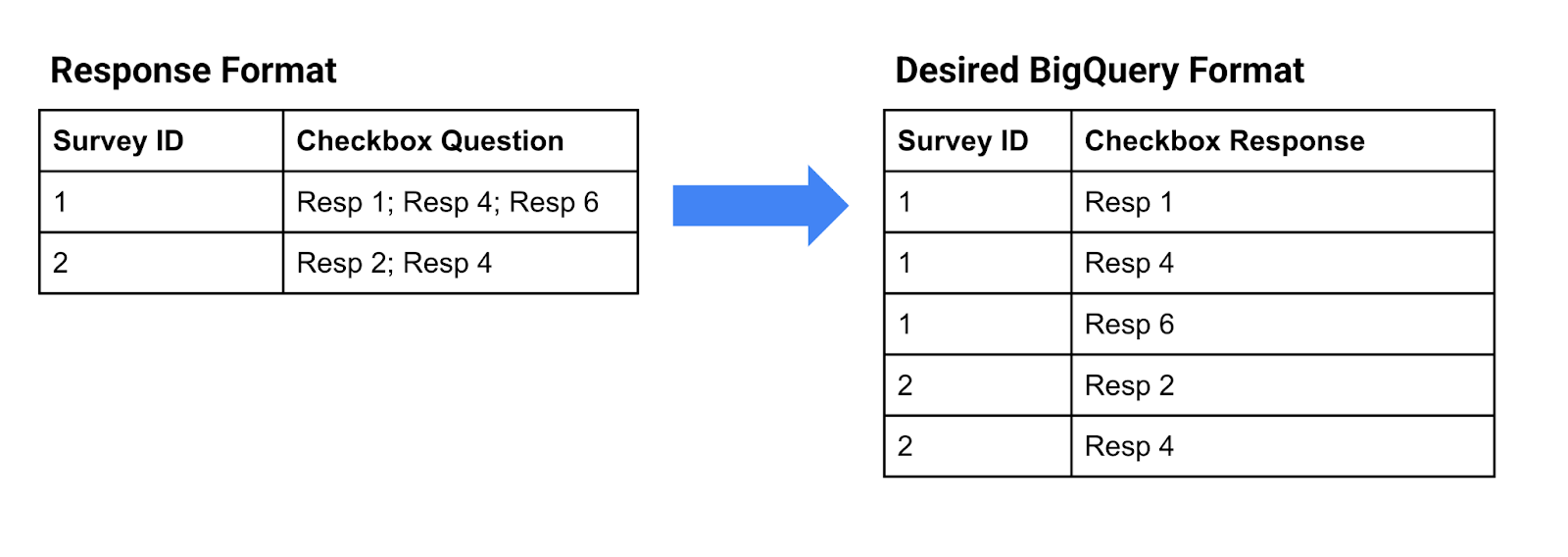

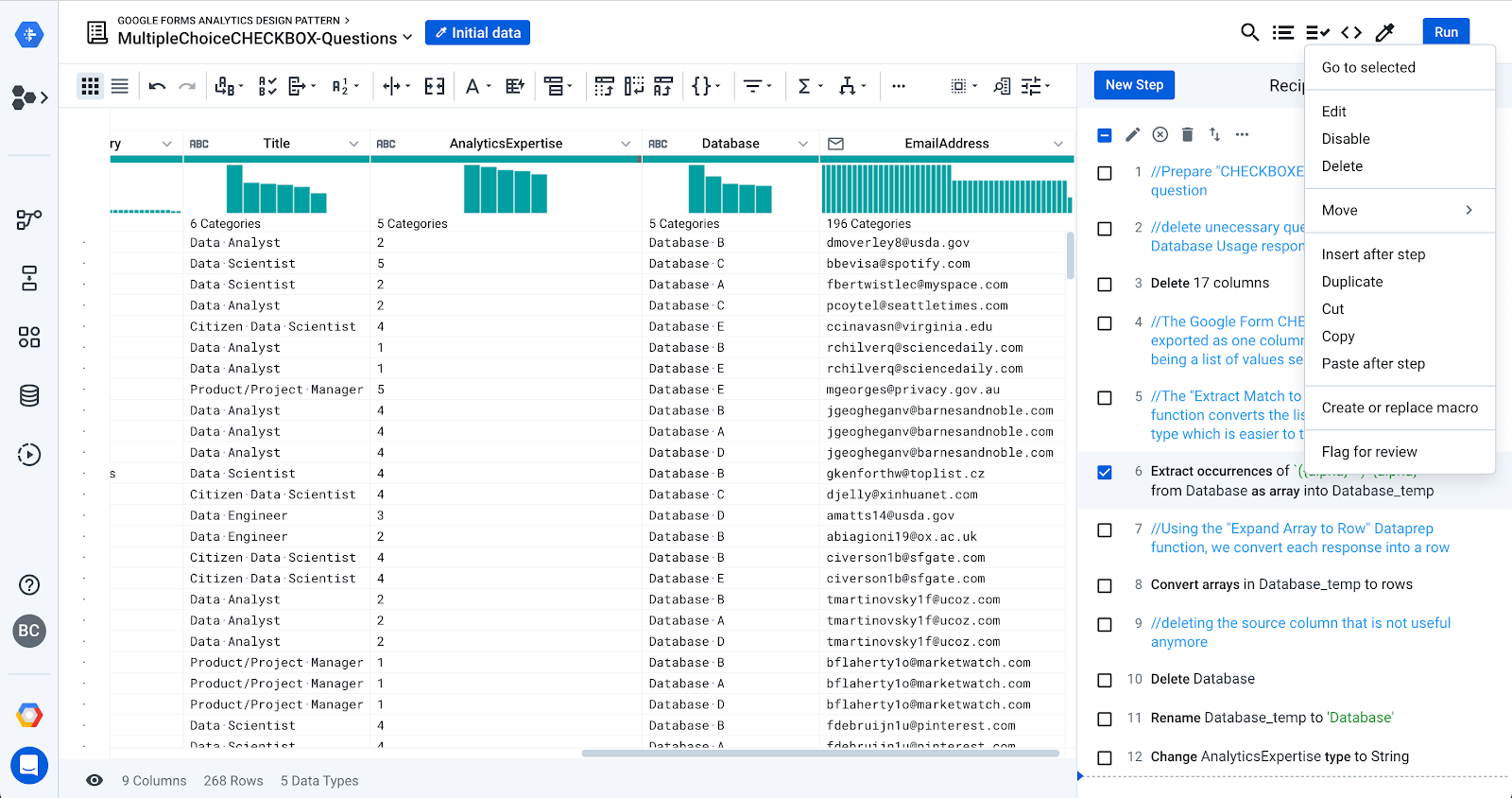

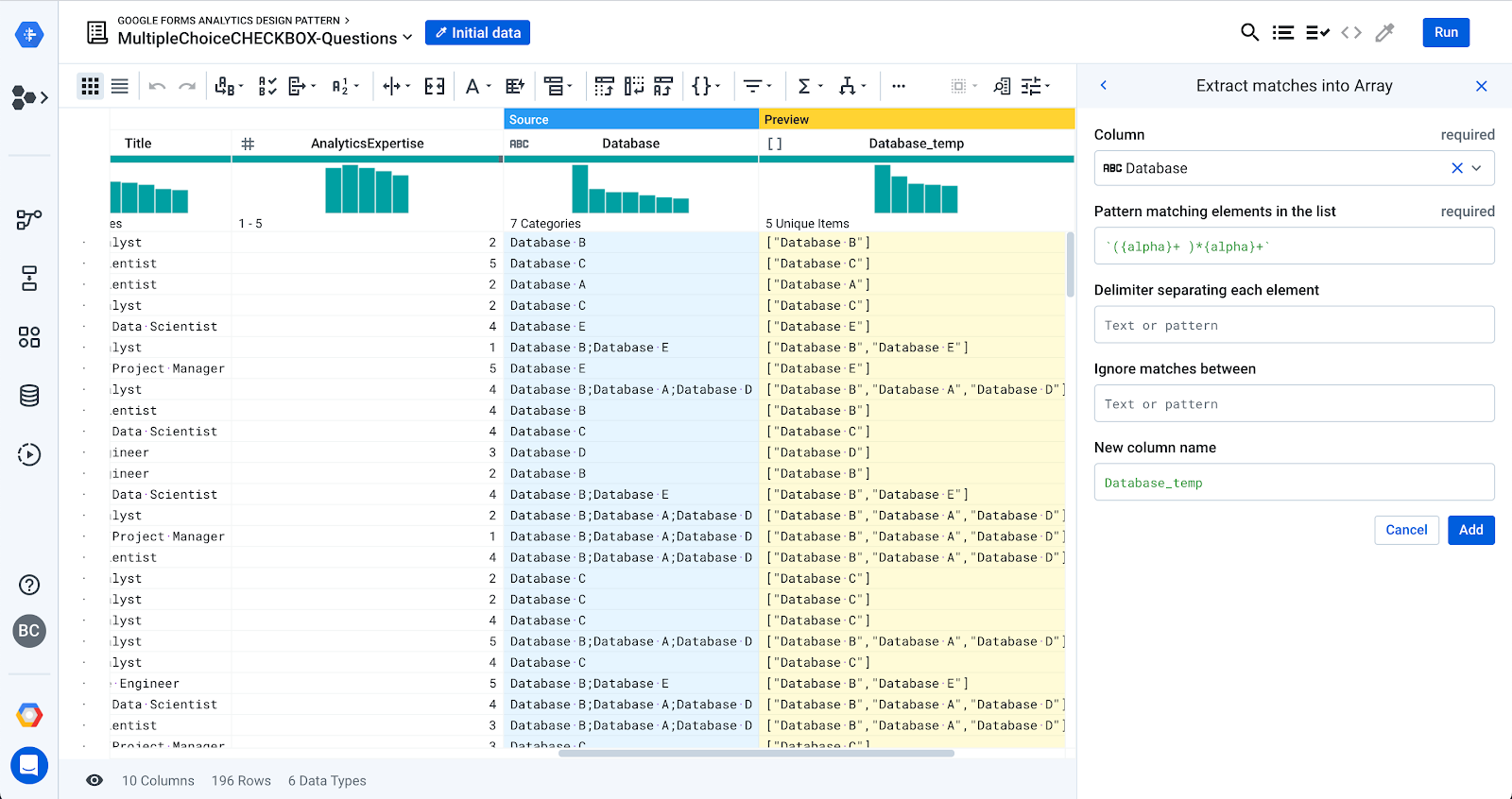

Multiple Choices Questions: multiple choices, checkbox

- Question name: column name

- Response: list of value with semicolon separator (e.g. "Resp 1; Resp 4; Resp 6")

- Transformation requirements: the list of values needs to be extracted and pivoted, so each response becomes a new row.

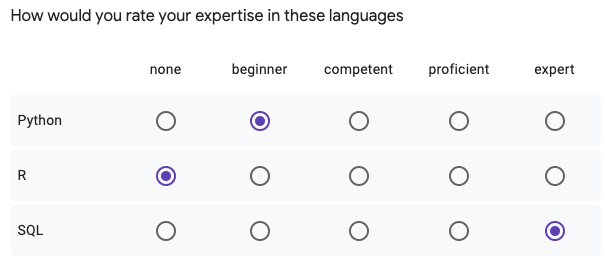

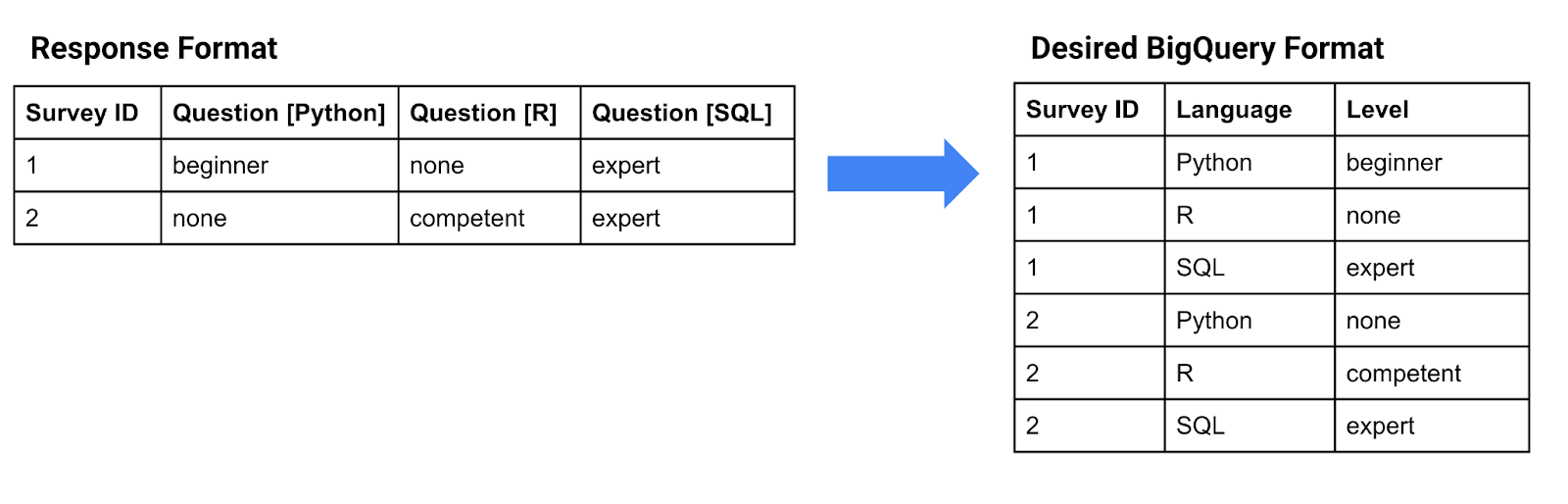

Multiple Choices Grid Questions

Here is an example of a multiple choices question. One has to select one single value from each row.

- Question name: each individual question becomes a column name with this format "Question [Option]".

- Response: each individual response in the grid becomes a column with a unique value.

- Transformation requirements: each question/answer must become a new row in the table and broken into two columns. One column mentioning the question option and the other column with the response.

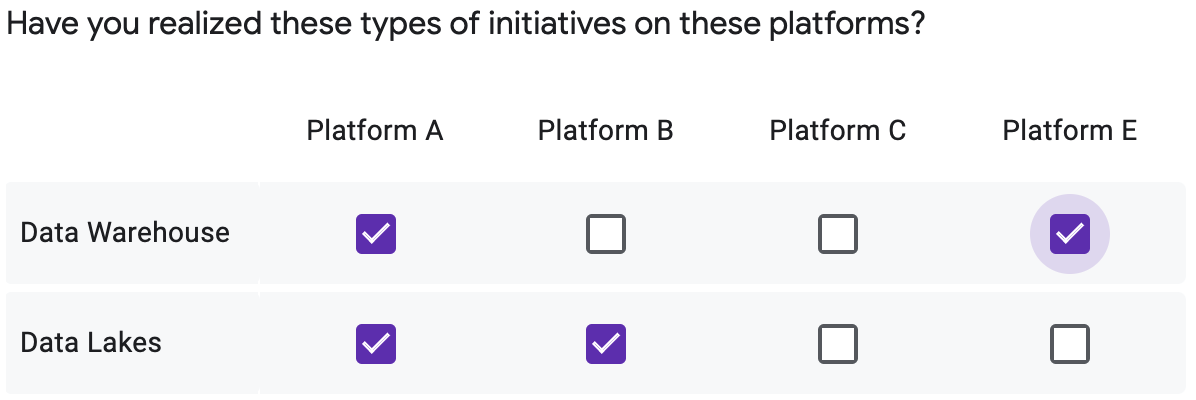

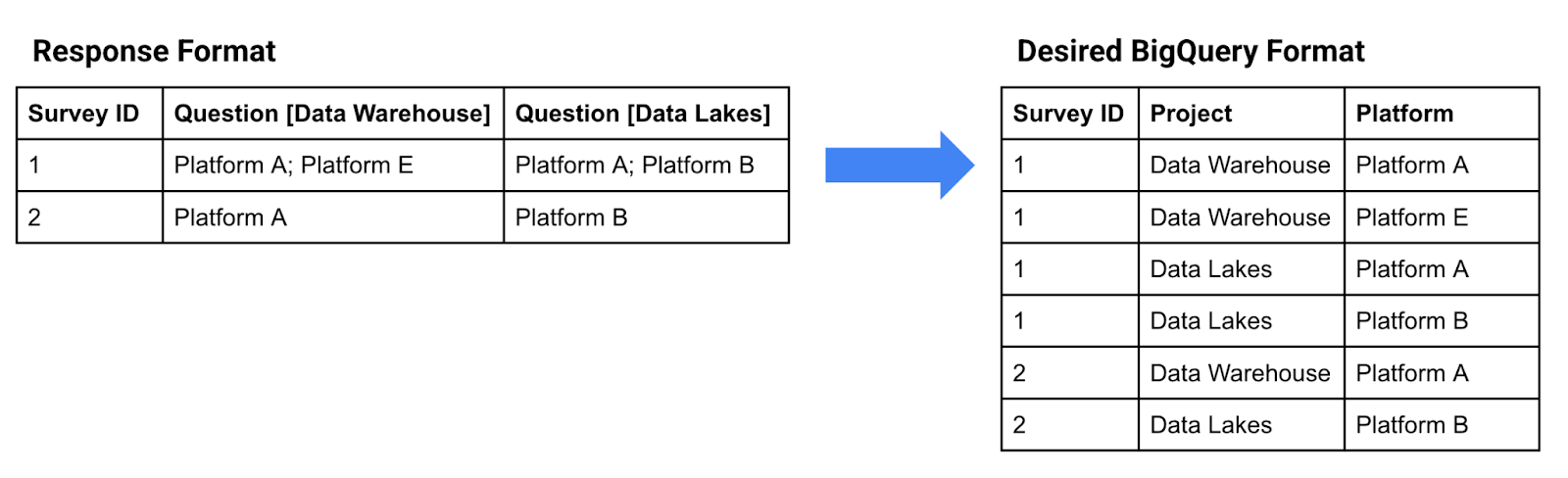

Multiple Choices Checkbox Grid Questions

Here is an example of a checkbox grid. One can select none to multiple values from each row.

- Question name: each individual question becomes a column name with this format "Question [Option]"..

- Response: each individual response in the grid becomes a column with a list of values semi-colon separated.

- Transformation requirements: these question types combine the "checkbox" and the "Multiple choices grid" categories and must be resolved in this order.

First, each response's list of values needs to be extracted and pivoted, so each answer becomes a new row for the particular question.

Second: each individual response must become a new row in the table and broken into two columns. One column mentioning the question option and the other column with the answer.

Next, we'll show how these transformations are handled with Cloud Dataprep.

4. Build the Cloud Dataprep Flow

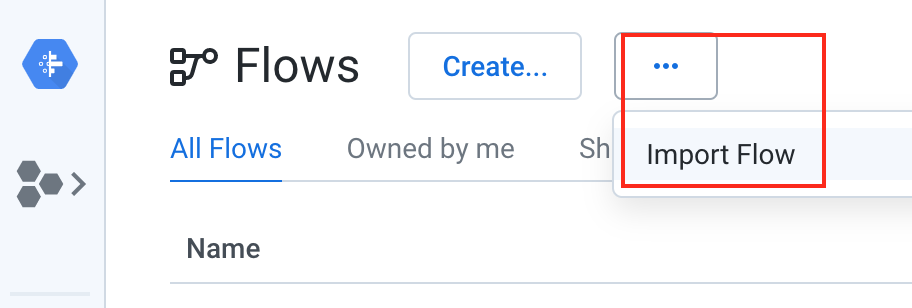

Import the "Google Forms Analytics Design Pattern" in Cloud Dataprep

Download the Google Forms Analytics Design Pattern flow package (without unzipping it). In the Cloud Dataprep application, click the Flows icon in the left navigation bar. Then In the Flows page, select Import from the context menu.

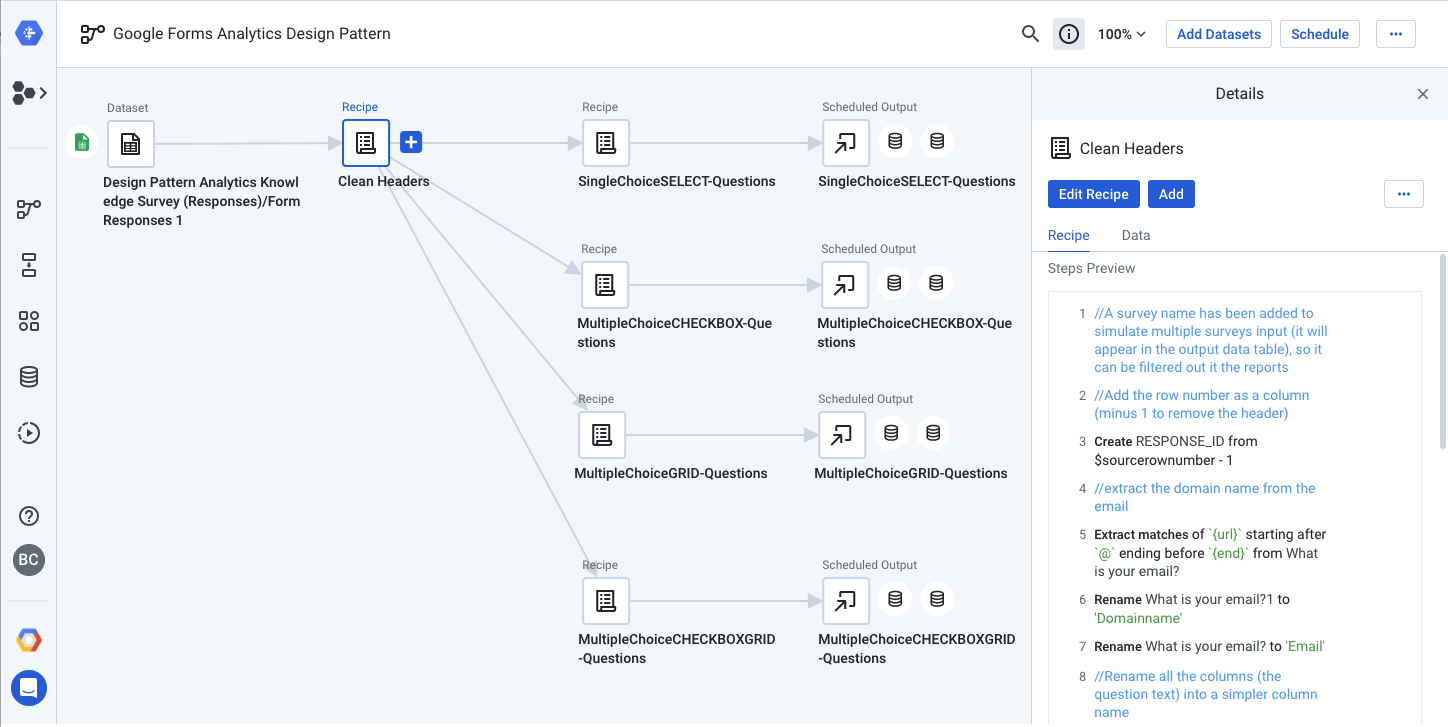

After you have imported the flow, select the imported flow to edit it, your screen should look like this:

Connect Google Sheets Survey Results Spreadsheet

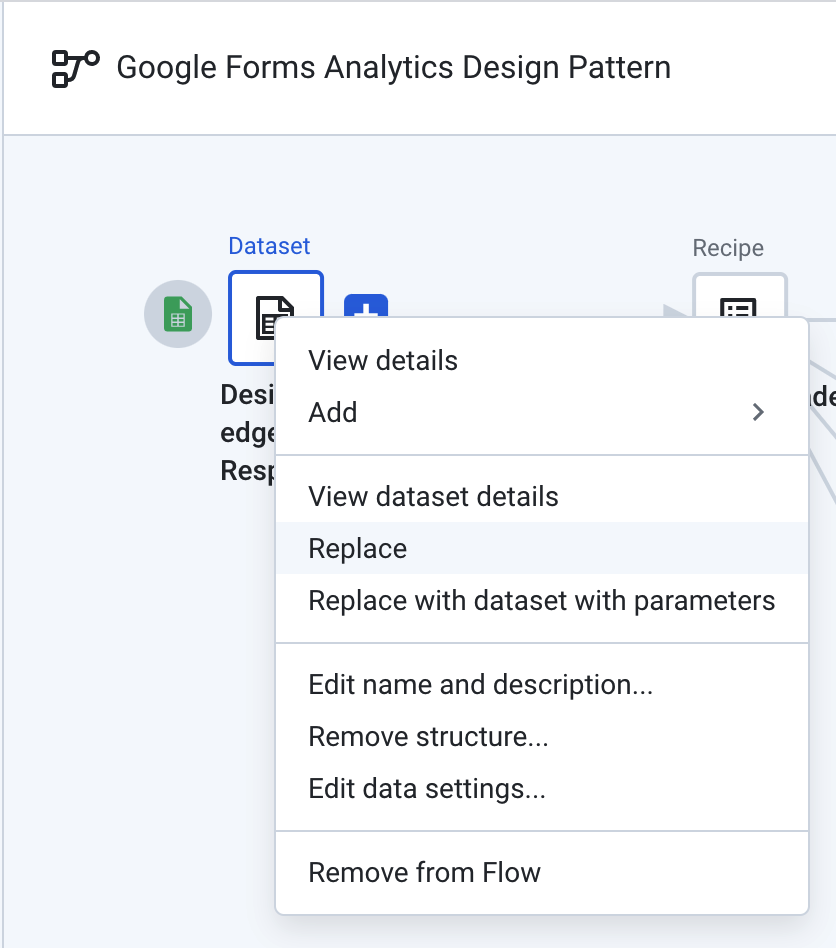

On the left side of the flow, the data source must be reconnected to a Google Sheets containing the Google Forms results. Right click on the Google Sheet datasets object and select "Replace".

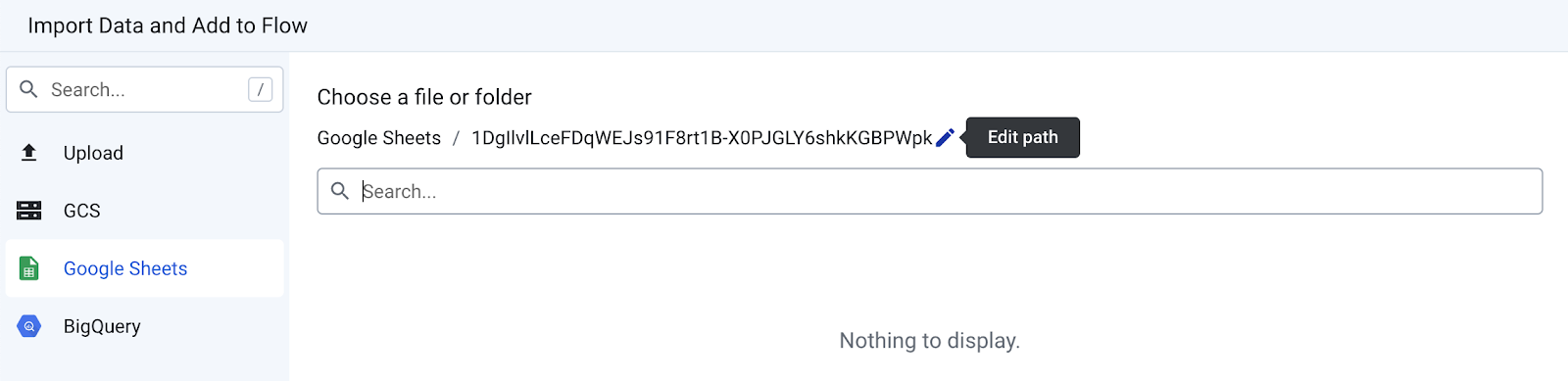

Then click on the "Import Datasets" link at the bottom of the modal. Click the "Edit path" pencil.

From there, replace the current value with this link that is pointing to a Google Sheets with some Google Forms results, you can use our example or your own copy: https://docs.google.com/spreadsheets/d/1DgIlvlLceFDqWEJs91F8rt1B-X0PJGLY6shkKGBPWpk/edit?usp=sharing

Click "Go" and then "Import & Add to Flow" at the bottom right. When you are back in the modal, click the "Replace" button at the bottom right.

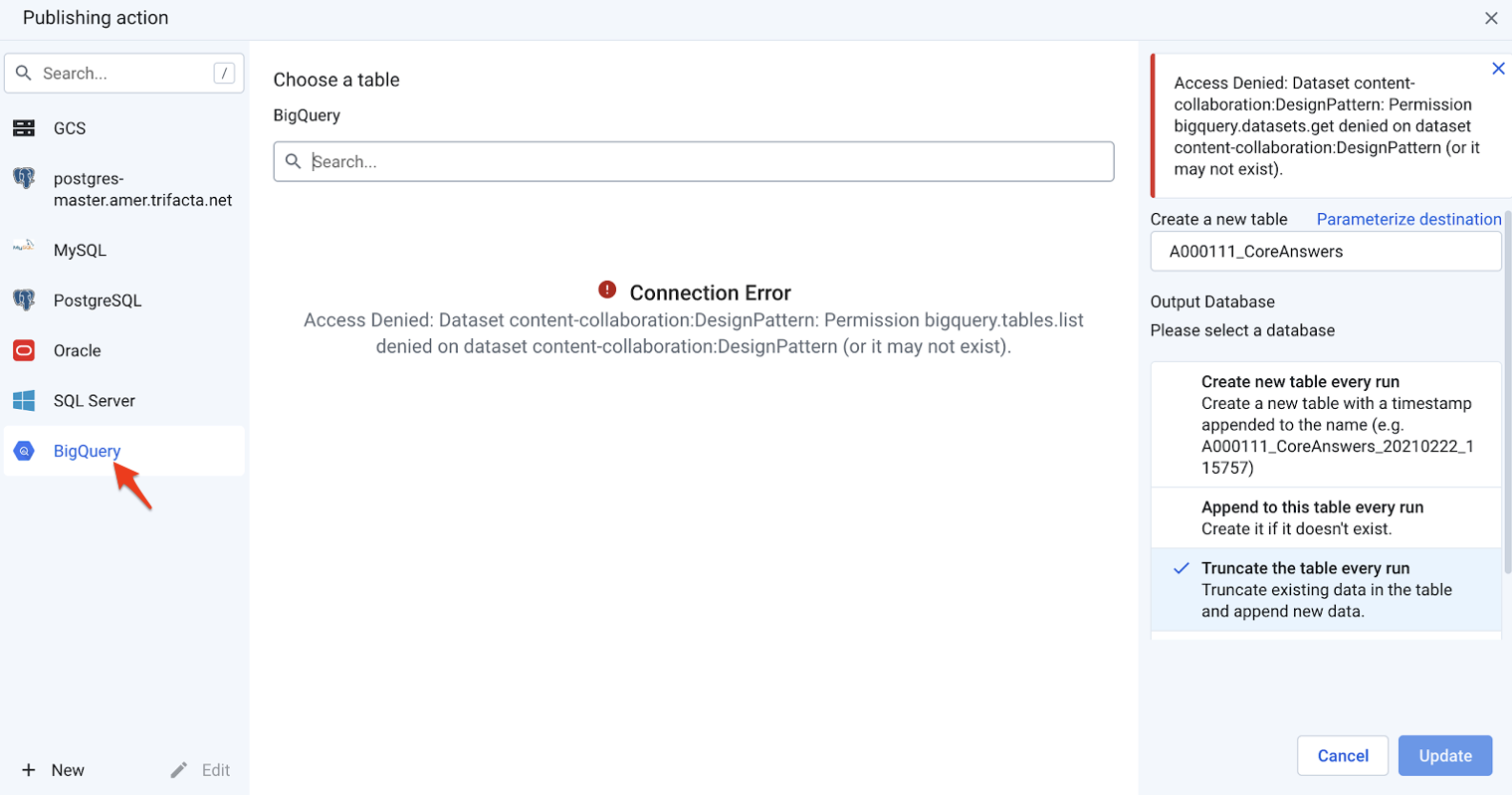

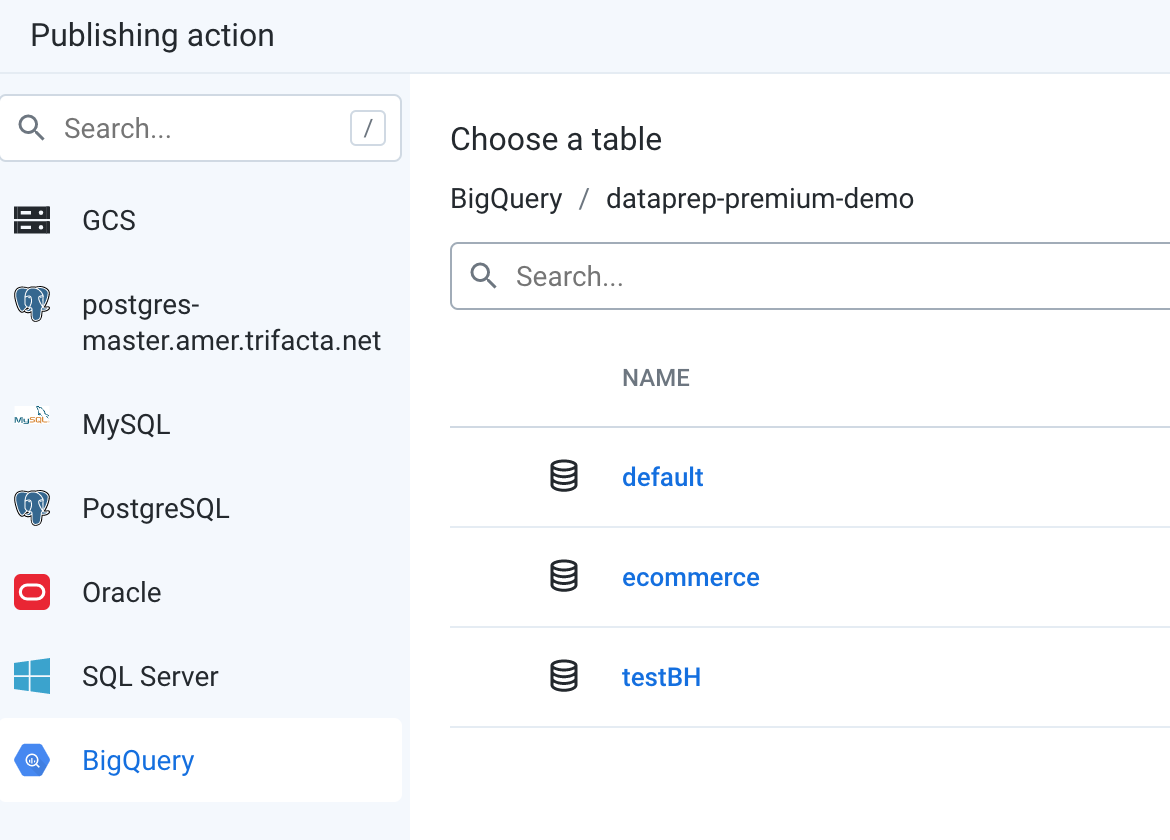

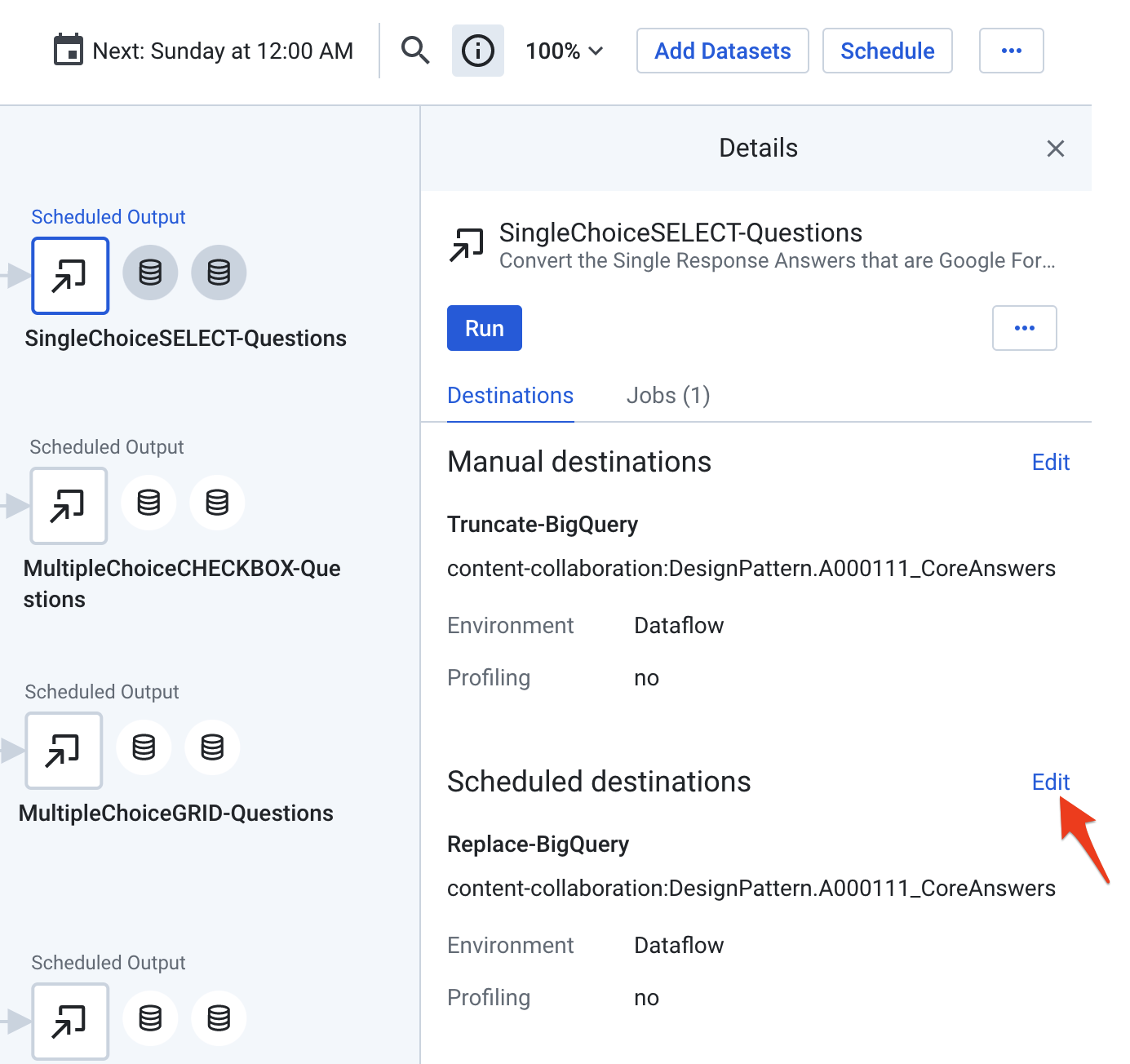

Connect BigQuery Tables

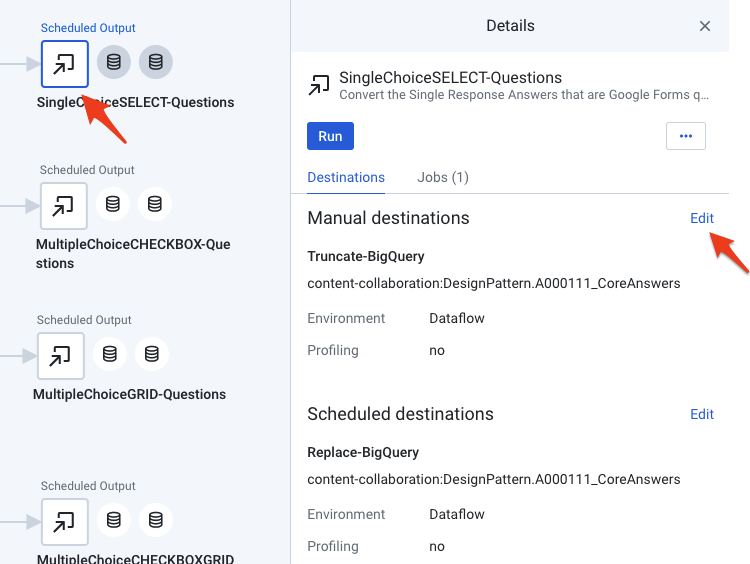

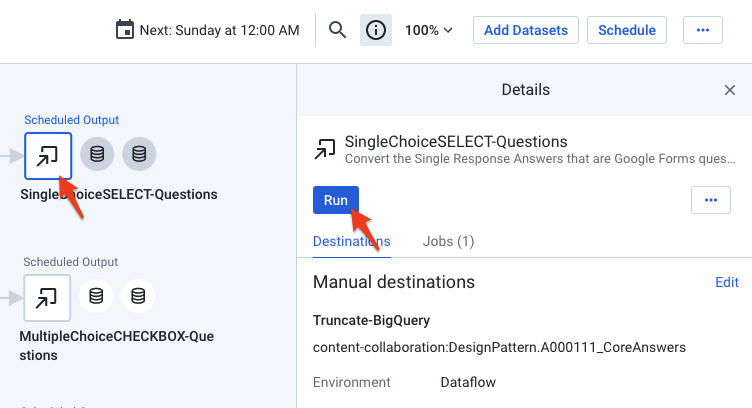

On the right side of the flow, you need to connect the outputs to your own BigQuery instance. For each of the outputs, click the icon and then edit its properties as follows.

First, start by editing the "Manual destinations"

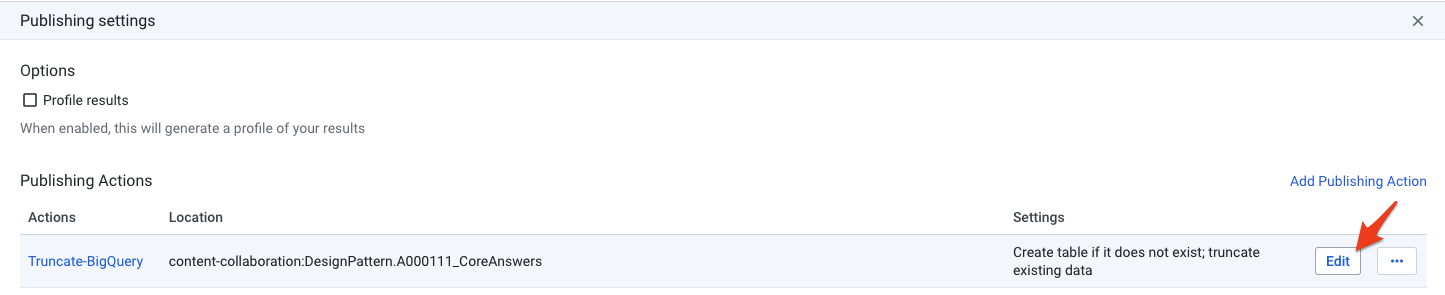

In the following "Publishing Settings" screen, click the edit button

When you see the "Publishing Action" screen, you need to change the connection settings by clicking the BigQuery connection and editing its properties.

Select the BigQuery dataset where you want the Google Forms results to be loaded into. You can select "default" if you haven't created any BigQuery dataset yet.

After you have edited the "Manual destinations", proceed the same way for the "Scheduled destinations" output.

Iterate on each output following the same steps. In total you have to edit 8 destinations.

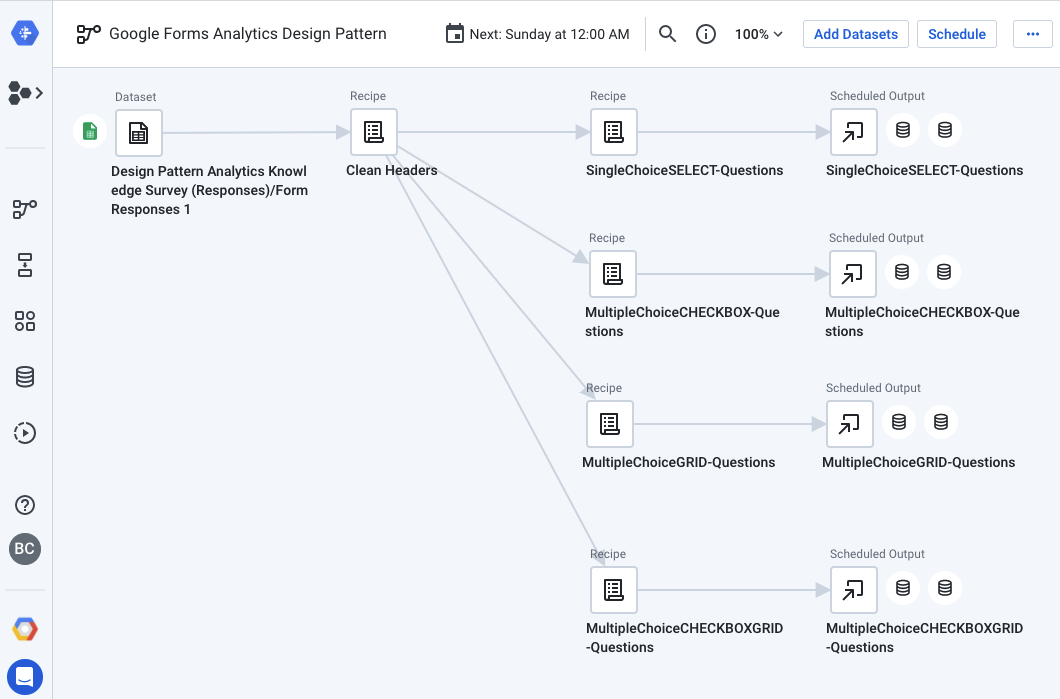

5. Cloud Dataprep Flow Explained

The basic idea of the "Google Forms Analytics Design Pattern" flow is to perform the transformations on the survey responses as previously described - by breaking down each question category into a specific Cloud Dataprep data transformation recipe.

This flow breaks the questions into 4 tables (corresponding to the 4 question categories, for simplicity purposes)

We suggest that you explore each of the recipes one by one starting with "Clean Headers" and then "SingleChoiceSELECT-Questions" followed by each other recipes underneath.

All the recipes are commented to explain the various transformation steps. When in a recipe, you can edit a step and preview the before/after state of a particular column.

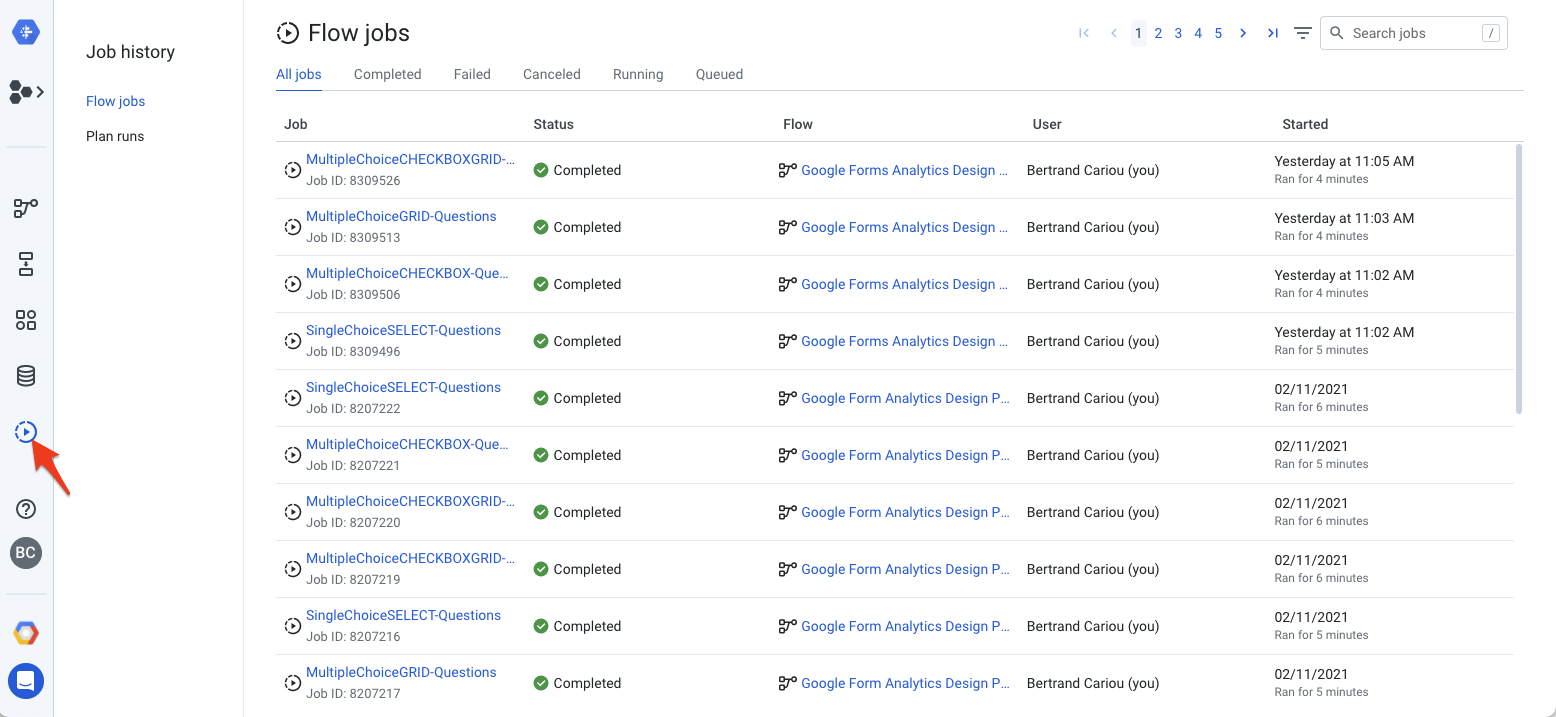

6. Run the Cloud Dataprep Flow

Now that your source and destinations are properly configured, you can run the flow to transform and load the responses into BigQuery. Select each of the outputs and click the "Run" button. If the specified BigQuery table exists, Dataprep will append new rows, otherwise it will create a new table.

Click the "job history" icon on the left pan to monitor the jobs. It should take a few minutes to proceed and load the BigQuery tables.

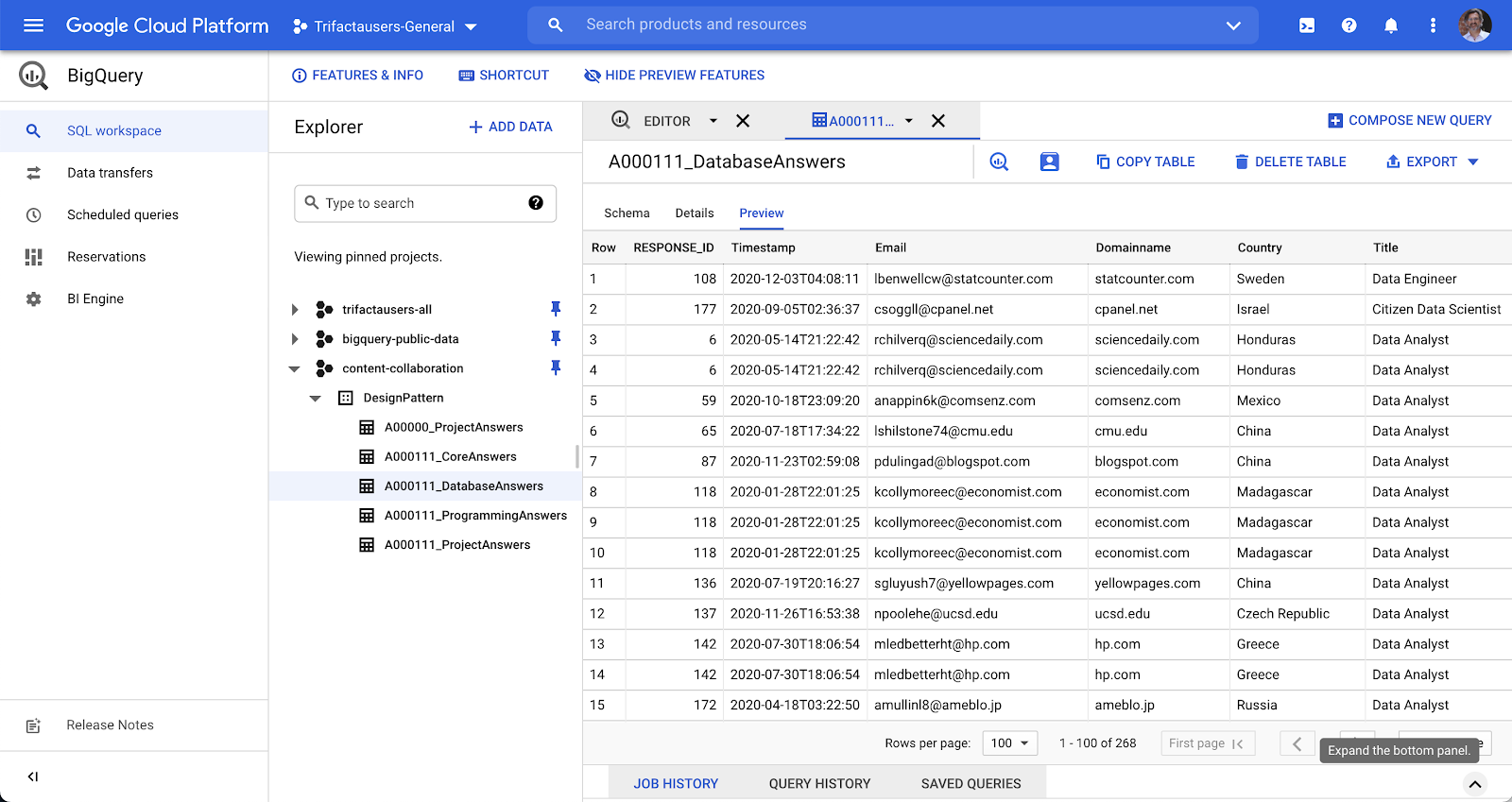

When all jobs are completed, the survey results will be loaded in BigQuery in a clean, structured, and normalized format ready for analysis.

7. Analyze the Survey Data in BigQuery

In the Google Console for BigQuery, you should be able to see the details of each of the new tables

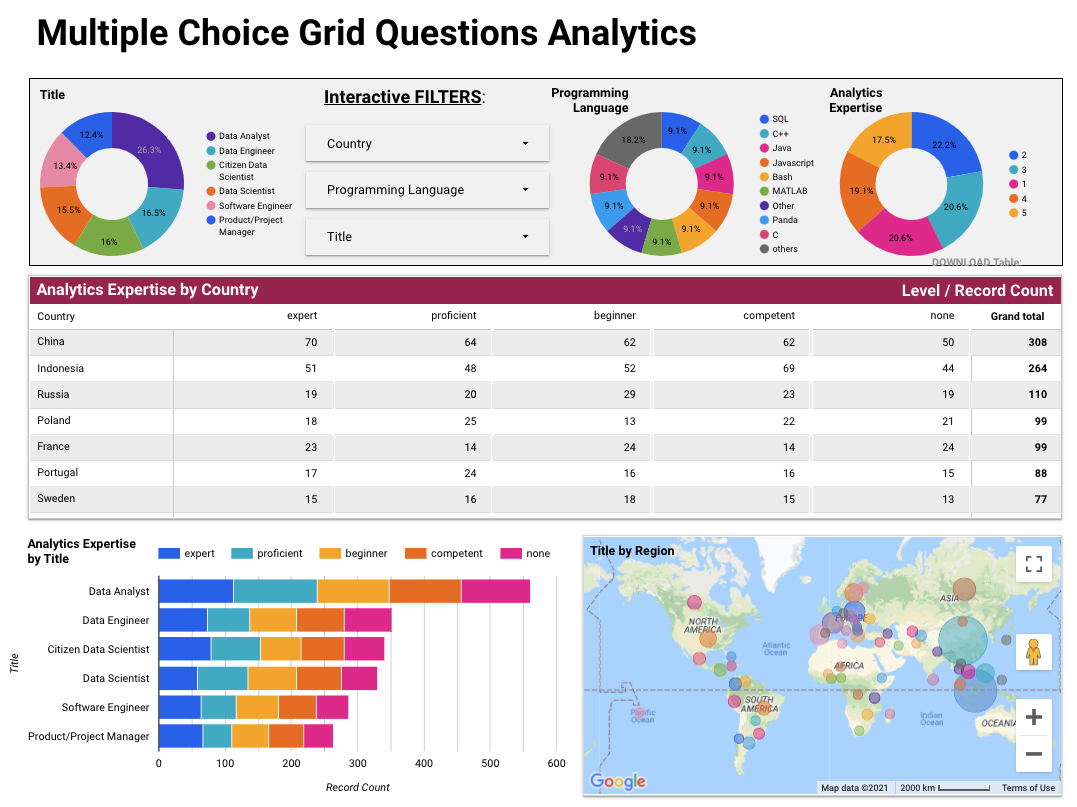

With the survey data in BigQuery, you can easily ask more comprehensive questions to understand the survey responses on a deeper level. For example, let's say you are trying to understand what programming language is most commonly used by people of different professional titles - you can write a query like this:

SELECT

programming_answers.Language AS programming_answers_language,

project_answers.Title AS project_answers_title,

AVG((case when programming_answers.Level='None' then 0

when programming_answers.Level='beginner' then 1

when programming_answers.Level='competent' then 2

when programming_answers.Level='proficient' then 3

when programming_answers.Level='expert' then 4

else null end) ) AS programming_answers_average_level_value

FROM `my-project.DesignPattern.A000111_ProjectAnswers` AS project_answers

INNER JOIN `my-project.A000111_ProgrammingAnswers` AS programming_answers

ON programming_answers.RESPONSE_ID = project_answers.RESPONSE_ID

GROUP BY 1,2

ORDER BY 3 DESC

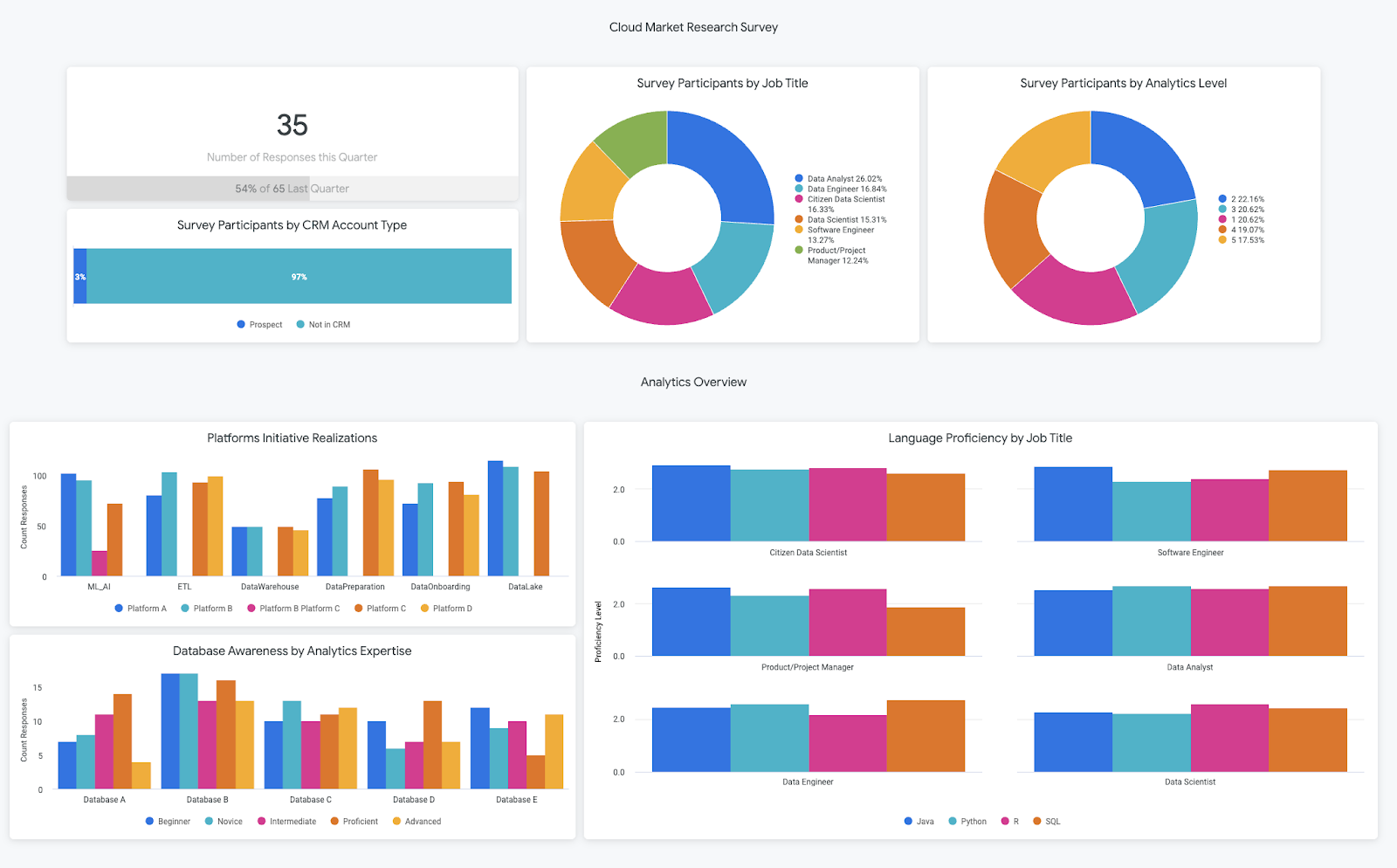

To make your analyses even more powerful, you can join the survey responses onto CRM data to see if participants map to any accounts that are already included in your data warehouse. This can help your business make more informed decisions on customer support or targeting users for new launches.

Here, we show how you can join the survey data onto an account table based on the responder's domain and the account website. Now, you can see the distribution of responses by account type, which helps you understand how many responders belong to existing customer accounts.

SELECT

account.TYPE AS account_type,

COUNT(DISTINCT project_answers.Domainname) AS project_answers_count_domains

FROM `my-project.A000111_ProjectAnswers` AS project_answers

LEFT JOIN `my-project.testing.account` AS account

ON project_answers.Domainname=account.website

GROUP BY 1

8. Perform Visual Analytics

Now that your survey data is centralized in a data warehouse, you can easily analyze the data in a business intelligence tool. We have created some example reports in Data Studio and in Looker.

Looker

If you already have a Looker instance you can use the LookML in this folder to get started analyzing the sample survey and CRM data for this pattern. Simply create a new Looker project, add the LookML, and replace the connection and table names in the file to match your BigQuery configuration. If you don't have a Looker instance but are interested in learning more, you can schedule a demo here.

Data Studio

Alternatively, to create a report in Data Studio, click the frame with the Google cross "Blank Report" and connect to BigQuery. Follow all the instructions from Data Studio. If you want to know more, a quick start and an introduction to the Data Studio's main features can be found here. You can also find our pre-built data studio dashboards here.

9. Cleaning Up

The easiest way to eliminate billing is to delete the Cloud project you created for the tutorial. Alternatively, you can delete the individual resources.

- In the Cloud Console, go to manage resources

- In the project list, select the project that you want to delete, and then click Delete.

- In the dialog, type the project ID, and then click Shut down to delete the project.