1. Introduction

Generative AI models are powerful reasoners, but they lack institutional context. If an executive asks an AI agent, "What is our Q1 revenue?", the agent might find dozens of tables named "revenue" across your data lake. Some are rigorous financial reports, others are real-time marketing estimates, and many are likely deprecated sandboxes.

Without explicit grounding, an AI agent will select a table based on simple name similarity, leading to "convincingly wrong" answers derived from unverified data.

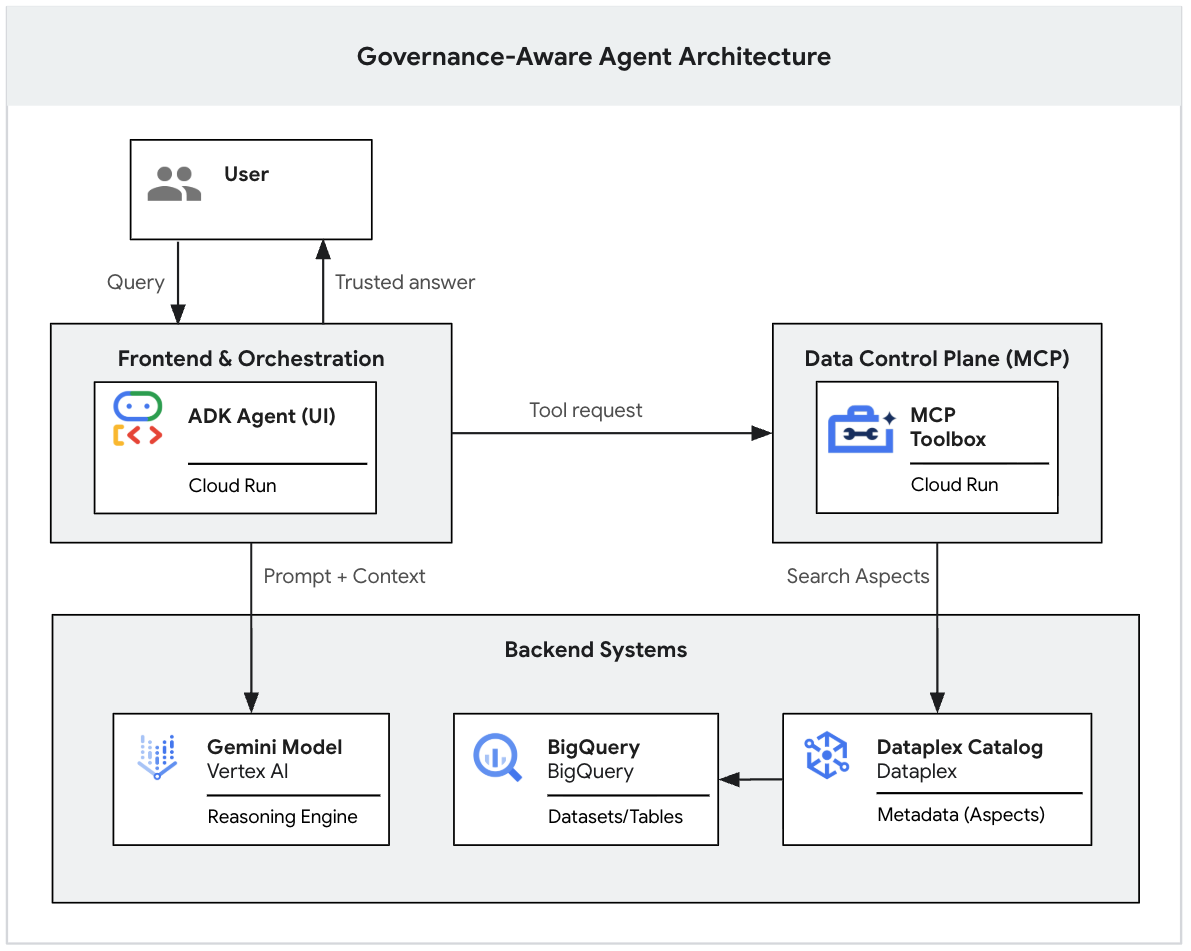

This codelab is part of a two-part series exploring how to build a Governance-Aware GenAI Agent.

In this first part, you will build the data foundation. You will set up a realistic, "messy" data lake in BigQuery, apply rigid metadata tags (Dataplex Aspects) to differentiate valid data from noise, and use the Gemini CLI to test locally whether the LLM strictly follows your governance rules.

(You can read the second part of this series, which covers how to deploy this local prototype into a secure, enterprise-grade web application using the Model Context Protocol (MCP) and Cloud Run. 👉 Read Part 2)

Prerequisites

- A Google Cloud project with billing enabled.

- Basic familiarity with BigQuery, Dataplex Universal Catalog, and Terraform.

- Access to Google Cloud Shell.

What you'll learn

- Deploy a realistic, multi-tiered data lake using Terraform.

- Design strict metadata templates (Aspect Types) in Dataplex to distinguish official data products from raw sandbox tables.

- Verify governance rules locally using the Gemini CLI before writing any application code.

What you'll need

- Access to Google Cloud Shell.

- Terraform (pre-installed in Cloud Shell).

- Gemini CLI (pre-installed in Cloud Shell).

Key concepts

- Dataplex Universal Catalog: The unified metadata management service. We use it to enrich technical metadata (schemas) with business context (governance).

- Aspect Type: A structured metadata template. Unlike free-text tags, Aspects enforce strong typing (enums, booleans), making them reliable for machines to evaluate.

2. Setup and requirements

Start Cloud Shell

While Google Cloud can be operated remotely from your laptop, in this codelab you will be using Google Cloud Shell, a command line environment running in the Cloud.

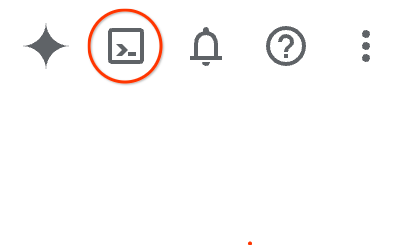

From the Google Cloud Console, click the Cloud Shell icon on the top right toolbar:

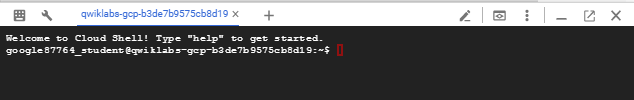

It should only take a few moments to provision and connect to the environment. When it is finished, you should see something like this:

This virtual machine is loaded with all the development tools you'll need. It offers a persistent 5GB home directory, and runs on Google Cloud, greatly enhancing network performance and authentication. All of your work in this codelab can be done within a browser. You do not need to install anything.

Initialize environment

Open Cloud Shell and set your project variables to ensure all commands target the correct infrastructure.

export PROJECT_ID="$(gcloud config get-value project)"

gcloud config set project "$PROJECT_ID"

export REGION="us-central1"
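Every later command interpolates these variables, and `gcloud config get-value project` can come back empty when no default project is configured. A minimal guard, assuming a Bash-compatible shell as in Cloud Shell; `require_vars` is a hypothetical helper, not part of the lab repository:

```shell
# Hypothetical helper (not part of the lab repository): succeeds only if every
# named variable is exported and non-empty.
require_vars() {
  local name missing=0
  for name in "$@"; do
    # printenv prints nothing for variables that are unset or not exported.
    if [ -z "$(printenv "$name")" ]; then
      echo "ERROR: ${name} is not exported or is empty" >&2
      missing=1
    fi
  done
  return "$missing"
}
```

For example, `require_vars PROJECT_ID REGION && echo "Targeting ${PROJECT_ID} in ${REGION}"` prints the target only when both variables are set.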

Enable APIs

Run the following command to enable the Google Cloud services this lab needs.

gcloud services enable \
  artifactregistry.googleapis.com \
  bigqueryunified.googleapis.com \
  cloudaicompanion.googleapis.com \
  cloudbuild.googleapis.com \
  cloudresourcemanager.googleapis.com \
  datacatalog.googleapis.com \
  run.googleapis.com
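To confirm the services are on before moving on, you can compare the list above against what is actually enabled. A minimal sketch, assuming the enabled service names arrive one per line on standard input (for example from `gcloud services list --enabled --format="value(config.name)"`); `missing_services` is a hypothetical helper:

```shell
# Hypothetical helper: prints every service from this lab's list that does not
# appear in the enabled-services list read from standard input.
missing_services() {
  local enabled svc
  enabled=$(cat)
  for svc in artifactregistry.googleapis.com bigqueryunified.googleapis.com \
             cloudaicompanion.googleapis.com cloudbuild.googleapis.com \
             cloudresourcemanager.googleapis.com datacatalog.googleapis.com \
             run.googleapis.com; do
    # -x matches whole lines; -F treats dots literally instead of as regex wildcards.
    printf '%s\n' "$enabled" | grep -qxF "$svc" || echo "$svc"
  done
}
```

Piping the `gcloud services list` command above into `missing_services` should print nothing once the enable command has finished; if your gcloud version formats the listing differently, adjust the command that feeds it.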

Clone the repository

Get the infrastructure code and automation scripts from the GitHub repository. To save disk space in Cloud Shell, we will only download the specific folder needed for this lab.

# Perform a shallow clone to get only the latest repository structure without the full history

git clone --depth 1 --filter=blob:none --sparse https://github.com/GoogleCloudPlatform/devrel-demos.git

cd devrel-demos

# Specify and download only the folder we need for this lab

git sparse-checkout set data-analytics/governance-context

cd data-analytics/governance-context
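A sparse checkout only materializes the paths you ask for, so a typo in the sparse-checkout path leaves you with an empty directory. A quick sanity check, assuming the lab folder contains the terraform directory, the two scripts, and the GEMINI.md file that later steps use; `check_lab_layout` is a hypothetical helper:

```shell
# Hypothetical helper: verifies that the files used later in this lab exist.
# Defaults to the current directory; pass a path to check another location.
check_lab_layout() {
  local dir="${1:-.}" path status=0
  for path in terraform generate_payloads.sh apply_governance.sh GEMINI.md; do
    if [ ! -e "${dir}/${path}" ]; then
      echo "missing: ${dir}/${path}" >&2
      status=1
    fi
  done
  return "$status"
}
```

Running `check_lab_layout` right after the final `cd` should return silently; any "missing:" line points to a sparse-checkout problem.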

Build the "messy" data lake

Real-world data environments are rarely clean. To simulate reality, we need a mix of "official" data marts and untrusted "sandbox" tables.

We will use Terraform to deploy this environment. The configuration handles two tasks:

- Infrastructure: Creates Dataplex Aspect Types and BigQuery Datasets/Tables.

- Data Loading: Runs BigQuery INSERT jobs to populate the tables with sample data immediately after creation.

- Navigate to the terraform directory and initialize it.

cd terraform

terraform init

- Apply the configuration. This may take up to a minute.

terraform apply -var="project_id=${PROJECT_ID}" -var="region=${REGION}" -auto-approve
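Because -auto-approve skips the interactive plan review, an empty variable would go straight to Terraform. A hypothetical wrapper (not part of the repository) that refuses to run when either value is empty, accepts only apply or destroy, and otherwise forwards the same flags shown above:

```shell
# Hypothetical wrapper around the terraform commands used in this lab.
# Usage: tf_governance apply|destroy  (run from the terraform directory)
tf_governance() {
  local action="$1"
  case "$action" in
    apply|destroy) ;;
    *) echo "usage: tf_governance apply|destroy" >&2; return 2 ;;
  esac
  # Fail before Terraform sees an empty project or region.
  if [ -z "${PROJECT_ID:-}" ] || [ -z "${REGION:-}" ]; then
    echo "ERROR: export PROJECT_ID and REGION first" >&2
    return 1
  fi
  terraform "$action" -var="project_id=${PROJECT_ID}" -var="region=${REGION}" -auto-approve
}
```

From the terraform directory, `tf_governance apply` is equivalent to the command above, and `tf_governance destroy` covers the clean-up step.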

Checkpoint: You now have a fully populated but completely ungoverned data lake. To an AI, every table looks exactly the same.

3. Applying governance

This is the critical engineering step. Currently, the tables finance_mart.fin_monthly_closing_internal and analyst_sandbox.tmp_data_dump_v2_final_real look identical to an LLM. They are just objects with columns.

As a governance engineer, you must attach an Aspect (a certified metadata label) to these tables to differentiate them. In a real enterprise, you would automate this via CI/CD pipelines. We will simulate that automation with scripts.

Generate governance payloads

Dataplex Aspect keys must be globally unique (prefixed with your Project ID). The ./generate_payloads.sh script will dynamically generate the YAML metadata files.

cd ..

chmod +x ./generate_payloads.sh

./generate_payloads.sh

This creates a folder named aspect_payloads containing four YAML files that define the governance scenarios (Gold/Internal, Gold/Public, Silver/Realtime, Bronze/Sandbox).
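The keys in these files follow the PROJECT.LOCATION.ASPECT_TYPE_ID pattern visible in the payload shown in the next step. A minimal sketch of deriving such a key from your environment; the official-data-product-spec default comes from this lab, while `aspect_key` itself is hypothetical and is not the contents of generate_payloads.sh:

```shell
# Hypothetical helper: builds an Aspect key of the form PROJECT.LOCATION.ASPECT_TYPE_ID.
# Usage: aspect_key PROJECT LOCATION [ASPECT_TYPE_ID]
aspect_key() {
  local project="$1" location="$2" type_id="${3:-official-data-product-spec}"
  if [ -z "$project" ] || [ -z "$location" ]; then
    echo "usage: aspect_key PROJECT LOCATION [ASPECT_TYPE_ID]" >&2
    return 1
  fi
  printf '%s.%s.%s\n' "$project" "$location" "$type_id"
}
```

For example, `aspect_key "$PROJECT_ID" "$REGION"` prints the key that should head each generated YAML file.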

Apply Aspects via CLI

Before running the script, let's look at what we are actually applying to demystify the process. Run the following command to see the structure of the internal finance payload:

cat aspect_payloads/fin_internal.yaml

It will show you the following contents.

your-project-id.us-central1.official-data-product-spec:
  data:
    product_tier: GOLD_CRITICAL
    data_domain: FINANCE
    usage_scope: INTERNAL_ONLY
    update_frequency: DAILY_BATCH
    is_certified: true

Notice how this YAML explicitly defines the business context, such as setting the is_certified: true flag and assigning the GOLD_CRITICAL tier. This gives the AI clear, structured rules to evaluate instead of guessing based on table names.
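Because the payload is strictly structured, any tool can read a field without interpretation. A minimal sketch, assuming one field: value pair per line as in the file above (indentation is ignored); `payload_field` is a hypothetical helper and deliberately not a general YAML parser:

```shell
# Hypothetical helper: prints the value of the first "FIELD: value" line in FILE.
# Usage: payload_field FILE FIELD   (returns 1 if the field is absent)
payload_field() {
  # awk strips leading whitespace, matches lines starting with "FIELD: ",
  # prints everything after that prefix, and exits 1 if nothing matched.
  awk -v key="$2" '
    { line = $0; sub(/^[ \t]+/, "", line) }
    index(line, key ": ") == 1 { print substr(line, length(key) + 3); found = 1; exit }
    END { if (!found) exit 1 }
  ' "$1"
}
```

For example, `payload_field aspect_payloads/fin_internal.yaml product_tier` should print GOLD_CRITICAL for the file shown above.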

Now run the script that applies the metadata. It iterates through the BigQuery tables and runs the gcloud dataplex entries update command to attach this rigid metadata to each one.

chmod +x ./apply_governance.sh

./apply_governance.sh
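Each BigQuery table appears in Dataplex Universal Catalog as an entry in the system @bigquery entry group. A minimal sketch of building a table's entry name, assuming the bigquery.googleapis.com/projects/PROJECT/datasets/DATASET/tables/TABLE naming convention; `bq_entry_id` is hypothetical, and apply_governance.sh may build its arguments differently:

```shell
# Hypothetical helper: prints the Dataplex entry name for a BigQuery table.
# Usage: bq_entry_id PROJECT DATASET TABLE
bq_entry_id() {
  if [ "$#" -ne 3 ]; then
    echo "usage: bq_entry_id PROJECT DATASET TABLE" >&2
    return 1
  fi
  printf 'bigquery.googleapis.com/projects/%s/datasets/%s/tables/%s\n' "$1" "$2" "$3"
}
```

For example, `bq_entry_id "$PROJECT_ID" finance_mart fin_monthly_closing_internal` prints the entry name of the internal finance table. To update an entry by hand, that name is passed to gcloud dataplex entries update along with --entry-group=@bigquery and --location; check `gcloud dataplex entries update --help` for the exact flag that takes the aspects file.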

Verification (Optional)

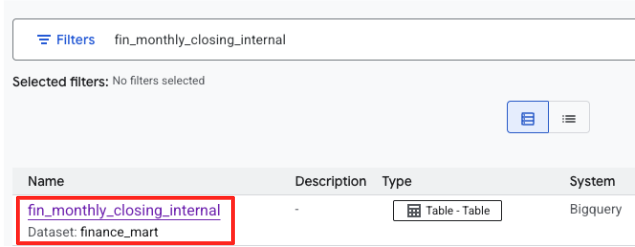

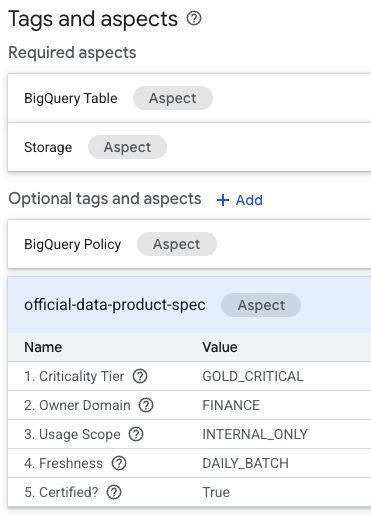

Before proceeding, verify that the metadata was correctly applied in the console.

- Open the Dataplex Universal Catalog page in Google Cloud Console. If you do not see "Dataplex Universal Catalog" in the left-hand navigation menu, use the Search bar at the top of the Google Cloud console window, type Dataplex, and select the result under "Top results" or "Products & Pages".

- Search for fin_monthly_closing_internal. You should see the BigQuery table listed in the results. Click on the table name to enter its details page.

- On the table's details page, look for the "Optional tags and aspects" section at the bottom of the page.

- You will find the official-data-product-spec aspect. Confirm that the values match the "Gold Internal" scenario we applied.

You have now confirmed that technically identical BigQuery tables (fin_monthly_closing_internal and tmp_data_dump_v2_final_real) are logically differentiated by machine-readable metadata.

4. Configure and prototype the agent

Before building a web application (which we will do in Part 2), we will verify our governance logic locally. We need to install the Dataplex Extension and configure the system prompt.

Install the extension

In Cloud Shell, install the Dataplex Extension. It will ask you for confirmation and your setup details.

export DATAPLEX_PROJECT="${PROJECT_ID}"

gemini extensions install https://github.com/gemini-cli-extensions/dataplex

(Type Y to accept the installation, and enter your Project ID when prompted).

Define the policy file

The GEMINI.md file contains the logic that translates abstract human rules (e.g., "I need safe data") into strict technical lookups.

This file is currently generic. The agent needs to know exactly which Google Cloud project to search to prevent it from hallucinating tables from the public internet or other contexts.

- Inject your PROJECT_ID into the policy file.

envsubst < GEMINI.md > GEMINI.md.tmp && mv GEMINI.md.tmp GEMINI.md

- Inspect the file to understand the algorithm we are teaching the AI.

cat GEMINI.md

Notice two things in this file:

- Project Scope: Check Phase 2. Ensure that projectid:${PROJECT_ID} has been replaced with your actual Project ID (e.g., projectid:my-lab-project). If this variable is not replaced, the agent will search across every project you have access to, leading to incorrect answers.

- The Algorithm: Notice the Phase 1 / Phase 2 logic. We explicitly instruct the model NOT to guess SQL. It must first search for the correct tag definition (Phase 1) and only then search for data (Phase 2).
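Because an unreplaced placeholder silently widens the search scope, it is worth checking mechanically rather than by eye. A minimal sketch; `check_policy_scope` is a hypothetical helper. Note also that envsubst with no arguments replaces every $VARIABLE reference in the file, so if GEMINI.md ever contains other dollar-sign text, `envsubst '${PROJECT_ID}' < GEMINI.md` restricts substitution to that one variable.

```shell
# Hypothetical helper: confirms the policy file is scoped to one project.
# Usage: check_policy_scope FILE PROJECT
check_policy_scope() {
  local file="$1" project="$2"
  # Single quotes keep the shell from expanding the placeholder; -F matches it literally.
  if grep -qF '${PROJECT_ID}' "$file"; then
    echo "ERROR: ${file} still contains the \${PROJECT_ID} placeholder" >&2
    return 1
  fi
  if ! grep -qF "projectid:${project}" "$file"; then
    echo "ERROR: ${file} does not scope searches to projectid:${project}" >&2
    return 1
  fi
}
```

Running `check_policy_scope GEMINI.md "$PROJECT_ID"` before starting the agent should return silently.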

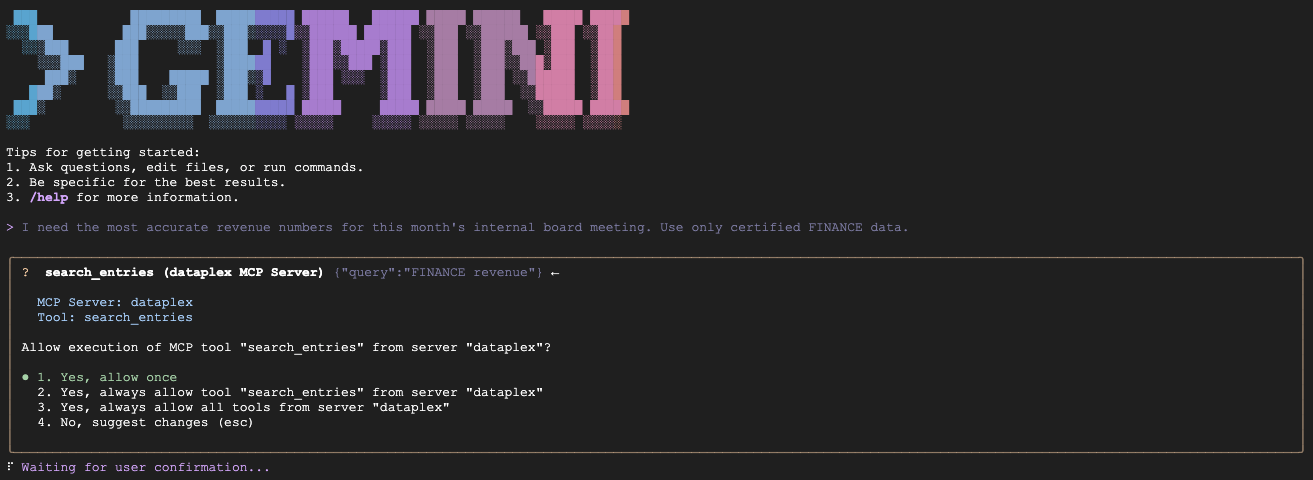

Start the agent and test scenarios

Start a Gemini CLI session. This time, it loads your governance policy as the system context.

gemini

Note: You may see multiple context files being loaded (e.g., GEMINI.md and others). This is normal. The CLI loads the local GEMINI.md for this project's specific rules, plus the default instructions for the Dataplex Extension itself.

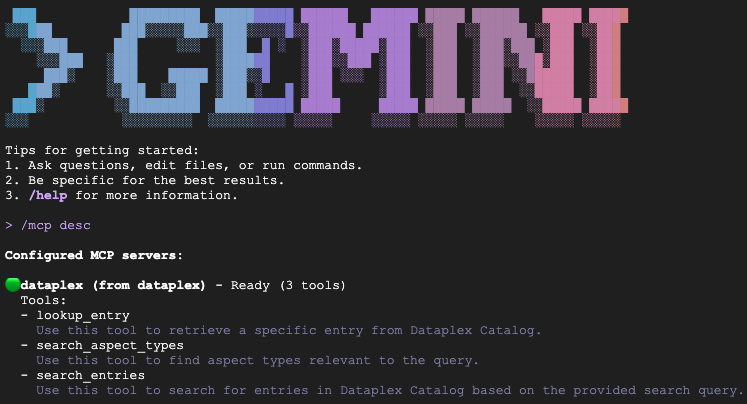

Verify installation

Type /mcp desc to confirm the Dataplex Extension is active. You should see dataplex listed as a configured MCP server with available tools.

Test scenarios (Prototyping)

Paste the following prompts into the running agent session one by one to verify it adheres to your rules.

- Scenario A (Certify the CFO's data):

"We are preparing the deck for an internal Board of Directors meeting next week. I need the numbers to be absolutely finalized, trustworthy, and kept strictly confidential. Which table is safe to use?"

Expected: The agent selects fin_monthly_closing_internal because the request semantically matches GOLD_CRITICAL (finalized, trustworthy) and INTERNAL_ONLY (confidential board meeting) in its Aspect.

- Scenario B (Public disclosure):

"I need to share our quarterly financial summary with an external consulting firm. It is critical that we do not leak any raw or internal metrics. Which dataset is officially scrubbed and explicitly approved for external sharing?"

Expected: The agent must bypass the monthly internal table and strictly select fin_quarterly_public_report because it is the only asset tagged EXTERNAL_READY.

- Scenario C (Operational needs):

"My dashboard needs to show what's happening right now with our ad spend. I can't wait for the overnight load. What do you recommend?"

Expected: The agent selects mkt_realtime_campaign_performance because it identifies the REALTIME_STREAMING update frequency, prioritizing that over the GOLD_CRITICAL tier of the finance data.

- Scenario D (Sandbox experimentation):

"I'm just playing around with some new ML models and need a lot of raw data. It doesn't need to be perfect, just a sandbox environment."

Expected: The agent selects tmp_data_dump_v2_final_real because it semantically matches BRONZE_ADHOC (raw data) and is_certified: false (sandbox environment) in its Aspect.
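The expected answers above can be cross-checked without the model by filtering the generated payloads on their exact values. A minimal sketch, assuming each file in aspect_payloads holds one field: value pair per line as in fin_internal.yaml; `find_payloads` is a hypothetical helper. Unlike the agent, it only matches exact enum values and does no semantic interpretation of a request.

```shell
# Hypothetical helper: lists the YAML files in DIR whose FIELD equals VALUE.
# Usage: find_payloads DIR FIELD VALUE
find_payloads() {
  local dir="$1" field="$2" value="$3" f
  for f in "$dir"/*.yaml; do
    [ -e "$f" ] || continue   # the glob stays literal when DIR has no YAML files
    # Strip indentation, then require an exact whole-line "field: value" match.
    if sed 's/^[[:space:]]*//' "$f" | grep -qxF "${field}: ${value}"; then
      echo "$f"
    fi
  done
}
```

For example, `find_payloads aspect_payloads update_frequency REALTIME_STREAMING` should list only the Silver/Realtime payload, the one you expect to back Scenario C.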

(To exit the Gemini session, type /quit)

5. Congratulations! What's next?

You have successfully built a governed data foundation and verified, with a local CLI prototype, that an AI agent follows your metadata rules!

Now, you have reached a checkpoint. Please choose your next step:

Option A: I want to continue to Part 2 right now!

If you are ready to turn this local prototype into a secure, production-grade web application using the Model Context Protocol (MCP) and Cloud Run:

Option B: I will do Part 2 later or I only wanted to complete Part 1.

If you want to stop for today and avoid cloud costs, you should clean up your resources.

Don't worry! In Part 2, we will provide a "Fast-Track Script" that will completely rebuild this Part 1 environment for you in just 2 minutes so you can pick up exactly where you left off.

👉 Proceed to the Clean up section.

6. Clean up (For option B only)

If you are stopping here, destroy the resources to avoid incurring charges.

Destroy the Datalake (Terraform)

If you are currently in the Gemini CLI environment, exit the session by pressing Ctrl+C twice or typing /quit. Then, run the following commands:

cd ~/devrel-demos/data-analytics/governance-context/terraform

terraform destroy -var="project_id=${PROJECT_ID}" -var="region=${REGION}" -auto-approve

Uninstall the Gemini CLI extension and remove local files

gemini extensions uninstall dataplex

cd ~

rm -rf ~/devrel-demos