1. Introduction

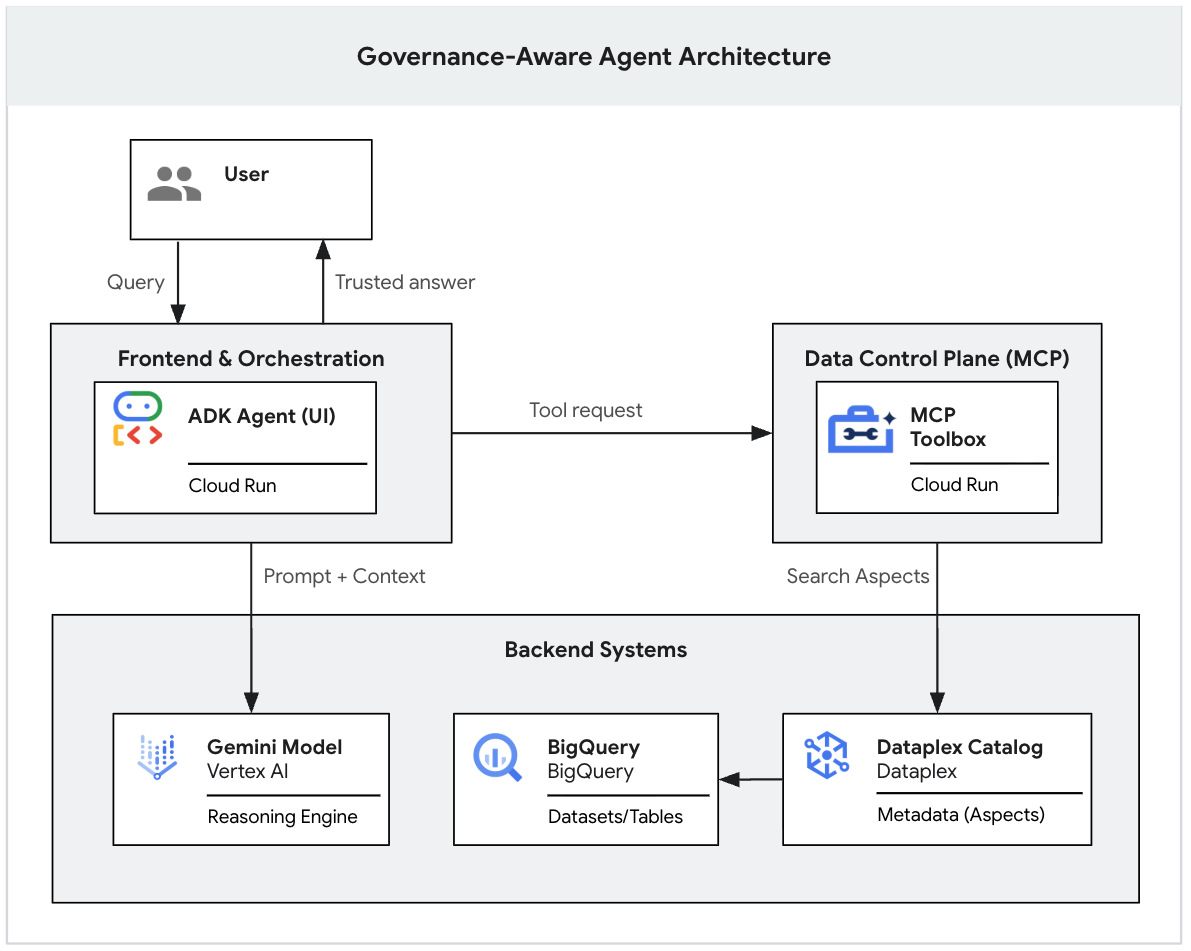

This codelab is part of a two-part series exploring how to build a Governance-Aware GenAI Agent.

(You can read the first part of this series, which covers how to establish the data foundation by applying Dataplex Aspects to BigQuery tables and testing the rules locally via the Gemini CLI. 👉 Read Part 1)

However, testing in a local CLI is just the beginning. To roll this out to your entire company, you need centralized security, standardized AI tool connections, and a proper application framework to orchestrate the agent's logic and provide a familiar chat interface.

In this second part, you will solve these challenges and scale to production. You will deploy your governance rules into a central MCP server hosted on Cloud Run. Then, you will use Google's Agent Development Kit (ADK) to build the actual agent application and connect it to your MCP tools, complete with a professional web UI.

Prerequisites

- A Google Cloud project with billing enabled.

- Basic understanding of Cloud Run, IAM Service Accounts, and Python.

- The BigQuery datasets and Dataplex Aspects created in Part 1. (Don't worry if you deleted them; we provide a fast-track script below to recreate them!)

What you'll learn

- How to use the Model Context Protocol (MCP) to standardize how AI agents interact with Google Cloud data.

- How to deploy a secure MCP server to Cloud Run.

- How to build an AI agent using the Agent Development Kit (ADK) and connect it to your MCP backend.

- How to run the ADK's built-in developer UI to interact with your governed agent.

What you'll need

- Access to Google Cloud Shell

Key concepts

- Model Context Protocol (MCP): Think of MCP as a "universal USB-C cable" for AI agents. Instead of writing custom API integration code for every single AI model, MCP provides a standard way for AI to securely connect to your enterprise data tools (like Dataplex and BigQuery).

- Agent Development Kit (ADK): A flexible, open-source framework by Google designed to simplify the end-to-end development of AI agents. It applies software engineering principles to agent creation, allowing you to orchestrate complex tools, manage state, and easily launch a built-in developer UI for testing and deployment.

2. Setup and requirements

Start Cloud Shell

While Google Cloud can be operated remotely from your laptop, in this codelab you will be using Google Cloud Shell, a command line environment running in the Cloud.

From the Google Cloud Console, click the Cloud Shell icon on the top-right toolbar.

It should only take a few moments to provision and connect to the environment.

This virtual machine is loaded with all the development tools you'll need. It offers a persistent 5GB home directory, and runs on Google Cloud, greatly enhancing network performance and authentication. All of your work in this codelab can be done within a browser. You do not need to install anything.

Initialize environment

Open Cloud Shell and set your project variables to ensure all commands target the correct infrastructure.

```bash
export PROJECT_ID=$(gcloud config get-value project)
gcloud config set project $PROJECT_ID
export REGION="us-central1"
```

Checkpoint: Resume or rebuild?

Since this is Part 2, your agent needs the governed data from Part 1 to function. Please choose your path:

Path A: I just finished Part 1 and my resources are still running.

Great! Navigate to the working directory and you are ready to proceed.

```bash
cd ~/devrel-demos/data-analytics/governance-context
```

Path B: I skipped Part 1 OR I deleted my resources (Cleaned up).

No problem! We have provided a "Fast-Track" command block below. This will automatically rebuild the BigQuery data lake and apply the Dataplex governance metadata exactly as we did in Part 1.

```bash
# 1. Clone the repo into your home directory and navigate to the working directory
cd ~
git clone --depth 1 --filter=blob:none --sparse https://github.com/GoogleCloudPlatform/devrel-demos.git
cd devrel-demos
git sparse-checkout set data-analytics/governance-context
cd data-analytics/governance-context

# 2. Rebuild the messy data lake with Terraform
cd terraform
terraform init
terraform apply -var="project_id=${PROJECT_ID}" -var="region=${REGION}" -auto-approve

# 3. Generate and apply Dataplex Aspects (governance rules)
cd ..
chmod +x ./generate_payloads.sh ./apply_governance.sh
./generate_payloads.sh
./apply_governance.sh
```

3. Scale with MCP: Building the data control plane

So far, you have successfully tested your governance logic using the Gemini CLI. This is excellent for rapid prototyping, but it runs locally using your personal user credentials.

In a real enterprise environment, you need a centralized data control plane. To build this, we will use the GenAI Toolbox for Databases, an official open-source project by Google. This toolbox provides a pre-built MCP server designed specifically to connect AI agents securely to Google Cloud databases and metadata services like Dataplex.

By deploying this toolbox as our MCP Server on Cloud Run, we achieve:

- Centralized identity: The agent runs as a restricted Service Account, not as your personal user account.

- Standardization: Any client (ADK, Gemini, custom apps) can "plug in" to this server using the standard Model Context Protocol.

- Controlled scope (least privilege): We don't give the LLM open-ended access to BigQuery. We force it to navigate through the Dataplex metadata catalog first.

Configure the tool definition (tools.yaml)

The GenAI Toolbox requires a declarative configuration file, tools.yaml. This file defines the sources (where to connect) and the tools (what the AI is allowed to do).

- Navigate to the server directory and inject your Project ID into the configuration file:

```bash
cd ~/devrel-demos/data-analytics/governance-context/mcp_server
envsubst < tools.yaml > tools.tmp && mv tools.tmp tools.yaml
cat tools.yaml
```

It should match the following snippet, except that the project field now contains your actual Google Cloud project ID instead of YOUR-PROJECT-ID.

```yaml
sources:
  dataplex:
    kind: dataplex
    project: YOUR-PROJECT-ID

tools:
  search_entries:
    kind: dataplex-search-entries
    source: dataplex
    description: Search for entries in Dataplex Catalog.
  lookup_entry:
    kind: dataplex-lookup-entry
    source: dataplex
    description: Retrieve a specific entry from Dataplex Catalog.
  search_aspect_types:
    kind: dataplex-search-aspect-types
    source: dataplex
    description: Find aspect types relevant to a query.

toolsets:
  dataplex-toolset:
    - search_entries
    - lookup_entry
    - search_aspect_types
```

By exposing only these three catalog tools, all of which read metadata and none of which modify data, we keep the AI "read-only" and "governance-first".
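The Toolbox only exposes what the toolsets list, so it can be worth checking the configuration before you upload it. The following is a minimal sketch, not part of GenAI Toolbox. It mirrors the tools.yaml snippet above as a Python dict, so no YAML library is needed, and it verifies three things for each tool in a toolset: the tool is defined, it points at a declared source, and it uses a kind from a read-only allowlist. The READ_ONLY_KINDS allowlist and the check_toolset function are hypothetical.

```python
from typing import Dict, List

# Mirrors the tools.yaml snippet as a plain dict (illustrative copy).
CONFIG: Dict = {
    "sources": {"dataplex": {"kind": "dataplex", "project": "YOUR-PROJECT-ID"}},
    "tools": {
        "search_entries": {"kind": "dataplex-search-entries", "source": "dataplex"},
        "lookup_entry": {"kind": "dataplex-lookup-entry", "source": "dataplex"},
        "search_aspect_types": {"kind": "dataplex-search-aspect-types", "source": "dataplex"},
    },
    "toolsets": {
        "dataplex-toolset": ["search_entries", "lookup_entry", "search_aspect_types"],
    },
}

# Hypothetical policy: only catalog read operations are allowed.
READ_ONLY_KINDS = {
    "dataplex-search-entries",
    "dataplex-lookup-entry",
    "dataplex-search-aspect-types",
}


def check_toolset(config: Dict, toolset: str) -> List[str]:
    """Return a list of problems; an empty list means the toolset passes."""
    if toolset not in config["toolsets"]:
        return [f"{toolset}: no such toolset"]
    problems: List[str] = []
    for name in config["toolsets"][toolset]:
        tool = config["tools"].get(name)
        if tool is None:
            problems.append(f"{name}: not defined under tools")
            continue
        if tool["source"] not in config["sources"]:
            problems.append(f"{name}: unknown source {tool['source']!r}")
        if tool["kind"] not in READ_ONLY_KINDS:
            problems.append(f"{name}: kind {tool['kind']!r} is not on the read-only allowlist")
    return problems


if __name__ == "__main__":
    print(check_toolset(CONFIG, "dataplex-toolset") or "toolset OK")
```

Adding any tool whose kind writes or queries data would then show up as a problem, which keeps the read-only intent visible in review.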

Secure the configuration (Secret Manager)

In enterprise architecture, you should never bake configuration files directly into container images. We will store tools.yaml securely in Google Cloud Secret Manager.

```bash
gcloud services enable secretmanager.googleapis.com
gcloud secrets create dataplex-tools-config --data-file=tools.yaml
```

Implement Least Privilege (IAM)

Next, we create a dedicated Service Account for the GenAI Toolbox MCP server. This identity will only have the exact permissions required to read the Dataplex catalog and access BigQuery data.

```bash
export MCP_SA=mcp-sa
gcloud iam service-accounts create ${MCP_SA} \
  --display-name="Service Account for Dataplex MCP"
export MCP_SERVICE_ACCOUNT="${MCP_SA}@${PROJECT_ID}.iam.gserviceaccount.com"

# Allow the server to read its own config from Secret Manager
gcloud projects add-iam-policy-binding $PROJECT_ID \
  --member="serviceAccount:$MCP_SERVICE_ACCOUNT" \
  --role="roles/secretmanager.secretAccessor"

# Allow the server to read Dataplex Metadata and BigQuery Data
gcloud projects add-iam-policy-binding $PROJECT_ID \
  --member="serviceAccount:$MCP_SERVICE_ACCOUNT" \
  --role="roles/dataplex.catalogViewer"
gcloud projects add-iam-policy-binding $PROJECT_ID \
  --member="serviceAccount:$MCP_SERVICE_ACCOUNT" \
  --role="roles/bigquery.dataViewer"
```

Deploy the MCP server to Cloud Run

Now, we deploy the GenAI Toolbox. We use Google's pre-built container image (database-toolbox/toolbox) and mount our configuration from Secret Manager (--set-secrets) at runtime.

```bash
export IMAGE=us-central1-docker.pkg.dev/database-toolbox/toolbox/toolbox:latest
gcloud run deploy governance-mcp \
  --image=$IMAGE \
  --service-account $MCP_SERVICE_ACCOUNT \
  --region=$REGION \
  --no-allow-unauthenticated \
  --set-secrets="/app/tools.yaml=dataplex-tools-config:latest" \
  --args="--tools-file=/app/tools.yaml","--address=0.0.0.0","--port=8080"
```

You have now established a governed API! Instead of connecting directly to your databases, your GenAI frontend will call this Cloud Run URL. The agent can only see what this Toolbox allows it to see.
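Because the service was deployed with --no-allow-unauthenticated, every caller must present a Google-signed identity token. The sketch below shows how an MCP client packages a call: a JSON-RPC 2.0 body is posted with a bearer token to the /mcp endpoint, which is the same path this codelab uses for MCP_SERVER_URL. This is an illustration under assumptions, not the Toolbox's documented client. build_mcp_request is a hypothetical helper, and MCP_URL and ID_TOKEN are hypothetical environment variables. The token could come from gcloud auth print-identity-token. The request is built but not sent.

```python
import json
import os
import urllib.request
from typing import Any, Dict, Optional


def build_mcp_request(
    url: str,
    id_token: str,
    method: str,
    params: Optional[Dict[str, Any]] = None,
    request_id: int = 1,
) -> urllib.request.Request:
    """Wrap an MCP method call in a JSON-RPC 2.0 envelope and an authenticated POST."""
    body: Dict[str, Any] = {"jsonrpc": "2.0", "id": request_id, "method": method}
    if params is not None:
        body["params"] = params
    return urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        method="POST",
        headers={
            # Needed because the service rejects unauthenticated calls.
            "Authorization": f"Bearer {id_token}",
            "Content-Type": "application/json",
            # MCP's HTTP transport may reply with plain JSON or an SSE stream.
            "Accept": "application/json, text/event-stream",
        },
    )


if __name__ == "__main__":
    # Hypothetical env vars: MCP_URL is your service URL plus "/mcp".
    url = os.environ.get("MCP_URL", "https://example.invalid/mcp")
    token = os.environ.get("ID_TOKEN", "placeholder-token")
    req = build_mcp_request(url, token, "tools/list", request_id=2)
    print(req.get_method(), req.full_url, req.data.decode())
    # urllib.request.urlopen(req) would send it. A real session must call
    # "initialize" first and echo back any Mcp-Session-Id header it receives.
```

A complete MCP session begins with an initialize exchange. The sketch only shows the envelope and headers that every request shares.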

4. Build the agent backend with ADK

You have established a secure, governed Data Control Plane (MCP) running on Cloud Run. Now your AI agent needs a framework to orchestrate its logic, such as processing user inputs, deciding when to call the MCP server, and formatting the output.

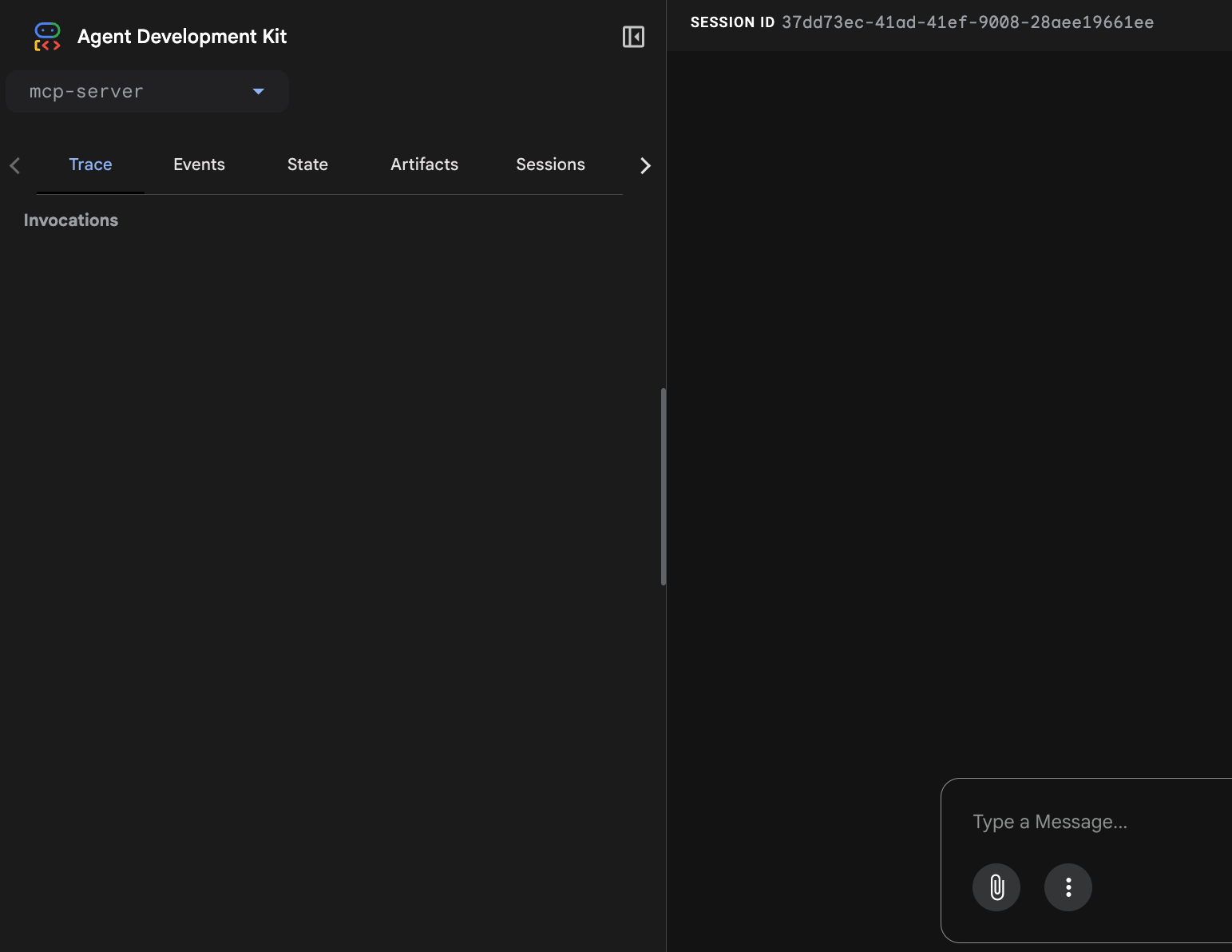

Instead of writing all this boilerplate code from scratch, we will use Google's Agent Development Kit (ADK). The ADK is a code-first framework that automatically wraps your agent logic into a FastAPI backend. Furthermore, it comes with a built-in developer UI, allowing you to instantly visualize the agent's reasoning process and tool calls without building a custom frontend first.

Inspect the Agent Logic (agent.py)

Before configuring the infrastructure, let's look at the core of this application.

Navigate to the directory and output the contents of agent.py. This file is the "brain" of your ADK deployment.

```bash
cd ~/devrel-demos/data-analytics/governance-context/mcp_server
cat agent.py
```

Look at the code structure. It performs three critical functions with minimal boilerplate:

- MCPToolset Integration: Instead of writing custom HTTP clients to interact with your Dataplex tools, the ADK uses MCPToolset(server_url=mcp_url). This dynamically fetches the tool definitions declared in tools.yaml from your deployed MCP server and translates them into native function calls for the LLM.

- System Instructions: The instructions parameter contains the strict governance rules (the same logic we used in the GEMINI.md file for the Gemini CLI). It explicitly orders the model to follow a two-phase reasoning loop: Phase 1 (metadata lookup) before Phase 2 (data query).

- Agent Orchestration: The Agent(...) class binds the Gemini model, the system prompt, and the MCP tools together. When deployed, ADK automatically converts this object into a scalable FastAPI endpoint.
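In this codelab, the Phase 1 / Phase 2 order is requested through the system instructions rather than enforced in code. The toy model below makes that intended ordering concrete. GovernanceGate is a hypothetical class, not an ADK API. It refuses a data query for any table whose metadata has not been looked up first.

```python
from typing import Set


class GovernanceGate:
    """Allow a data query on a table only after its metadata was looked up."""

    def __init__(self) -> None:
        self.inspected: Set[str] = set()

    def lookup_metadata(self, table: str) -> None:
        # Phase 1: record that governance metadata was read for this table.
        self.inspected.add(table)

    def query(self, table: str, sql: str) -> str:
        # Phase 2: refuse any table that skipped Phase 1.
        if table not in self.inspected:
            raise PermissionError(f"look up metadata for {table!r} before querying it")
        return f"would run on {table}: {sql}"


if __name__ == "__main__":
    gate = GovernanceGate()
    gate.lookup_metadata("mkt_realtime_campaign_performance")
    print(gate.query("mkt_realtime_campaign_performance", "SELECT 1"))
```

A check like this turns a prompt-level convention into a hard guarantee. Whether that is worth the extra rigidity is a design choice.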

Separation of duties: Configure the frontend identity

To run this code securely, we must tell the agent where your MCP server is located. We will construct the URL dynamically and save it to a .env file that the ADK will read at runtime.

We will also create a separate identity (dataplex-agent-sa) for this user-facing application. This separation of duties ensures that the frontend agent has different permissions than the backend governance server.

Run the following commands to configure the environment and identity:

```bash
export PROJECT_NUMBER=$(gcloud projects describe $PROJECT_ID --format="value(projectNumber)")
export MCP_SERVER_URL=https://governance-mcp-${PROJECT_NUMBER}.${REGION}.run.app/mcp
export AGENT_SA=dataplex-agent-sa
export AGENT_SERVICE_ACCOUNT="${AGENT_SA}@${PROJECT_ID}.iam.gserviceaccount.com"
gcloud iam service-accounts create ${AGENT_SA} \
  --display-name="Service Account for Dataplex Agent"
```
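MCP_SERVER_URL relies on Cloud Run's deterministic URL format, https://SERVICE-PROJECT_NUMBER.REGION.run.app. That format uses the numeric project number, not the project ID. The small helper below is hypothetical and only for illustration. It builds the same URL and rejects a project ID passed by mistake.

```python
def cloud_run_url(service: str, project_number: str, region: str, path: str = "") -> str:
    """Return https://SERVICE-PROJECT_NUMBER.REGION.run.app plus an optional path."""
    if not project_number.isdigit():
        # A common slip: passing the project ID where the number is required.
        raise ValueError("use the numeric project number, not the project ID")
    if path and not path.startswith("/"):
        path = "/" + path
    return f"https://{service}-{project_number}.{region}.run.app{path}"


if __name__ == "__main__":
    # Hypothetical project number, for illustration only.
    print(cloud_run_url("governance-mcp", "123456789012", "us-central1", "/mcp"))
```

If requests to the constructed URL fail, gcloud run services describe governance-mcp --region=$REGION shows the URLs that the service actually answers on.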

Configure Runtime Variables

The ADK framework relies on environment variables to understand its context. We need to explicitly set the Project ID and Region, and enable Vertex AI usage. The commands below write these to the .env file, together with the MCP server URL.

```bash
echo MCP_SERVER_URL=$MCP_SERVER_URL > .env
echo GOOGLE_GENAI_USE_VERTEXAI=1 >> .env
echo GOOGLE_CLOUD_PROJECT=$PROJECT_ID >> .env
echo GOOGLE_CLOUD_LOCATION=$REGION >> .env
```
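If a variable such as $PROJECT_ID is empty when these echo commands run, the .env file silently gets a line like GOOGLE_CLOUD_PROJECT=, and the agent fails later at runtime. The minimal sketch below parses simple KEY=VALUE lines and reports required keys that are missing or empty. It is a simplified parser written for this example, not the grammar that ADK or python-dotenv actually implements. For instance, it does not handle export prefixes or multi-line values. parse_env and missing_keys are hypothetical names.

```python
from pathlib import Path
from typing import Dict, List

REQUIRED_KEYS = (
    "MCP_SERVER_URL",
    "GOOGLE_GENAI_USE_VERTEXAI",
    "GOOGLE_CLOUD_PROJECT",
    "GOOGLE_CLOUD_LOCATION",
)


def parse_env(text: str) -> Dict[str, str]:
    """Parse KEY=VALUE lines, skipping blank lines and # comments."""
    env: Dict[str, str] = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue
        # Split on the first "=" only, so values that contain "=" survive.
        key, sep, value = line.partition("=")
        if not sep:
            raise ValueError(f"not a KEY=VALUE line: {raw!r}")
        env[key.strip()] = value.strip().strip('"').strip("'")
    return env


def missing_keys(env: Dict[str, str]) -> List[str]:
    """Return required keys that are absent or have an empty value."""
    return [key for key in REQUIRED_KEYS if not env.get(key)]


if __name__ == "__main__":
    path = Path(".env")
    if path.exists():
        problems = missing_keys(parse_env(path.read_text()))
        print("missing or empty:", problems if problems else "none")
    else:
        print("no .env file in the current directory")
```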

Grant Permissions

Even though the agent delegates governance checks to the MCP server, it still needs basic permissions to operate. We grant exactly two roles:

- Vertex AI User: To invoke the Gemini model for generating natural language responses.

- Cloud Run Invoker: To securely call your MCP Server API. It does not get direct BigQuery or Dataplex access!

```bash
gcloud projects add-iam-policy-binding $PROJECT_ID \
  --member="serviceAccount:$AGENT_SERVICE_ACCOUNT" \
  --role="roles/aiplatform.user"

gcloud run services add-iam-policy-binding governance-mcp \
  --region=$REGION \
  --member="serviceAccount:$AGENT_SERVICE_ACCOUNT" \
  --role="roles/run.invoker"
```
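The two identities now hold disjoint sets of roles. The sketch below models those grants as plain data rather than calling the IAM API. It then checks the separation of duties described above: the agent identity holds no role that reads Dataplex or BigQuery directly. GRANTS, DATA_ROLES, and data_access are hypothetical names. To audit a live project, you would read the actual bindings instead, for example with gcloud projects get-iam-policy and, for the service-level invoker binding, gcloud run services get-iam-policy.

```python
from typing import Dict, Set

# Roles as granted by the gcloud commands in this codelab.
GRANTS: Dict[str, Set[str]] = {
    "mcp-sa": {
        "roles/secretmanager.secretAccessor",
        "roles/dataplex.catalogViewer",
        "roles/bigquery.dataViewer",
    },
    "dataplex-agent-sa": {
        "roles/aiplatform.user",
        "roles/run.invoker",  # granted on the governance-mcp service only
    },
}

# Hypothetical classification: roles that read catalog or table data directly.
DATA_ROLES: Set[str] = {"roles/dataplex.catalogViewer", "roles/bigquery.dataViewer"}


def data_access(identity: str) -> Set[str]:
    """Return the data-reading roles held by an identity."""
    return GRANTS[identity] & DATA_ROLES


if __name__ == "__main__":
    for identity in GRANTS:
        print(identity, "->", sorted(data_access(identity)) or "no direct data access")
```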

Deploy to Cloud Run

Finally, we deploy the full stack to Cloud Run.

We use uvx to run the ADK tool without manually installing dependencies. The command below packages your agent.py logic, builds a container image, injects your Service Account, and launches a FastAPI server. By adding the --with_ui flag, it also bundles the ADK Web Playground for debugging.

This command builds the container and deploys it. It may take 1-3 minutes to complete.

```bash
uvx --from google-adk \
  adk deploy cloud_run \
  --project=$PROJECT_ID \
  --region=$REGION \
  --service_name=dataplex-agent \
  --with_ui \
  . \
  -- \
  --service-account=$AGENT_SERVICE_ACCOUNT \
  --allow-unauthenticated
```

Once this command completes, it will output a Service URL (e.g., https://dataplex-agent-xyz.run.app). Click that link to open your fully governed GenAI Chat Interface.

End-to-End Architectural Flow

You have now completed the system. When a user interacts with the ADK UI, the following sequence occurs:

- User submits a prompt in the ADK Agent (Dev UI).

- The ADK Agent (agent.py) processes the input and calls the Gemini model.

- Gemini determines it needs context and asks the MCP Server to execute the Dataplex tools.

- The MCP Server enforces Dataplex Governance rules and returns the metadata.

- Gemini synthesizes the trusted answer based on the metadata and returns it to the user.
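The sequence above is a standard tool-calling loop. The sketch below shows that loop using simplified stand-ins for every component. run_turn, stub_model, and stub_mcp are hypothetical, and the dict shapes they exchange are invented for illustration. ADK and Gemini use their own types and run this loop for you.

```python
from typing import Any, Callable, Dict, List, Optional, Tuple

# Hypothetical shape: {"tool": name, "args": {...}} or {"text": answer}.
Decision = Dict[str, Any]


def run_turn(
    prompt: str,
    model: Callable[[str, Optional[Any]], Decision],
    call_tool: Callable[[str, Dict[str, Any]], Any],
    trace: List[Tuple[str, str]],
    max_steps: int = 5,
) -> str:
    """Drive one user turn through the agent -> model -> MCP loop."""
    trace.append(("user", prompt))  # 1. user submits a prompt
    decision = model(prompt, None)  # 2. agent sends it to the model
    for _ in range(max_steps):
        if "tool" not in decision:
            break  # the model can answer without another tool call
        trace.append(("tool_call", decision["tool"]))  # 3. model requests a tool
        result = call_tool(decision["tool"], decision.get("args", {}))  # 4. MCP runs it
        decision = model(prompt, result)  # model reads the returned metadata
    answer = decision.get("text", "")
    trace.append(("answer", answer))  # 5. answer goes back to the user
    return answer


def stub_model(prompt: str, tool_result: Optional[Any]) -> Decision:
    # First pass asks for metadata; second pass answers from it.
    if tool_result is None:
        return {"tool": "search_entries", "args": {"query": prompt}}
    return {"text": f"Based on catalog metadata: {tool_result}"}


def stub_mcp(tool: str, args: Dict[str, Any]) -> Any:
    return {"tool": tool, "entries": ["example_table"]}  # placeholder metadata


if __name__ == "__main__":
    steps: List[Tuple[str, str]] = []
    print(run_turn("Which table is real-time?", stub_model, stub_mcp, steps))
    print([kind for kind, _ in steps])
```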

5. Test the Enterprise Agent

Now that your agent is live, let's revisit the governance scenarios you tested with the CLI in Part 1. The logic remains the same, but you are now interacting with the deployed ADK Web Playground, which visualizes the internal state and tool executions. Behind the scenes, each prompt goes through four stages:

- Orchestration: The ADK Agent (running on Cloud Run) receives your text.

- Tool Routing: Gemini recognizes that your question requires data context and forwards the request to the MCP Server.

- Governance Check: The MCP Server (running on a separate Cloud Run instance) queries Dataplex for specific Aspect Types.

- Synthesis: The relevant metadata is returned to Gemini to generate the final answer.

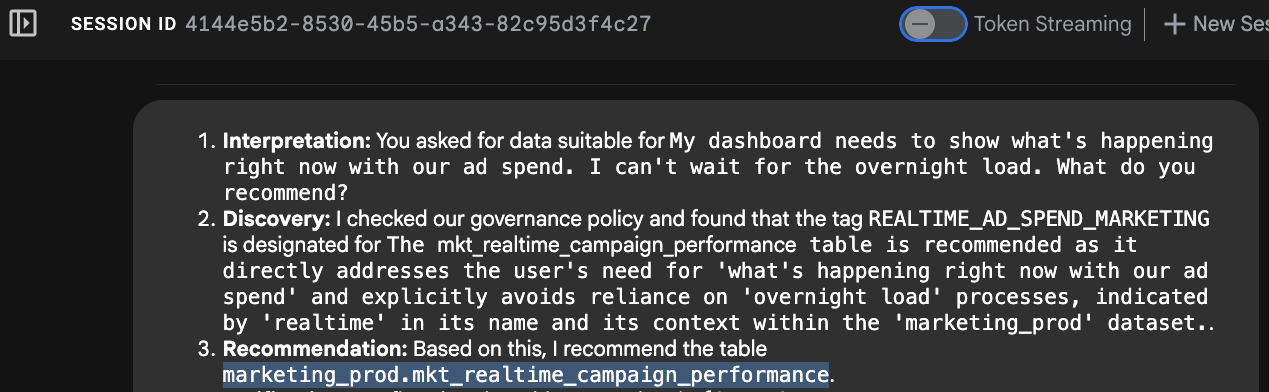

Verify the Governance Logic

Open the Service URL you generated in the previous step (e.g., https://dataplex-agent-xyz.run.app) in your browser. Paste the following prompt:

"My dashboard needs to show what's happening right now with our ad spend. I can't wait for the overnight load. What do you recommend?"

Observe the Agent's reasoning process in the developer UI:

- Intent Recognition: The agent parses "right now" and "can't wait for overnight."

- Metadata Lookup: It calls the MCP tool search_aspect_types. It looks for data assets where the update_frequency Aspect is set to REALTIME or STREAMING, rather than DAILY or MONTHLY.

- Selection: It identifies that the table mkt_realtime_campaign_performance meets these criteria, whereas fin_monthly_closing_internal (despite being high quality) is too slow for your request.

- Response: The agent recommends the real-time table.

Why this matters:

Without this governance metadata, an LLM would likely recommend the fin_monthly_closing_internal table simply because it has a column named "ad_spend," ignoring the fact that the table is not updated in real time. Your metadata context prevented a business error.
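The selection step can be pictured as a filter over catalog metadata. In the minimal sketch below, the two table names and the update_frequency values come from this codelab. The assignment of a value to each table is an assumption, however, and the CATALOG dict is a hypothetical stand-in for what the Dataplex tools would return. tables_fresh_enough is an illustrative helper, not agent code.

```python
from typing import Dict, FrozenSet, List

# Hypothetical metadata; the per-table values are assumed for illustration.
CATALOG: Dict[str, Dict[str, str]] = {
    "mkt_realtime_campaign_performance": {"update_frequency": "REALTIME"},
    "fin_monthly_closing_internal": {"update_frequency": "MONTHLY"},
}

# Frequencies that satisfy "I can't wait for the overnight load".
LIVE_FREQUENCIES: FrozenSet[str] = frozenset({"REALTIME", "STREAMING"})


def tables_fresh_enough(
    catalog: Dict[str, Dict[str, str]],
    acceptable: FrozenSet[str] = LIVE_FREQUENCIES,
) -> List[str]:
    """Return tables whose update_frequency aspect is in the acceptable set."""
    return sorted(
        name
        for name, aspects in catalog.items()
        # A missing aspect yields None, so unlabeled tables are excluded.
        if aspects.get("update_frequency") in acceptable
    )


if __name__ == "__main__":
    print(tables_fresh_enough(CATALOG))
```

A table with no update_frequency aspect is excluded rather than assumed fresh. That matches the agent's governance-first stance.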

You can also test the "Board Meeting" prompt to see how the agent pivots to different tables based on the Data Product Tier aspect:

"We are preparing the deck for an internal Board of Directors meeting next week. I need the numbers to be absolutely finalized, trustworthy, and kept strictly confidential. Which table is safe to use?"

6. Clean up

To avoid incurring charges to your Google Cloud account for the resources used in this codelab, follow these steps to destroy all infrastructure created in Part 1 and Part 2.

Destroy the Datalake (Terraform)

Use Terraform to tear down the BigQuery tables, datasets, and Dataplex Aspect definitions.

```bash
cd ~/devrel-demos/data-analytics/governance-context/terraform
terraform destroy -var="project_id=${PROJECT_ID}" -var="region=${REGION}" -auto-approve
```

Delete Cloud Run services

Remove the compute resources to stop any active billing for the running containers.

```bash
gcloud run services delete governance-mcp --region=$REGION --quiet
gcloud run services delete dataplex-agent --region=$REGION --quiet
```

Clean up build artifacts and staging storage

When you deployed the ADK agent using uvx, the system automatically built a container image and uploaded your source code to a temporary Cloud Storage bucket. These artifacts persist even after the Cloud Run service is deleted and will incur ongoing storage costs.

Remove the Artifact Registry repository and the Cloud Storage staging bucket:

```bash
# Delete the repository used for the agent build
gcloud artifacts repositories delete cloud-run-source-deploy \
  --location=$REGION \
  --quiet

# Delete the staging bucket created by Cloud Run source deploy
gcloud storage rm --recursive gs://run-sources-${PROJECT_ID}-${REGION}
```

Delete identity, permissions, and secrets

Remove the IAM policy bindings first to prevent "tombstone" entries (orphaned records) from remaining in your project's IAM page. Then, delete the Service Accounts and configuration secrets.

```bash
# Remove IAM roles granted to the MCP Service Account
gcloud projects remove-iam-policy-binding $PROJECT_ID \
  --member="serviceAccount:$MCP_SERVICE_ACCOUNT" \
  --role="roles/secretmanager.secretAccessor" --quiet
gcloud projects remove-iam-policy-binding $PROJECT_ID \
  --member="serviceAccount:$MCP_SERVICE_ACCOUNT" \
  --role="roles/dataplex.catalogViewer" --quiet
gcloud projects remove-iam-policy-binding $PROJECT_ID \
  --member="serviceAccount:$MCP_SERVICE_ACCOUNT" \
  --role="roles/bigquery.dataViewer" --quiet

# Remove IAM roles granted to the Agent Service Account
gcloud projects remove-iam-policy-binding $PROJECT_ID \
  --member="serviceAccount:$AGENT_SERVICE_ACCOUNT" \
  --role="roles/aiplatform.user" --quiet

# Delete the Service Accounts
gcloud iam service-accounts delete $MCP_SERVICE_ACCOUNT --quiet
gcloud iam service-accounts delete $AGENT_SERVICE_ACCOUNT --quiet

# Delete the Secret Manager entry
gcloud secrets delete dataplex-tools-config --quiet
```
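Keeping setup and teardown in sync by hand is error-prone: if you forget one remove-iam-policy-binding, a stale binding remains. If you drive gcloud from a script, one way to avoid this drift is to generate both the add and remove commands from a single list of bindings, as sketched below. BINDINGS and binding_commands are hypothetical. The function only builds command strings and runs nothing.

```python
import shlex
from typing import List, Tuple

# (service account name, role) pairs granted at project level in this codelab.
BINDINGS: List[Tuple[str, str]] = [
    ("mcp-sa", "roles/secretmanager.secretAccessor"),
    ("mcp-sa", "roles/dataplex.catalogViewer"),
    ("mcp-sa", "roles/bigquery.dataViewer"),
    ("dataplex-agent-sa", "roles/aiplatform.user"),
]


def binding_commands(project_id: str, action: str) -> List[str]:
    """Build one add- or remove-iam-policy-binding command per binding."""
    if action not in ("add", "remove"):
        raise ValueError("action must be 'add' or 'remove'")
    commands = []
    for sa, role in BINDINGS:
        member = f"serviceAccount:{sa}@{project_id}.iam.gserviceaccount.com"
        cmd = ["gcloud", "projects", f"{action}-iam-policy-binding", project_id,
               f"--member={member}", f"--role={role}"]
        if action == "remove":
            cmd.append("--quiet")  # mirror the teardown commands above
        commands.append(shlex.join(cmd))  # quote safely for a shell
    return commands


if __name__ == "__main__":
    for line in binding_commands("my-project", "remove"):  # hypothetical project ID
        print(line)
```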

Remove local configuration

Finally, clean up the local configuration files and environment variables in Cloud Shell.

```bash
# Uninstall the Gemini CLI extension (installed in Part 1)
gemini extensions uninstall dataplex

# Remove local repository files and unset variables
cd ~
rm -rf ~/devrel-demos
unset MCP_SERVER_URL
unset MCP_SERVICE_ACCOUNT
unset AGENT_SERVICE_ACCOUNT
```

7. Congratulations!

You have successfully deployed an end-to-end, Governance-Aware GenAI Agent.

In this two-part codelab, you moved beyond simple prompt engineering to implement a robust, production-ready architecture. By treating data governance as a prerequisite for GenAI, you established a systematic method to prevent the model from retrieving uncertified or hallucinated data.

Key Takeaways

- Deterministic AI through Metadata: Rather than relying on the LLM to guess the correct table based on column names, you enforced a strict reasoning loop using the GenAI Toolbox for Databases. By explicitly exposing only three Dataplex tools (search_aspect_types, search_entries, lookup_entry), you forced the model to verify data certifications before synthesizing answers.

- Decoupled Architecture (MCP): By deploying the Model Context Protocol (MCP) server on Cloud Run, you abstracted your data governance rules into a centralized, standardized API. The frontend agent does not need to contain database logic; it only needs to communicate via the MCP standard. This means you can plug any future AI model or client into the same governed backend.

- Separation of Duties: You applied the principle of least privilege by isolating IAM identities. The user-facing ADK agent operates with permissions restricted to model invocation and API routing, while the backend MCP server securely handles Dataplex catalog queries and BigQuery data retrieval.

- Code-first Agent Orchestration: You used the Google Agent Development Kit (ADK) to wrap your Python agent logic into a scalable FastAPI backend, and its built-in developer UI to visualize and debug the agent's internal tool executions.

What's Next?

- Dataplex Foundational Governance Codelab: Master the fundamentals of data governance in Dataplex before adding the AI layer.

- Dataplex Tools Documentation: Explore the official documentation for the pre-built Dataplex tools and extensions used in this lab.

- Getting Started with Gemini CLI Extensions: Learn how to build your own custom extensions to give your GenAI agents even more capabilities.

- Deep Dive into MCP: Check out the official MCP specification to understand how to build custom servers for your internal enterprise APIs.