1. Before you begin

Large Language Models (LLMs) are among the most exciting recent breakthroughs in machine learning. They can generate text, translate languages, and answer questions in a comprehensive and informative way. LLMs such as Google's LaMDA and PaLM are trained on massive amounts of text data, which allows them to learn the statistical patterns and relationships between words and phrases. This enables them to generate text that resembles human-written text and to translate languages with a high degree of accuracy.

LLMs require a lot of storage and generally consume a lot of computing power to run, so they are usually deployed in the cloud and are challenging targets for On-Device Machine Learning (ODML), given the limited computational power of mobile devices. It is nevertheless possible to run smaller LLMs (for example, GPT-2) on a modern Android device and still achieve impressive results.

Here is a demo of a version of the Google PaLM model with 1.5 billion parameters running on a Google Pixel 7 Pro, without playback speedup.
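To give a sense of scale for the 1.5-billion-parameter figure above, the following back-of-the-envelope sketch estimates how much memory the weights alone would occupy at different numeric precisions. The helper name and the choice of precisions are illustrative assumptions; the estimate ignores activations, caches, and runtime overhead, so real memory use is higher.

```python
# Rough weight-only memory estimate for a model at several precisions.
# Assumption: every parameter is stored at the same width; activations
# and runtime overhead are ignored.
BYTES_PER_PARAM = {"float32": 4, "float16": 2, "int8": 1}


def weight_footprint_gib(num_params, dtype):
    """Return the size of the weights in GiB (2**30 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 2**30


for dtype in BYTES_PER_PARAM:
    size = weight_footprint_gib(1_500_000_000, dtype)
    print(f"{dtype}: {size:.2f} GiB")
# float32: 5.59 GiB
# float16: 2.79 GiB
# int8: 1.40 GiB
```

Under these assumptions, only the reduced-precision variants fit comfortably on a phone with a few gigabytes of RAM, which is why quantization matters for on-device deployment.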

In this codelab, you learn the techniques and tooling to build an LLM-powered app (using GPT-2 as an example model) with:

- KerasNLP to load a pre-trained LLM

- KerasNLP to fine-tune an LLM

- TensorFlow Lite to convert, optimize and deploy the LLM on Android
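As a preview of the first two items, here is a minimal sketch of loading and fine-tuning GPT-2 with KerasNLP. It assumes the keras_nlp, keras, and tensorflow packages are installed and that the "gpt2_base_en" preset can be downloaded. The sequence length, learning rate, batch size, and helper names are illustrative assumptions, not necessarily the values used in the codelab notebook.

```python
# Assumed preset: a small English GPT-2 checkpoint available in KerasNLP.
PRESET = "gpt2_base_en"


def load_gpt2(sequence_length=128):
    """Load a pre-trained GPT-2 causal language model with its preprocessor."""
    # Imported lazily so the definitions can be read without the package.
    import keras_nlp

    preprocessor = keras_nlp.models.GPT2CausalLMPreprocessor.from_preset(
        PRESET, sequence_length=sequence_length
    )
    return keras_nlp.models.GPT2CausalLM.from_preset(
        PRESET, preprocessor=preprocessor
    )


def fine_tune(model, texts, epochs=1, batch_size=2):
    """Fine-tune the model on an iterable of raw text strings."""
    import keras
    import tensorflow as tf

    # The attached preprocessor tokenizes the raw strings during fit().
    dataset = tf.data.Dataset.from_tensor_slices(list(texts)).batch(batch_size)
    model.compile(
        optimizer=keras.optimizers.Adam(learning_rate=5e-5),  # assumed value
        loss=keras.losses.SparseCategoricalCrossentropy(from_logits=True),
    )
    model.fit(dataset, epochs=epochs)
    return model


def demo():
    """Downloads the preset weights; call this manually to try it out."""
    gpt2_lm = load_gpt2()
    print(gpt2_lm.generate("My trip to Yosemite was", max_length=50))
    fine_tune(gpt2_lm, ["A short sample sentence.", "Another sample sentence."])
    print(gpt2_lm.generate("My trip to Yosemite was", max_length=50))
```

Calling demo() in Colab downloads the weights and prints a completion before and after a token amount of fine-tuning. A real fine-tuning run would use a much larger dataset.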

Prerequisites

- Intermediate knowledge of Keras and TensorFlow Lite

- Basic knowledge of Android development

What you'll learn

- How to use KerasNLP to load a pre-trained LLM and fine-tune it

- How to quantize and convert an LLM to TensorFlow Lite

- How to run inference on the converted TensorFlow Lite model

What you'll need

- Access to Colab

- The latest version of Android Studio

- A modern Android device with more than 4 GB of RAM

2. Get set up

To download the code for this codelab:

- Navigate to the GitHub repository for this codelab.

- Click Code > Download zip to download all the code for this codelab.

- Unzip the downloaded zip file to unpack an examples root folder with all the resources you need.

3. Run the starter app

- Import the examples/lite/examples/generative_ai/android folder into Android Studio.

- Start the Android Emulator, and then click Run in the navigation menu.

Run and explore the app

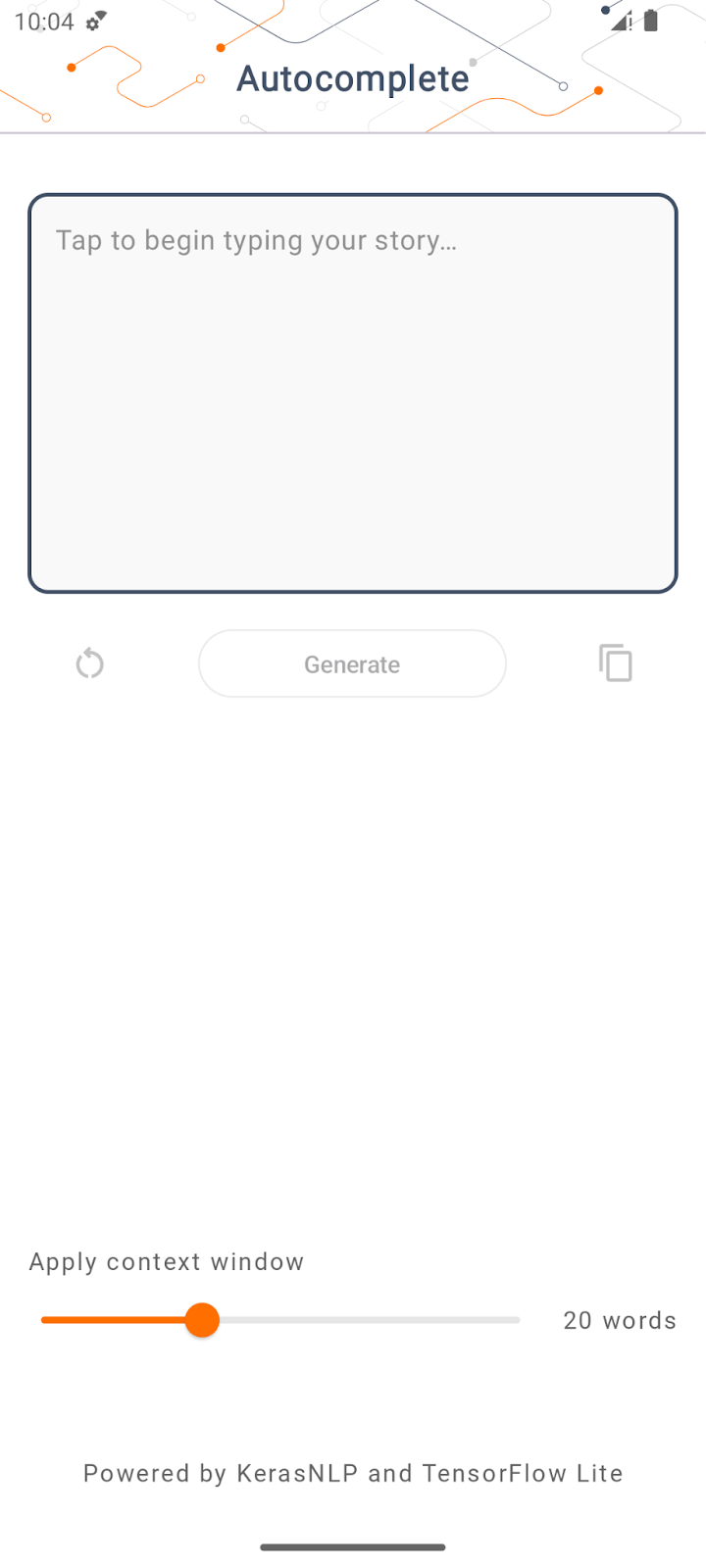

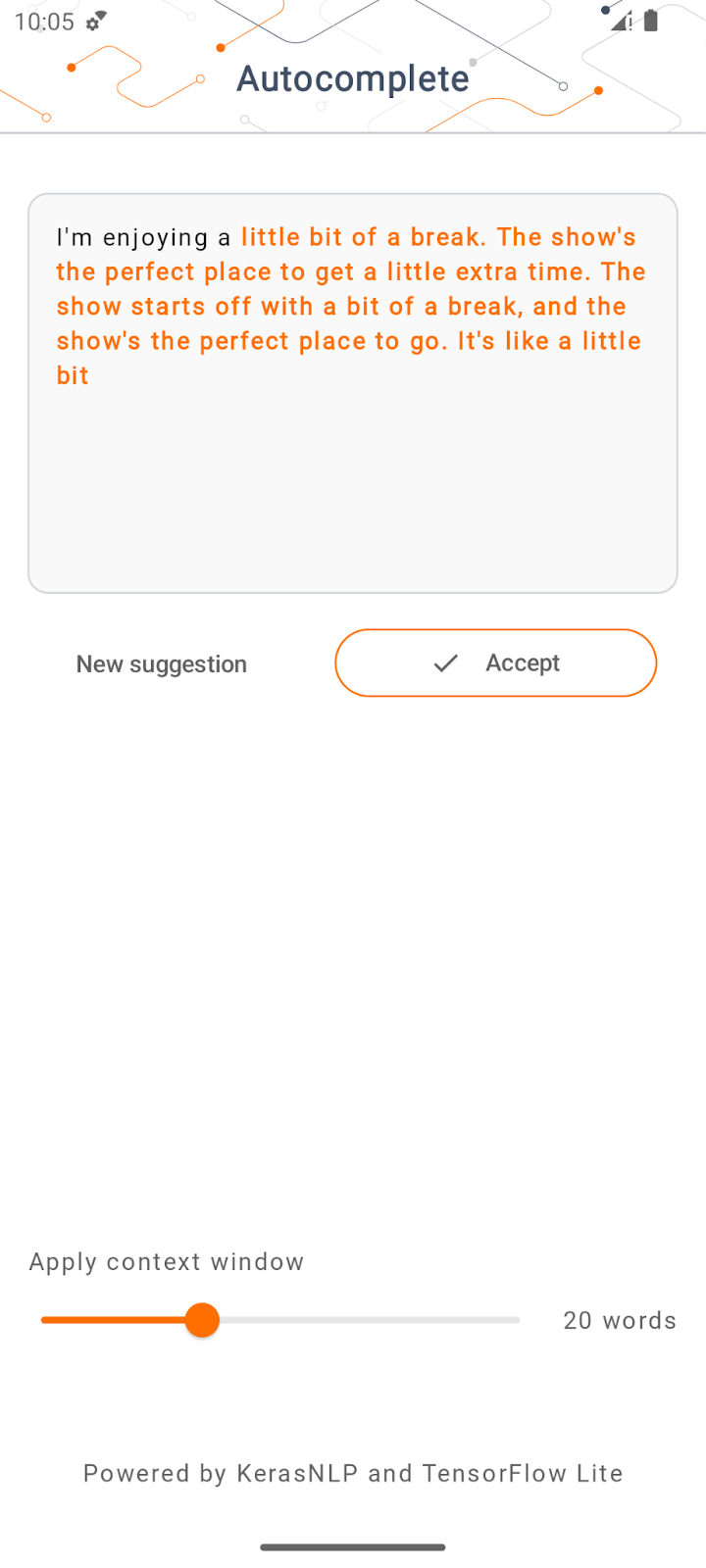

The app should launch on your Android device. The app is called 'Auto-complete'. The UI is straightforward: type some seed words in the text box and tap Generate; the app then runs inference on an LLM and generates additional text based on your input.
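To illustrate what generating additional text from seed words means, here is a toy sketch of autoregressive generation: predict one next word, append it to the text, and repeat. A bigram word-count table stands in for the LLM, so this is not how GPT-2 works internally; only the append-one-token-at-a-time loop is the same. The corpus and function names are hypothetical.

```python
from collections import Counter, defaultdict


def train_bigram(corpus):
    """Count which word follows which in a whitespace-tokenized corpus."""
    counts = defaultdict(Counter)
    words = corpus.split()
    for prev, nxt in zip(words, words[1:]):
        counts[prev][nxt] += 1
    return counts


def generate(counts, seed, max_new_words=5):
    """Greedily extend the seed one word at a time."""
    words = seed.split()
    if not words:
        return seed
    for _ in range(max_new_words):
        followers = counts.get(words[-1])
        if not followers:
            break  # no known continuation for the last word
        # Greedy decoding: always pick the most frequent follower.
        words.append(followers.most_common(1)[0][0])
    return " ".join(words)


counts = train_bigram("the cat sat on the mat and the cat sat down")
print(generate(counts, "the", max_new_words=2))  # the cat sat
```

A real LLM replaces the lookup table with a neural network that scores every token in its vocabulary, and often samples from those scores instead of always taking the top one.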

Right now, if you tap Generate after typing some words, nothing happens. This is because the app is not running an LLM yet.

4. Prepare the LLM for on-device deployment

- Open the Colab and run through the notebook (which is hosted in the TensorFlow Codelabs GitHub repository).
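The notebook performs the actual GPT-2 conversion. As a rough sketch of the general convert-and-quantize step, assuming TensorFlow is installed, the example below converts a tiny stand-in tf.Module (not GPT-2) to TensorFlow Lite with and without dynamic-range quantization, then runs the result through the TensorFlow Lite interpreter. The TinyModel class, its shapes, and the helper names are hypothetical. The real GPT-2 conversion may need additional converter settings, which the notebook covers.

```python
def convert_tiny_model():
    """Convert a stand-in model to TFLite; return (float_bytes, quantized_bytes)."""
    import tensorflow as tf  # imported lazily; requires TensorFlow

    class TinyModel(tf.Module):
        """Hypothetical stand-in for an LLM: a single matrix multiply."""

        def __init__(self):
            super().__init__()
            self.w = tf.Variable(tf.random.normal([256, 256]), name="w")

        @tf.function(input_signature=[tf.TensorSpec([1, 256], tf.float32)])
        def __call__(self, x):
            return tf.matmul(x, self.w)

    model = TinyModel()
    concrete = model.__call__.get_concrete_function()

    def convert(quantize):
        converter = tf.lite.TFLiteConverter.from_concrete_functions(
            [concrete], model
        )
        if quantize:
            # Post-training dynamic-range quantization of the weights.
            converter.optimizations = [tf.lite.Optimize.DEFAULT]
        return converter.convert()

    return convert(False), convert(True)


def run_tflite(model_bytes, x):
    """Run one inference on a single-input, single-output TFLite model."""
    import tensorflow as tf

    interpreter = tf.lite.Interpreter(model_content=model_bytes)
    interpreter.allocate_tensors()
    input_index = interpreter.get_input_details()[0]["index"]
    output_index = interpreter.get_output_details()[0]["index"]
    interpreter.set_tensor(input_index, x)
    interpreter.invoke()
    return interpreter.get_tensor(output_index)


def compression_ratio(float_size, quantized_size):
    """How many times smaller the quantized model is than the float model."""
    return float_size / quantized_size


def demo():
    """Call manually in an environment with TensorFlow installed."""
    import numpy as np

    float_model, quantized_model = convert_tiny_model()
    print("size ratio:", compression_ratio(len(float_model), len(quantized_model)))
    x = np.ones([1, 256], dtype=np.float32)
    print("output shape:", run_tflite(quantized_model, x).shape)
```

Quantized weights trade a small amount of numerical accuracy for a smaller file, so it is worth comparing outputs from the float and quantized models before shipping either.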

5. Complete the Android app

Now that you have converted the GPT-2 model into TensorFlow Lite, you can finally deploy it in the app.

Run the app

- Drag the autocomplete.tflite model file downloaded from the last step into the app/src/main/assets/ folder in Android Studio.

- Click Run in the navigation menu and then wait for the app to load.

- Type some seed words in the text field, and then tap Generate.

6. Notes on Responsible AI

As noted in the original OpenAI GPT-2 announcement, there are notable caveats and limitations with the GPT-2 model. In fact, LLMs today generally have some well-known challenges such as hallucinations, offensive output, fairness, and bias; this is because these models are trained on real-world data, which makes them reflect real-world issues.

This codelab is created only to demonstrate how to create an app powered by LLMs with TensorFlow tooling. The model produced in this codelab is for educational purposes only and not intended for production usage.

LLM production usage requires thoughtful selection of training datasets and comprehensive safety mitigations. To learn more about Responsible AI in the context of LLMs, make sure to watch the Safe and Responsible Development with Generative Language Models technical session at Google I/O 2023 and check out the Responsible AI Toolkit.

7. Conclusion

Congratulations! You built an app to generate coherent text based on user input by running a pre-trained Large Language Model purely on device!